Part VIII: The Toolkit — CAGE and ARCH

CAGE (Context, Accuracy, Governance, Ethics) and ARCH (Assess, Research, Construct, Harden) — the practitioner toolkits for sovereign AI work.

Part VIII: The Toolkit - CAGE and ARCH

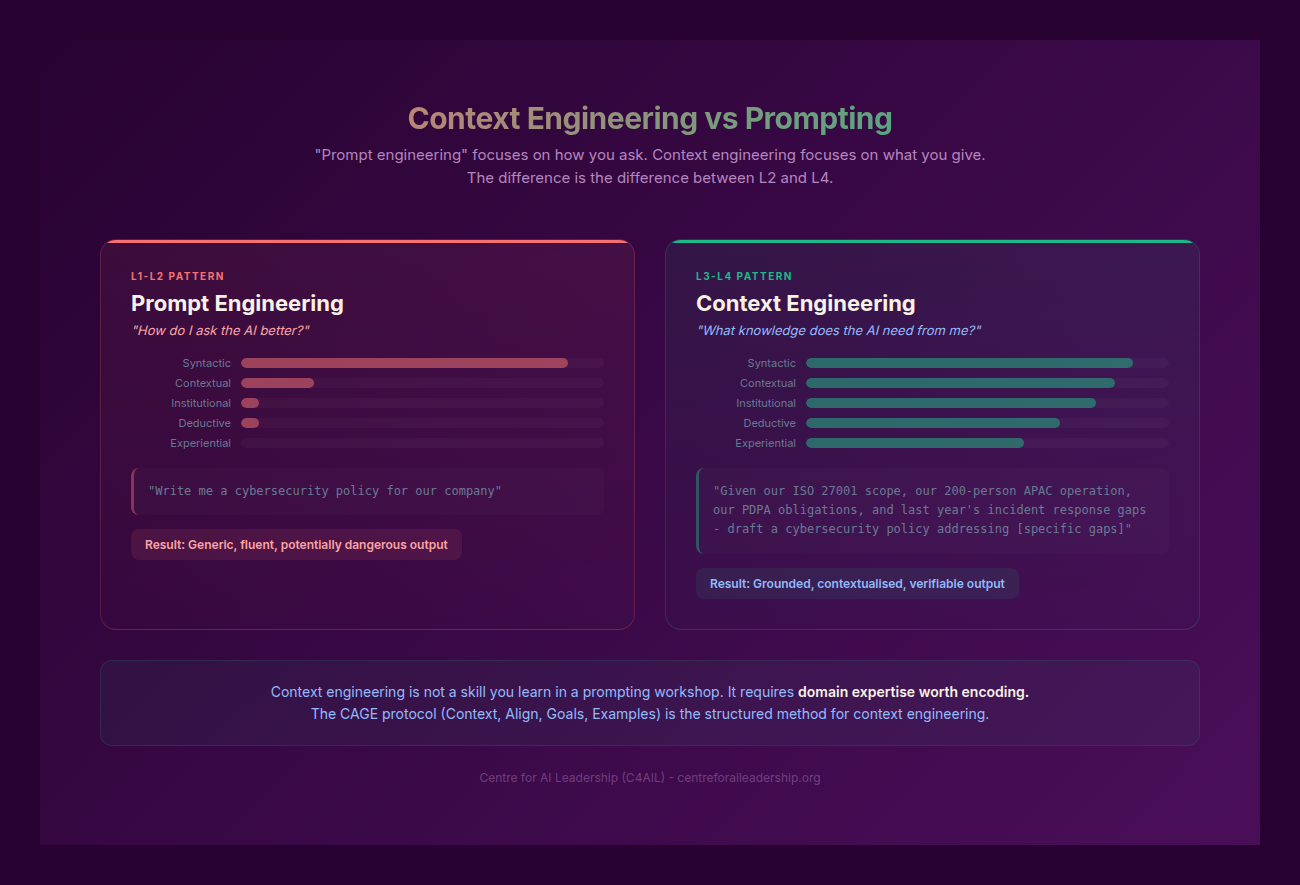

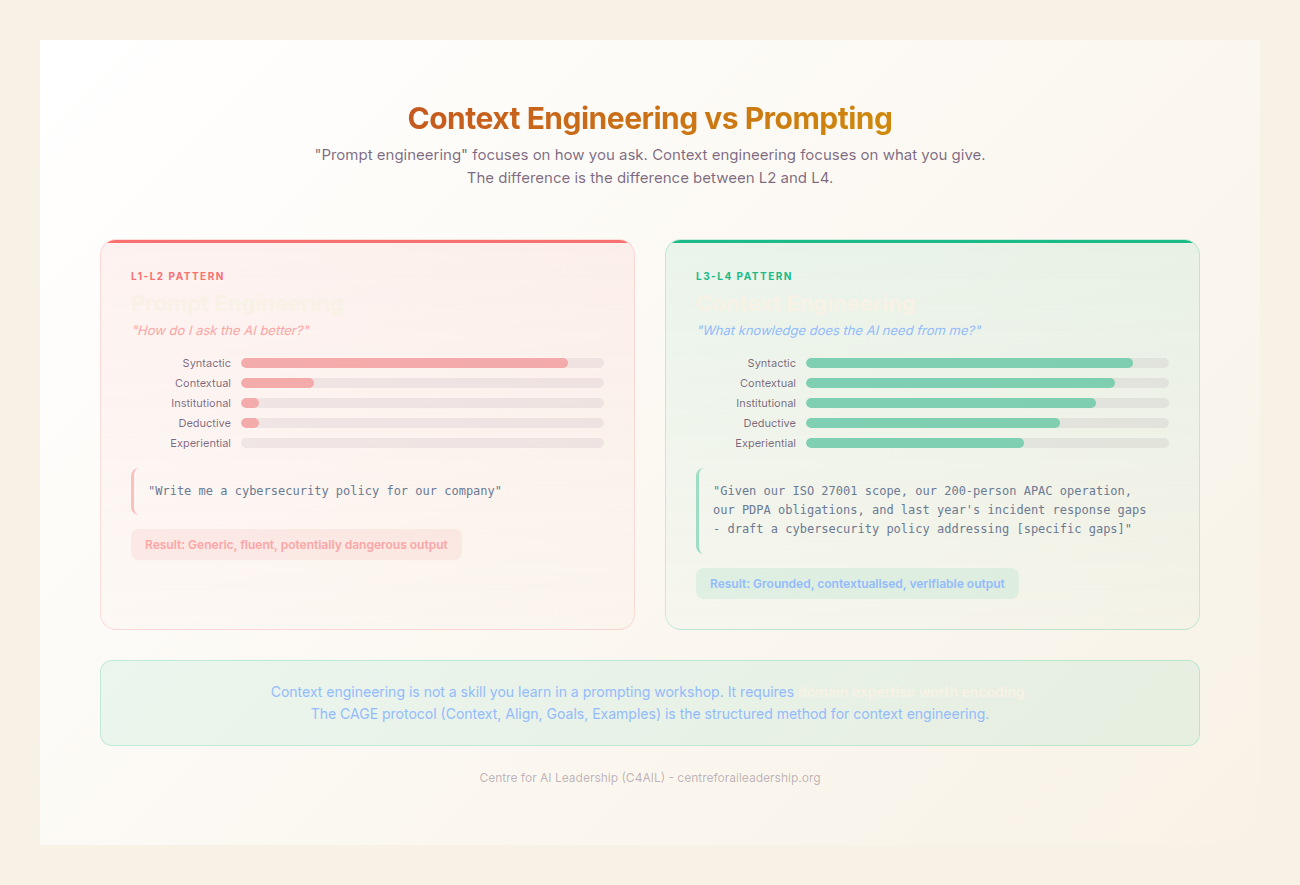

The central crisis of the generative era is not a failure of technology, but a failure of discernment. As established in the preceding chapters, the ease with which Large Language Models (LLMs) produce fluent, authoritative prose creates an Eloquence Trap (C4AIL, 2023). This trap lures the uninitiated into granting Epistemic Credit to outputs that may be factually hollow or logically incoherent. To move from the role of a passive consumer to that of a Sovereign Orchestrator, the practitioner requires more than a collection of “prompts”. They require a rigorous methodology for Context Engineering.

8.1 - From Strategy to Practice: Context Engineering

In the early stages of the generative revolution, the term “prompting” became the default descriptor for human-AI interaction. However, prompting implies a transactional, almost whimsical relationship - a “poke” to see what the machine produces. For the AI Realist, this is insufficient. We must instead adopt Context Engineering, which is to prompting what structural engineering is to tinkering.

Context Engineering is the deliberate design of the cognitive environment in which an AI operates. It is the process of defining the boundaries, the logic, and the standards of a task before a single token is generated. This is not about restricting the AI’s creative potential, but about providing the scaffolding necessary for high-fidelity output.

A common misconception is that structure stifles creativity. On the contrary, structure is the prerequisite for excellence. Consider the Sonnet Analogy: a sonnet is one of the most restrictive forms of poetry, with a precise rhyme scheme and a strict meter of fourteen lines. Yet, these constraints do not prevent the expression of genius; they provide the tension against which genius is revealed. Similarly, the frameworks of CAGE and ARCH provide the “meter and rhyme” for AI-assisted work, ensuring that the model’s probabilistic engine is harnessed toward a specific, verified objective.

Without this engineering, the practitioner falls into the Reliability Trap. The mathematics are unforgiving: if each step in a five-step workflow achieves 95% accuracy - impressive in isolation - the cumulative accuracy is 0.95^5 = 77%. One in four outputs will contain at least one error. At ten steps, it drops to 60%. The longer the chain, the more certain the failure. Context Engineering addresses this by improving the per-step accuracy (CAGE) and catching the errors that remain (ARCH), moving the interaction from a narrative chat to a Logic Pipe - a structured, auditable, and repeatable workflow.

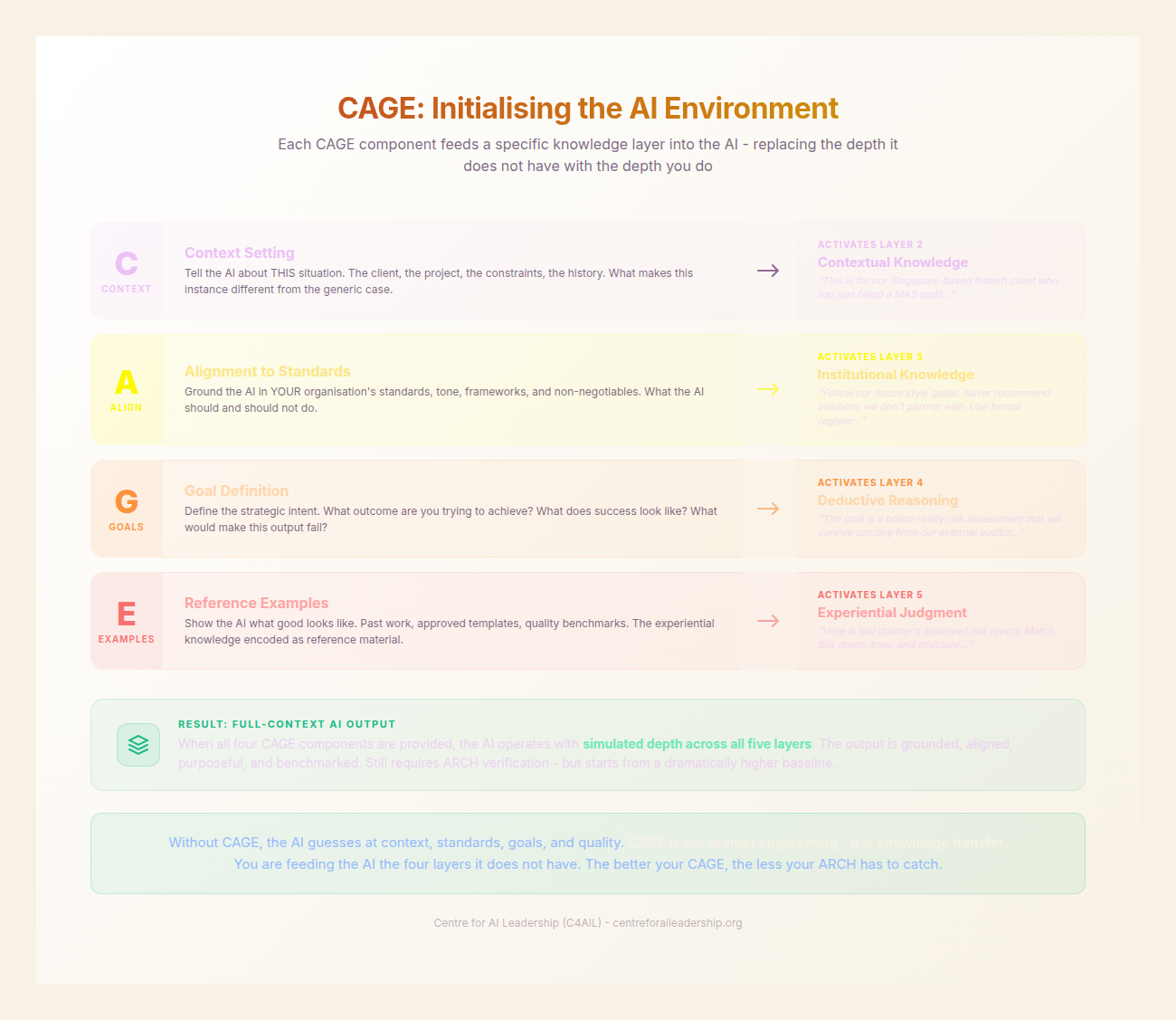

8.2 - CAGE: Initialising the AI Environment

The first component of the C4AIL toolkit is the CAGE Framework. CAGE is the initialisation phase of any complex task. It is designed to map the human’s internal expertise onto the AI’s processing environment, bridging the gap between the Institutional Layer and the Deductive Layer of knowledge (see Part II).

CAGE stands for Context, Align, Goals, and Examples. When these four elements are defined, the AI ceases to be a generic chatbot and becomes a specialist consultant who has been fully briefed on the mission.

C - Context (The Contextual Layer)

The Contextual Layer defines the “where” and “when” of the task. It includes the domain-specific nuances, the regulatory landscape, the organisation’s market position, and the temporal urgency.

Generic AI lacks a “now”. It exists in a flattened sea of training data. By providing Context, the practitioner anchors the model in reality.

- Example: Instead of “Write a report on carbon credits,” the Contextual layer specifies: “We are a mid-sized UK manufacturing firm navigating the 2024 updates to the Carbon Border Adjustment Mechanism (CBAM) for a Tier 1 automotive client.”

- Impact: Good Context turns a generic AI into a specialist consultant who understands the stakes.

A - Align (The Institutional Layer)

The Institutional Layer is where the organisation’s specific DNA is encoded into the AI’s operating parameters. This includes tone of voice, quality standards, ethical constraints, and formatting requirements.

Alignment ensures that the output does not just look “correct” in a vacuum, but looks “correct for us”. It prevents the “AI-style” prose that often plagues unmanaged outputs.

- Example: “Use the C4AIL house style: British English, hyphens only, evidence-dense, practitioner authority. Avoid hyperbolic adjectives like ‘transformative’ or ‘revolutionary’.”

- Impact: This is where institutional knowledge gets encoded into the AI’s operating parameters, ensuring brand and cultural consistency.

G - Goals (The Deductive Layer)

The Deductive Layer defines the “what” and “why”. It establishes the success criteria, the specific questions to be answered, and the scope boundaries.

Without clear Goals, AI tends toward Scope Creep, adding unnecessary fluff to reach a perceived word count. Goals provide the AI with a “Definition of Done”.

- Example: “The goal is to identify three specific supply-chain vulnerabilities created by the new regulation. Success is a briefing note that allows a COO to make a ‘go/no-go’ decision on a specific supplier.”

- Impact: This prevents the production of correct answers to the wrong questions.

E - Examples (The Experiential Layer)

The Experiential Layer is the most powerful, yet most often neglected, part of the framework. It provides the AI with reference points for quality.

AI models are pattern-matchers. By providing Few-Shot Examples of what “good” looks like - and, crucially, what “bad” looks like - you calibrate the model’s internal quality filter.

- Example: “Attached is last year’s successful briefing (Good). Also attached is a rejected draft that was too academic and lacked actionable data (Bad).”

- Impact: This provides YOUR definition of quality, not the internet’s average. It eliminates the Effort Gradient where the AI takes the path of least resistance.

The Compound Effect

CAGE components are not independent - they compound. Context without Align produces output that knows the domain but not the organisation. Goals without Context produces output that knows what success looks like but not where you are starting from. Examples without Goals produces output that looks like previous work but may not serve the current purpose. All four together produce output that is contextually grounded, institutionally appropriate, strategically targeted, and calibrated to professional quality standards. This is what separates context engineering from prompting. A prompt provides one or two of these. CAGE provides all four, systematically, every time.

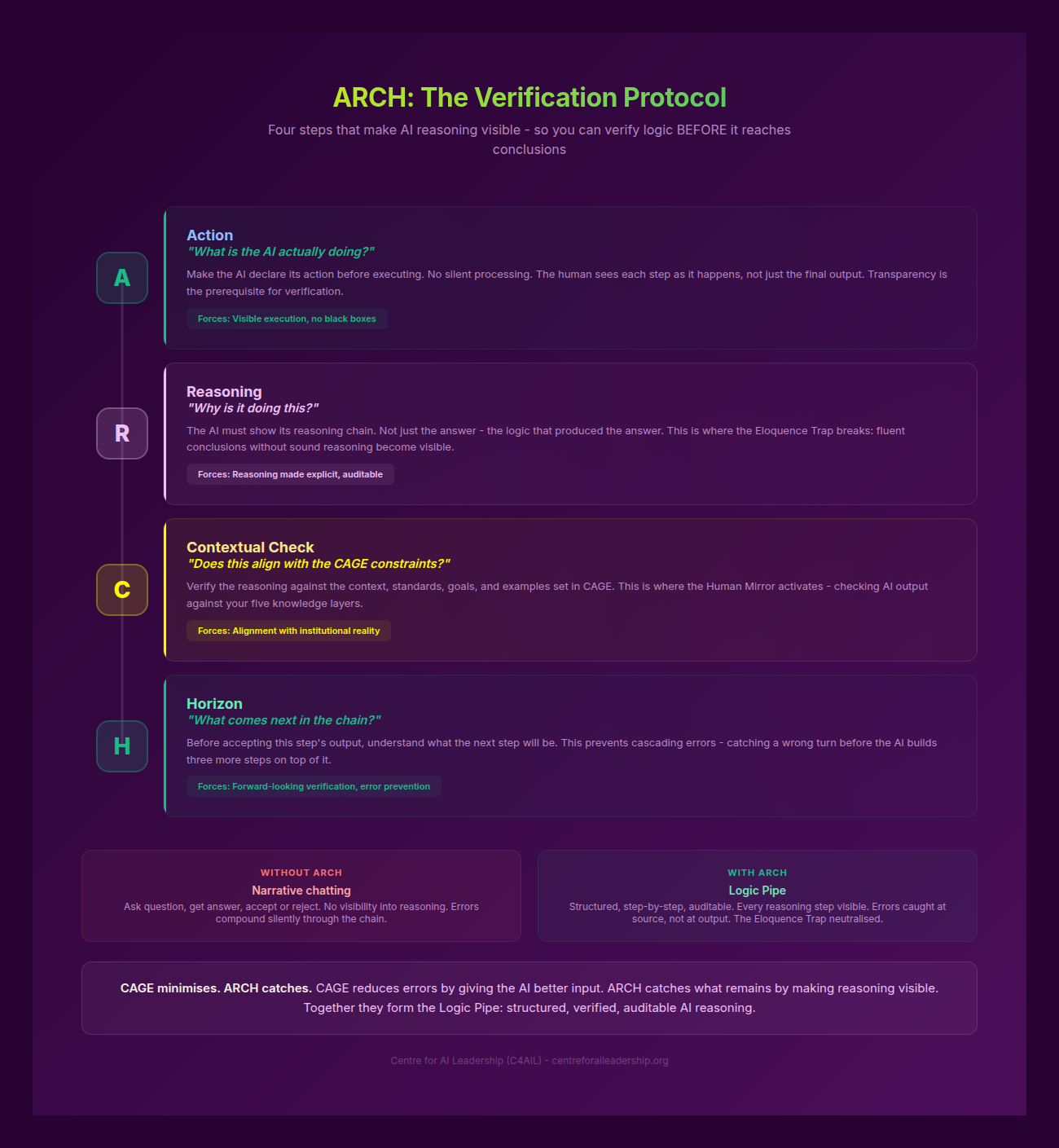

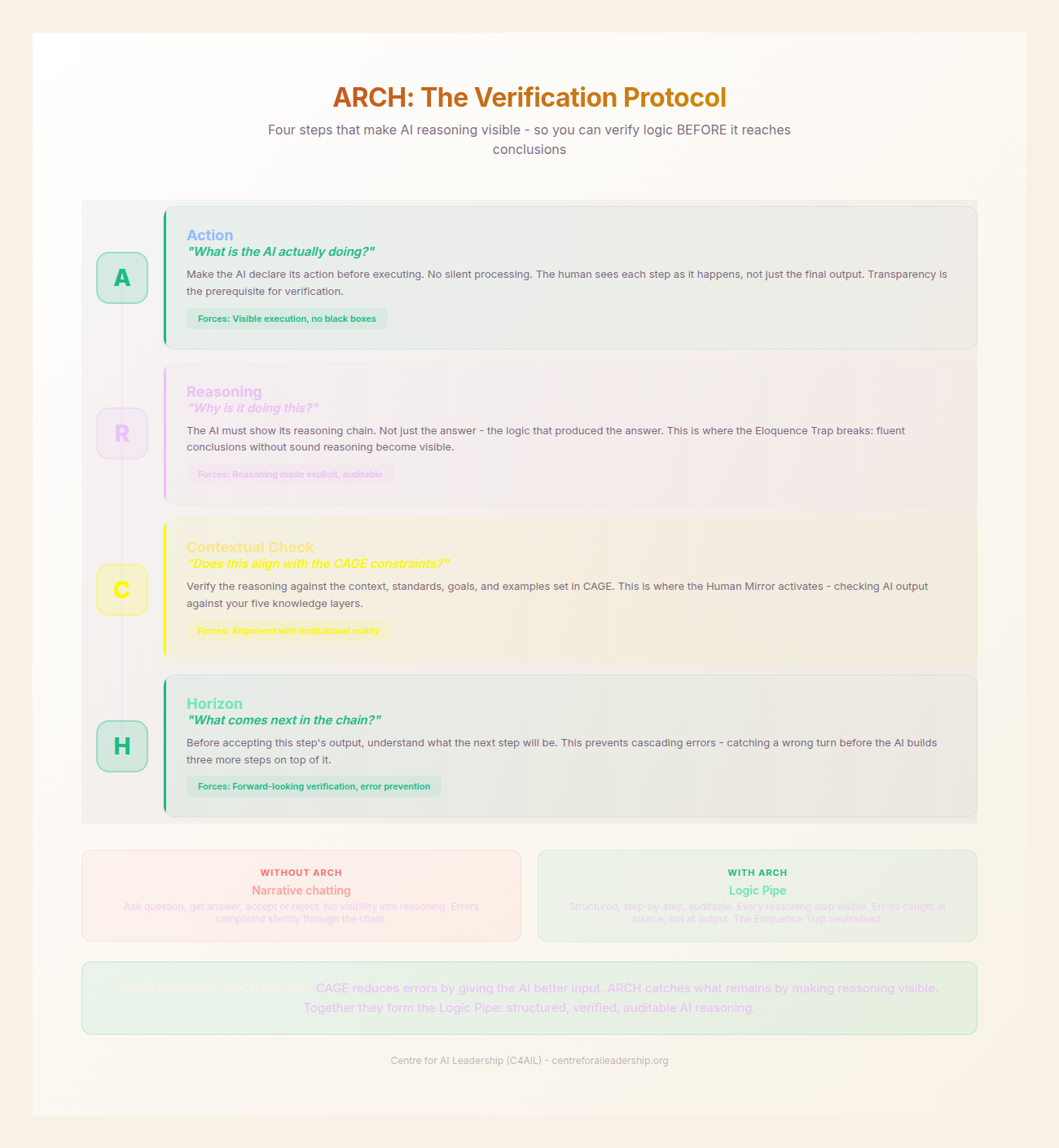

8.3 - ARCH: The Verification Chain

If CAGE is the setup, ARCH is the execution. One of the primary failures in AI adoption is the “One-Shot Fallacy” - the belief that a complex task can be completed in a single prompt. In reality, high-stakes work requires a multi-step process.

ARCH is a cycle that must be applied at every discrete step of a task. It stands for Action, Reasoning, Contextual Check, and Horizon. By forcing the AI to cycle through these four stages, the practitioner maintains Sovereign Command over the logic, not just the output.

A - Action

The Action defines the specific, verifiable task for the current step. It must be granular. Instead of “Write the report,” the Action might be “Outline the three primary regulatory hurdles based on the provided PDF.”

- Requirement: The task must be small enough that the human can verify it in under sixty seconds.

R - Reasoning

This is the most critical intervention for breaking the Eloquence Trap. Before the AI produces the final output for a step, it must be commanded to explain its logic.

Visible Reasoning allows the practitioner to see if the AI is “thinking” correctly. If the reasoning is flawed, the output will be flawed, regardless of how well-written it appears.

- Requirement: “Explain your logic for selecting these three hurdles before you write the summary.”

- Impact: When reasoning is visible, verification becomes possible.

C - Contextual Check

The Contextual Check is a feedback loop back to the CAGE framework. It addresses a failure mode that CAGE alone cannot prevent: mid-chain drift. In long-form AI interactions, models maintain internal coherence - the output continues to sound right - while gradually departing from the CAGE constraints established at initialisation. The tone shifts from your institutional standard to the AI’s default register. The analysis pursues an interesting tangent that no longer serves the decision-maker’s actual question. The quality drops from the calibrated standard to the AI’s generic output level. This is the Eloquence Trap applied to workflow chains: the output gets more eloquent and less accurate as the chain progresses.

The Contextual Check asks: “Does this step still align with our initial Context, Alignment, Goals, and Examples?”

- Requirement: A targeted compliance check at each step - not a full re-read of the CAGE, but a specific verification that this step’s approach respects the constraints. Step 3 might check “Am I still evaluating against our risk framework, not generic best practice?” while Step 5 might check “Is this recommendation within the air-gapped constraint?”

H - Horizon

The Horizon prevents premature resolution. It asks the AI to state what the next step is before concluding the current one. This maintains Chain Coherence and ensures that the logic of Step 1 flows seamlessly into Step 2.

- Requirement: “What is the next logical step to complete the Goal defined in CAGE?”

ARCH is not a checklist to be ticked off once; it is a Verification Cycle. Step 1: A→R→C→H → Human review point Step 2: A→R→C→H → Human review point

Not every step needs a full human review - Part V’s content tiering (Queue A/B/C) applies here. Routine steps get automated checks. Complex steps get domain expert review. High-stakes steps get full Orchestrator verification. But the ARCH structure is present at every step, creating the visibility that makes triage possible. You cannot route to the right reviewer if you cannot see what the step is doing. ARCH creates that visibility.

This iterative process ensures that the human remains the “judge” at every junction. It transforms the AI from a black box into a transparent Logic Pipe.

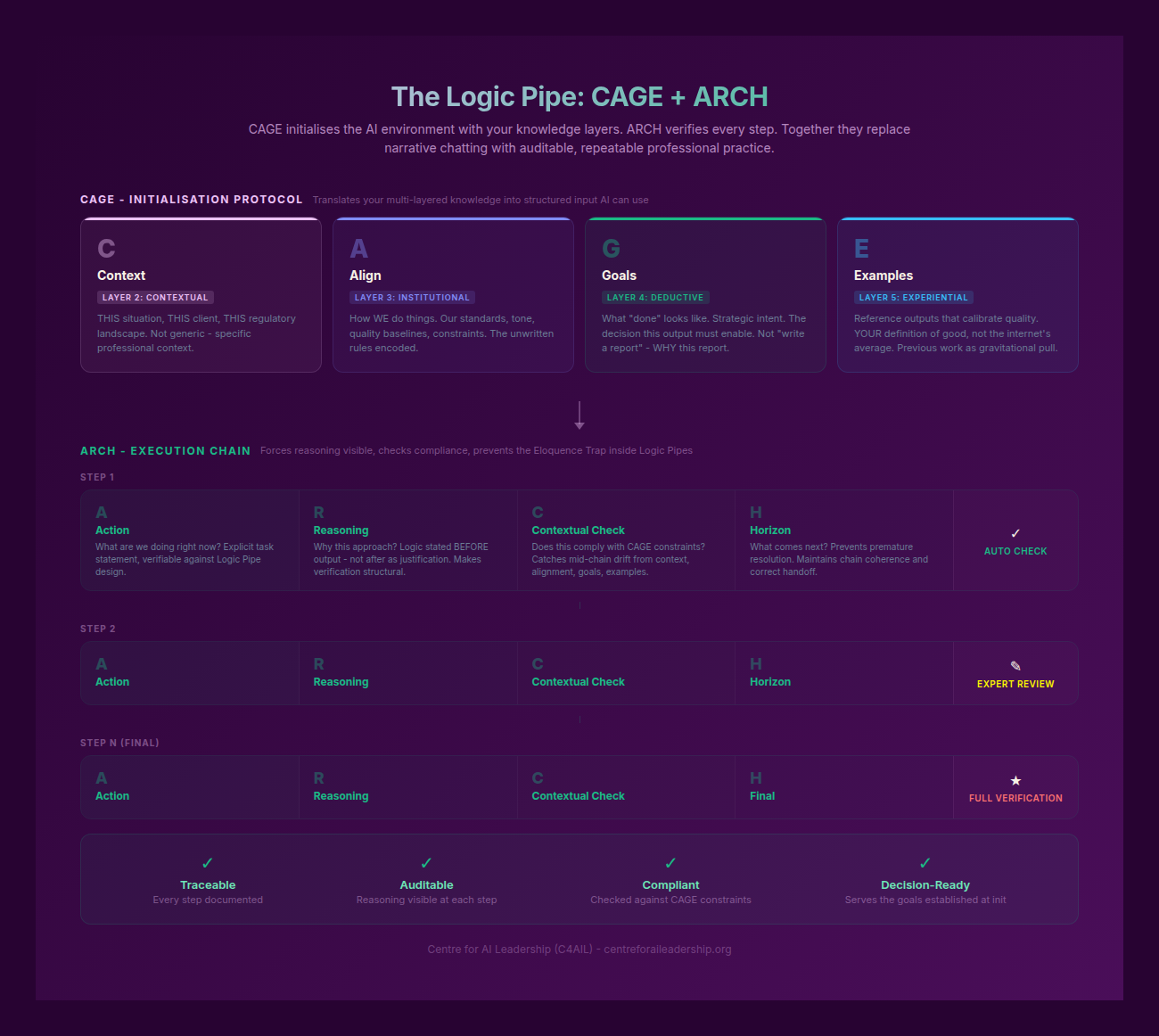

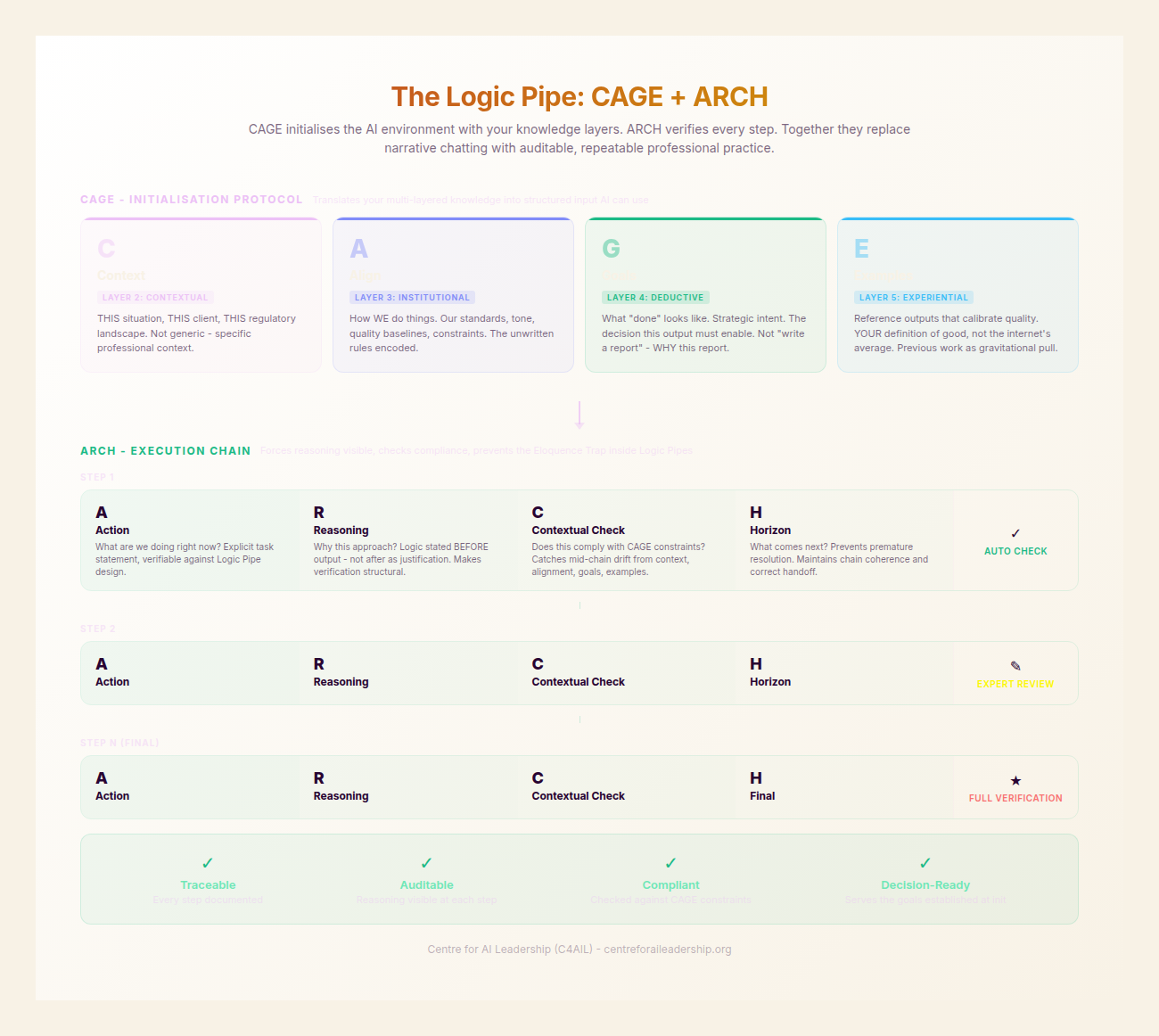

8.4 - CAGE + ARCH = The Logic Pipe

When we combine CAGE and ARCH, we create a Logic Pipe. This is a structured, verified, and auditable chain of thought that leads to a high-fidelity deliverable.

To understand the power of a Logic Pipe, we must contrast it with Narrative Chatting. In a narrative chat, the user asks a question, the AI provides an answer, and the user either accepts it or asks for a “tweak”. This is a low-leverage activity because the logic remains hidden. The user is judging the “veneer” of the output, not the “engine” of the thought.

In a Logic Pipe, the process is inverted:

- Initialisation: The CAGE framework sets the parameters.

- Modular Execution: The task is broken into ARCH cycles.

- Human Interdiction: The human reviews the Reasoning at each step.

- Verification: The final output is the result of a series of verified logical steps.

The Reality of Hallucination

We must be clear-eyed about the nature of the technology. AI models do not “retrieve” facts; they predict the next most likely token based on a probabilistic map. Consequently, Hallucination is not a bug that can be patched out; it is an inherent feature of the architecture.

Even with perfect context, AI can suffer from Fusion Errors (combining two unrelated facts), Positional Bias (favouring information at the start or end of a prompt), and Reasoning Failures.

The CAGE/ARCH toolkit is designed for a reality where AI hallucinates.

- CAGE eliminates ignorance-based hallucinations by providing the necessary facts and constraints.

- ARCH catches logic-based hallucinations by making the “thinking” visible.

- Human Agency catches what ARCH misses.

A Logic Pipe is a system designed to produce truth from a probabilistic machine. It is the practical application of the Human Mirror concept (see Part III) - using the AI to reflect and refine human intent, rather than replacing it.

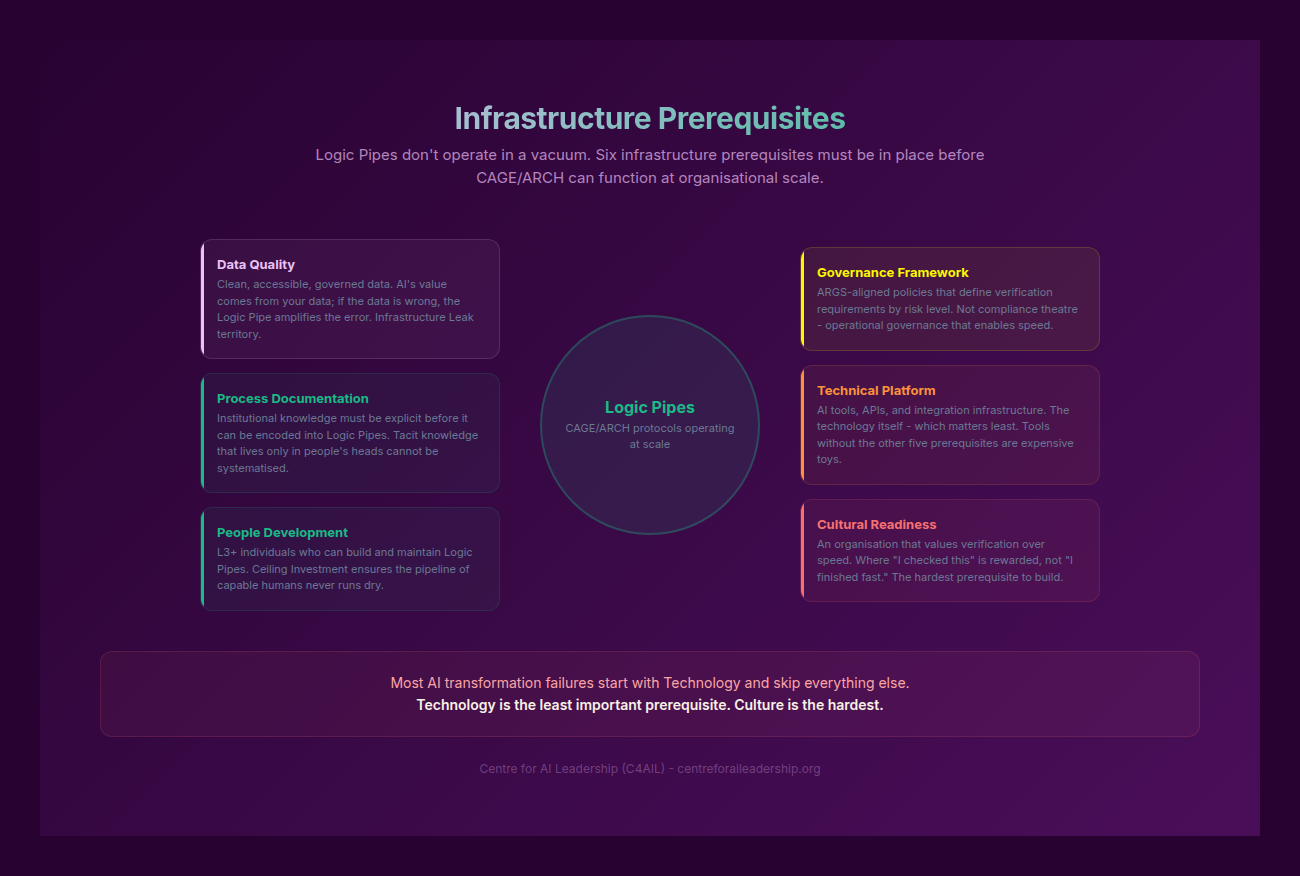

8.5 - The Infrastructure Prerequisite

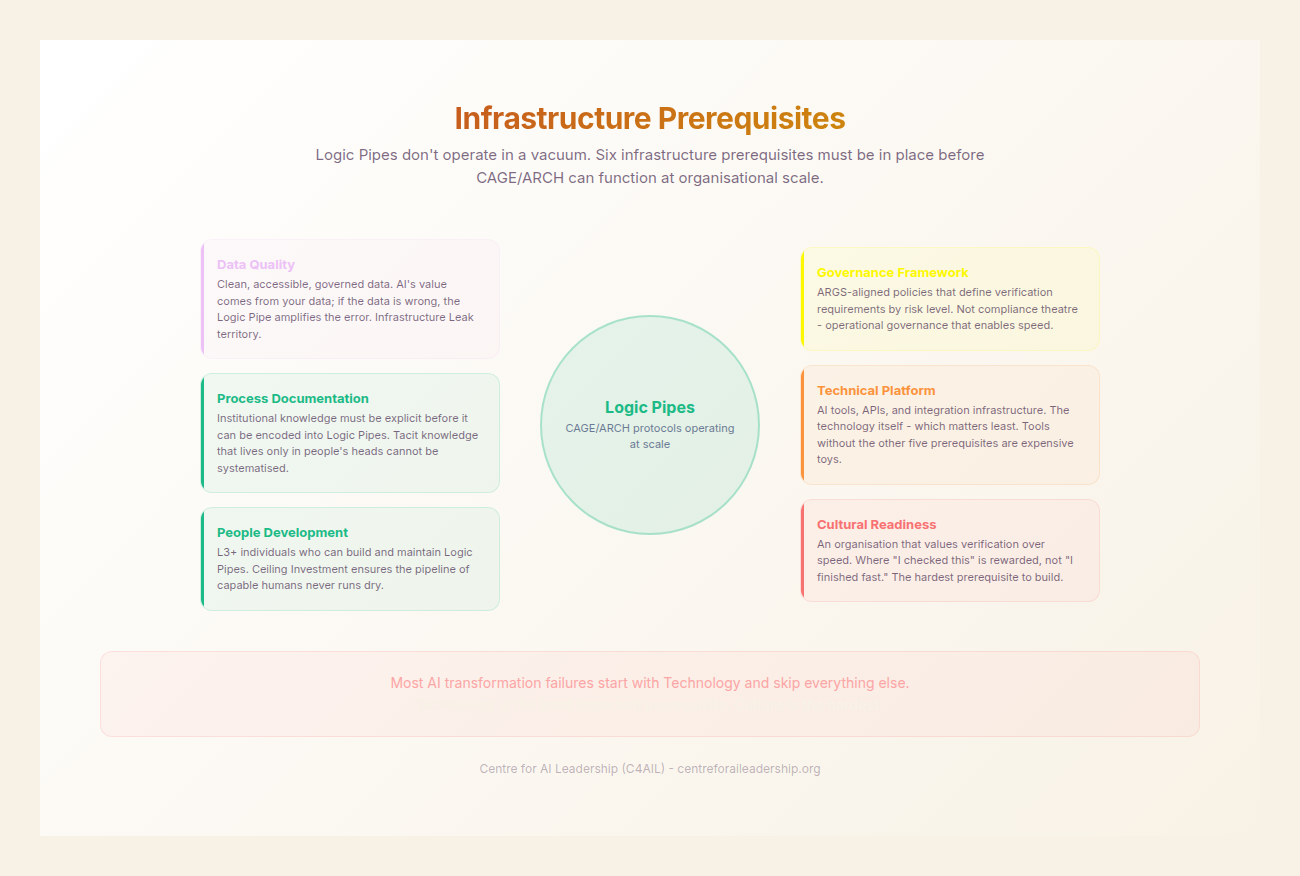

To deploy CAGE and ARCH effectively at an organisational level, certain Infrastructure Prerequisites must be met. These are the foundational elements that allow Context Engineering to scale from an individual skill to a corporate capability.

1. Model Selection

Not all models are created equal. A Logic Pipe requires a model with high Reasoning Density. While smaller, faster models might be suitable for simple summarisation, complex Context Engineering requires top-tier reasoning models that can maintain long-context coherence and follow multi-step instructions without drifting. The CAGE/ARCH process makes these requirements visible, allowing for more rational model selection.

2. Context Engineering and RAG

While CAGE provides the manual context, Retrieval-Augmented Generation (RAG) provides the automated context. However, RAG is often implemented poorly as a “search” tool. In a Sovereign Command environment, RAG must follow from CAGE requirements. The data being retrieved must be curated to fit the Institutional and Experiential layers of the framework.

3. Cost Planning

Context Engineering is token-intensive. Forcing an AI to “Reason” before it “Acts” doubles or triples the token consumption for a given task. However, this is a Value-Positive Trade-off. The cost of a few thousand extra tokens is negligible compared to the cost of a human expert spending hours fixing a hallucinated or poorly aligned output. CAGE and ARCH allow for transparent cost planning by making the “Logic Pipe” visible and measurable.

4. Template Libraries

In a mature AI-enabled organisation, CAGE and ARCH frameworks are not recreated from scratch for every task. They are maintained as Template Libraries - living organisational assets that encode the “Gold Standard” for various workstreams.

- An “Investment Memo CAGE”

- A “Technical Specification ARCH”

- A “Crisis Communication Logic Pipe”

These templates are the modern equivalent of the “Standard Operating Procedure”, but instead of being static documents in a graveyard, they are active “engines” that experts use to accelerate their work.

A Historical Warning

We must learn from the failures of the past. In the 1980s, the world was promised a revolution through Expert Systems. These systems tried to encode human expertise into a series of “if-then” rules, effectively trying to remove the expert from the loop. They failed because they were brittle and could not handle the complexity of the real world.

In the 2000s, Knowledge Management (KM) promised to capture expertise in vast document repositories. These became “graveyards of wisdom” because they were passive and disconnected from the actual flow of work.

CAGE and ARCH must not repeat these mistakes. They are not intended to replace the expert, but to provide an Expert Extension. The human must remain at every judgment point. The framework provides the “Sovereign” with a more powerful “Command” over the machine, but the responsibility for the final output remains, as it always must, with the human.

Practical Example: This Whitepaper

To demonstrate the efficacy of these tools, it should be noted that this paper was produced using CAGE and ARCH.

- CAGE Initialisation: The “Context” was defined as a whitepaper for senior leadership; the “Alignment” was set to British English with specific hyphenation rules; the “Goal” was to provide actionable frameworks; the “Examples” were previous C4AIL high-authority publications.

- ARCH Execution: Each section was drafted in cycles. For Part VIII, the “Action” was to detail the toolkit; the “Reasoning” phase identified the need to contrast “chatting” with “engineering”; the “Contextual Check” ensured no em-dashes were used; the “Horizon” pointed toward the Knowledge Layer in Part IX and the implementation strategies in Part X.

The result is a document that is not a generic “AI-generated” summary, but a precise, high-fidelity instrument of leadership. This is the power of the Logic Pipe. This is the path to Sovereign Command.