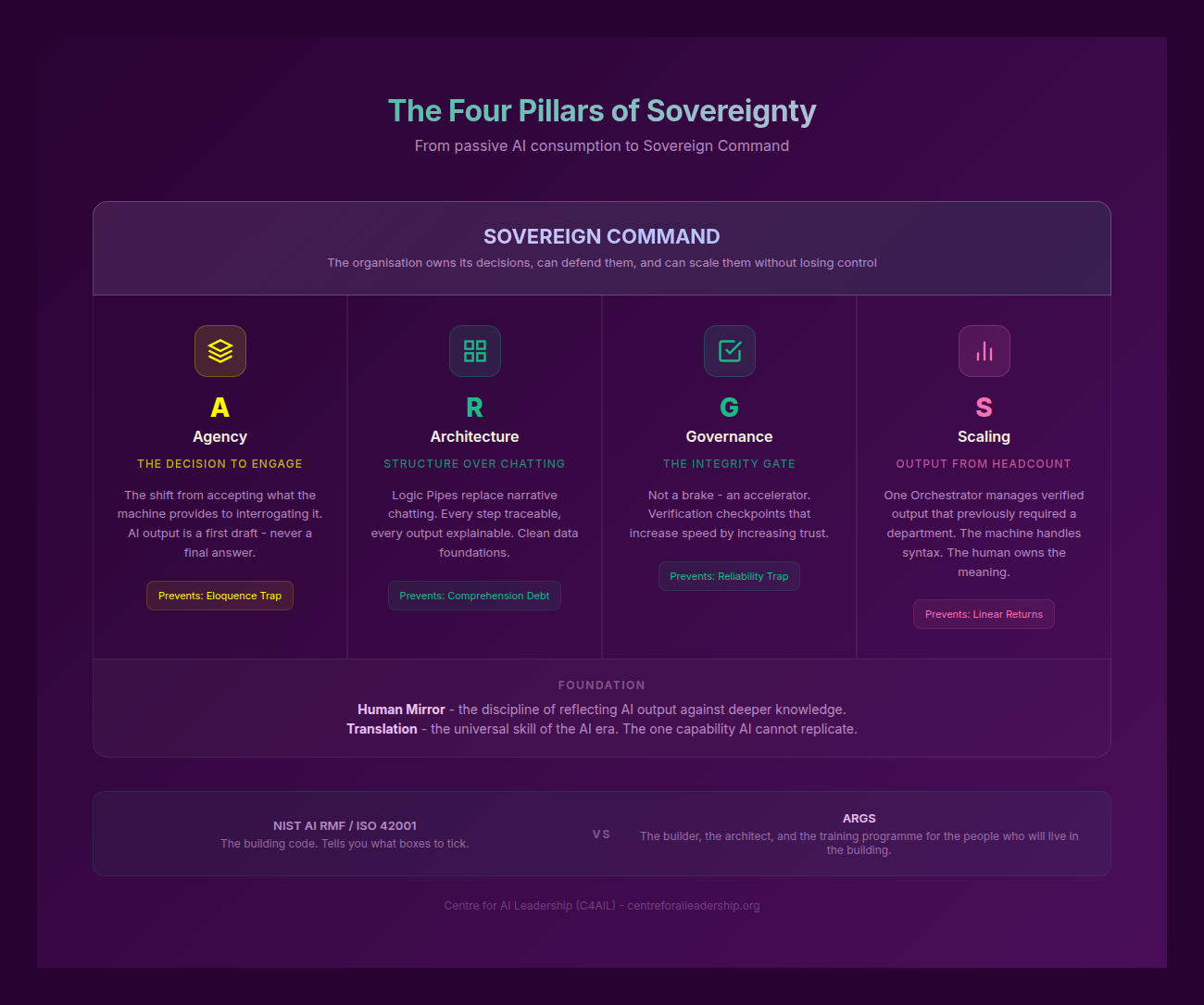

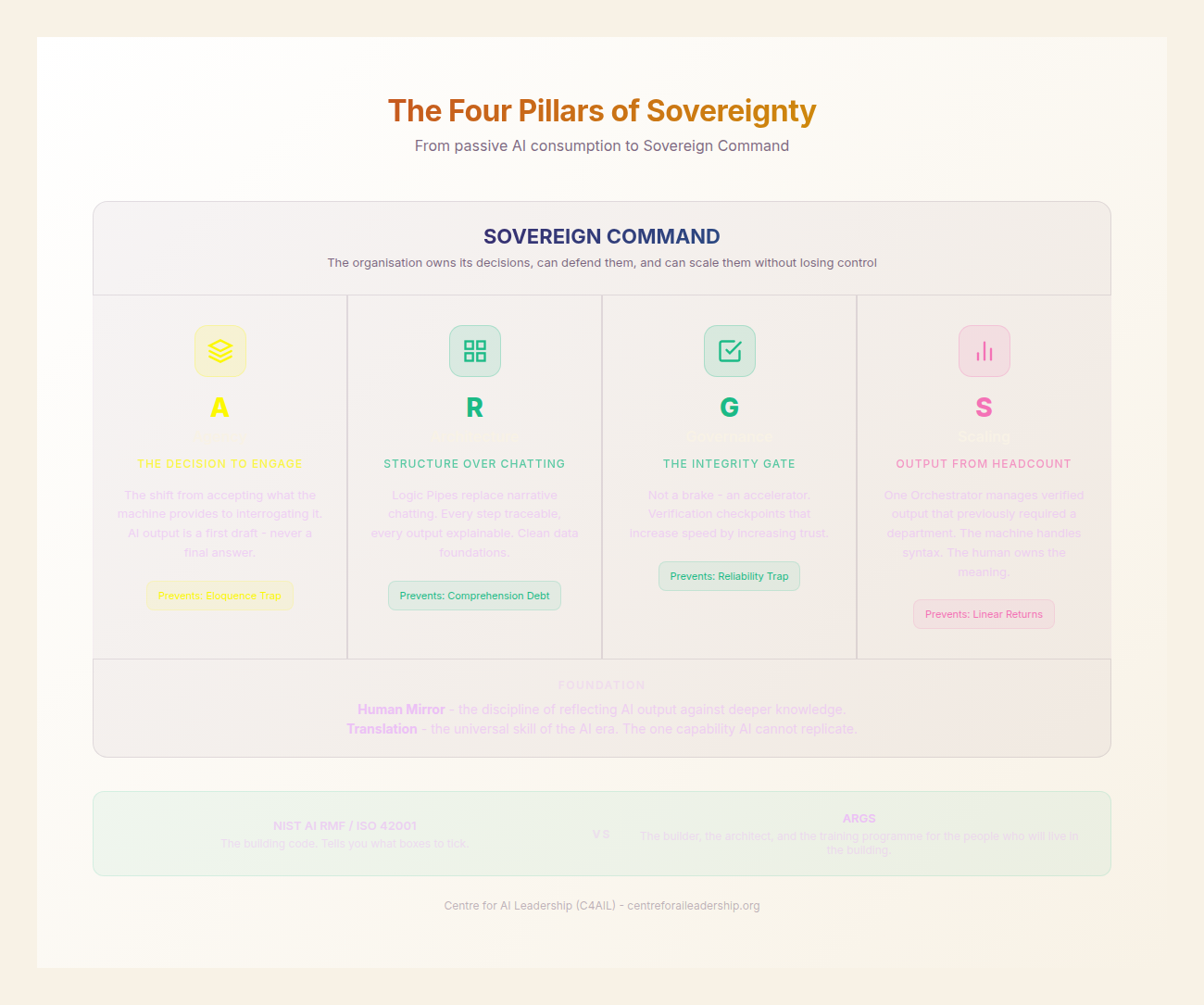

Part VI: The Strategy — Four Pillars of Sovereignty (ARGS)

Agency, Architecture, Governance, Scaling — the four disciplines that separate the 5% from the 95%.


The central crisis of the generative era is not a failure of technology, but a failure of discernment. As we have established in previous chapters, the arrival of Large Language Models (LLMs) has granted us access to a cognitive engine of unprecedented scale, yet most organisations remain trapped in a cycle of tactical experimentation. They are collecting tools without a compass. They are training for skills without a strategy.

6.1 - Why Strategy, Not Just Skills

In the early days of the generative revolution, the focus was almost entirely on “prompt engineering” - the artisanal craft of coaxing a specific output from a black box. However, we have reached the limits of this individualistic approach. Strategy must precede skill. Without a coherent strategic framework, individual productivity gains are lost to Leverage Leaks - a phenomenon where time saved by an employee via AI is simply consumed by more low-value tasks or administrative friction, rather than being reinvested into high-value cognitive work.

My key message is this: if you focus on skills alone, you are merely accelerating the production of Workslop - low-quality, unverified AI output that clogs the arteries of the organisation. To achieve true Sovereign Command, we require a structural framework that bridges the gap between individual capability and organisational outcomes.

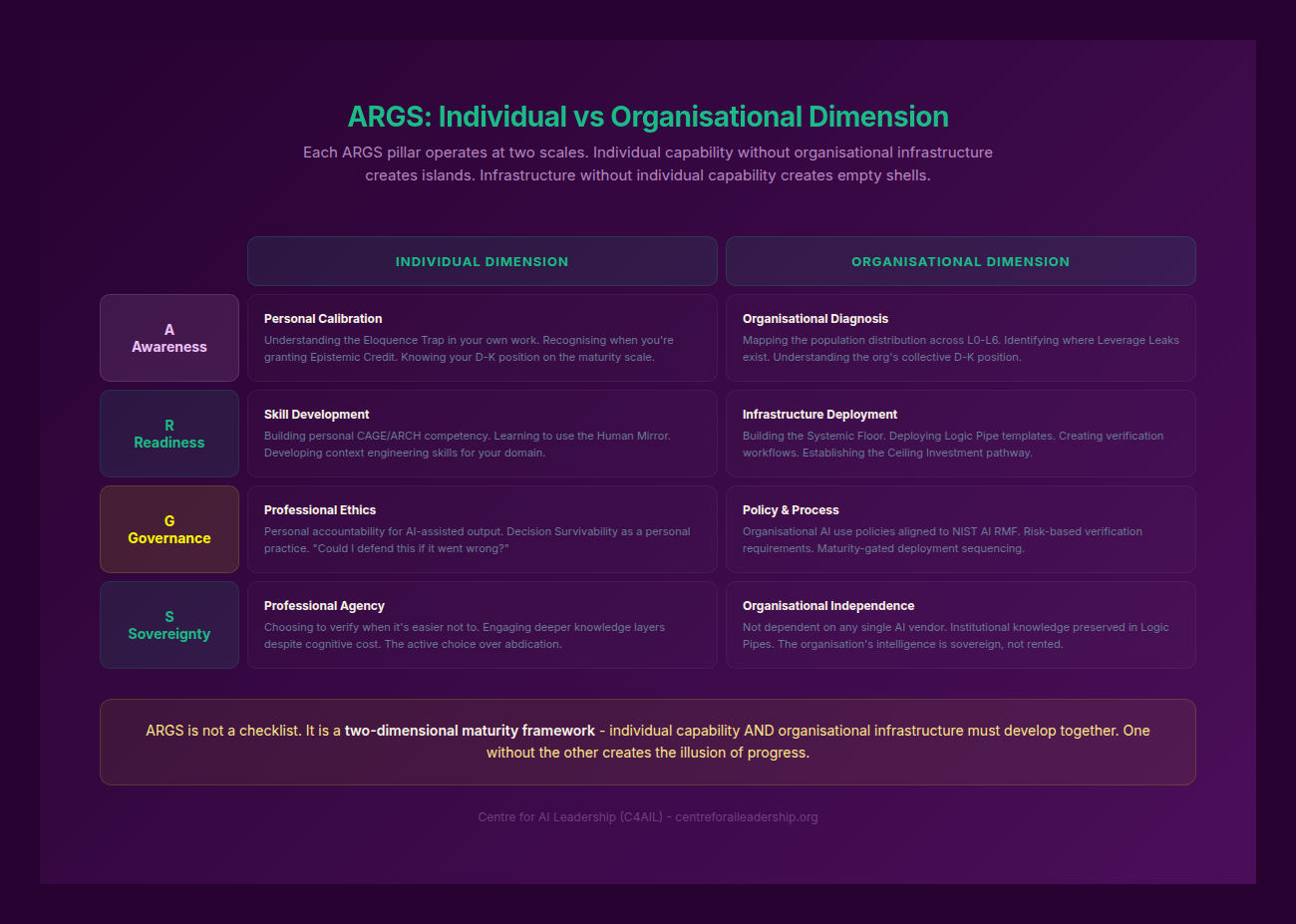

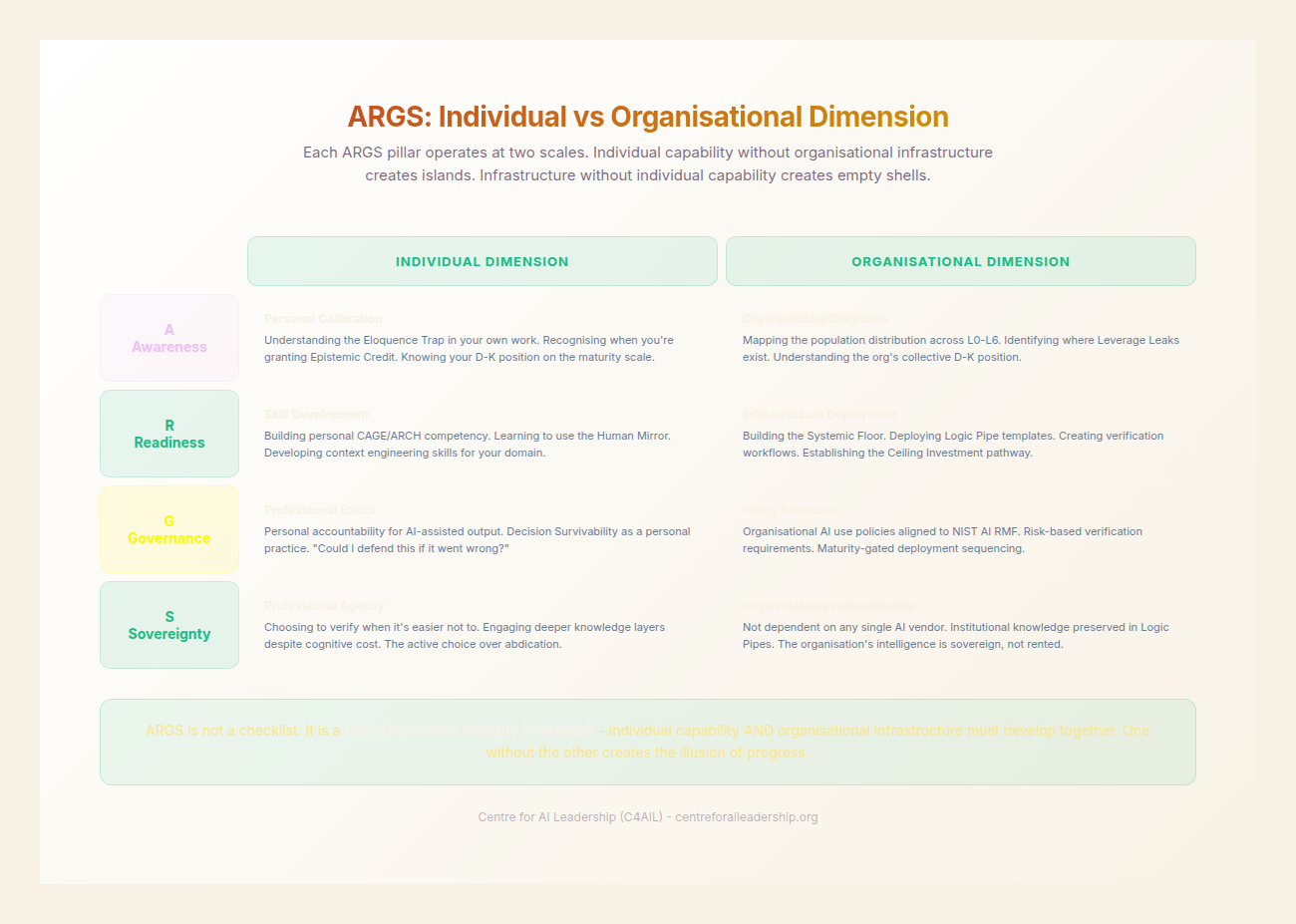

We call this framework ARGS: Agency, aRchitecture, Governance, and Scaling. (The R in ARGS maps to aRchitecture, which represents the structural design of our cognitive systems.)

The data supports this shift toward structural redesign over mere tool adoption. A BCG study (2025) found that while many firms are experimenting, only 5% of organisations have become Value Generators - those who have integrated AI into their core business model. These leaders are seeing 5x the revenue growth compared to laggards (BCG, 2025). Similarly, research from Bain (2025) indicates that while tool-only deployment yields 10-15% efficiency gains, true workflow redesign - a strategic overhaul of how work happens - delivers gains of 25-30% (Bain, 2025).

The ARGS framework is designed to prevent the Verification Bottleneck, where the speed of AI generation outpaces the human capacity to validate it. It moves the user from an L1 Assisted User or L2 Power User to an L3 Competent Integrator and beyond, ensuring that every gain in speed is matched by a gain in sovereignty.
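The Verification Bottleneck behaves like a queue: whenever outputs are generated faster than a human can verify them, the unverified backlog grows every hour. The following is a minimal Python sketch, assuming constant, hypothetical generation and verification rates. Real workloads are burstier, but the direction of the effect is the same.

```python
def verification_backlog(generated_per_hour: float, verified_per_hour: float, hours: int) -> list[float]:
    """Unverified outputs still waiting for review at the end of each hour.

    Toy model: outputs arrive at a constant rate, a reviewer clears them at a
    constant rate, and the backlog can never fall below zero.
    """
    backlog = 0.0
    history = []
    for _ in range(hours):
        backlog = max(0.0, backlog + generated_per_hour - verified_per_hour)
        history.append(backlog)
    return history


# Hypothetical rates: the AI drafts 12 outputs per hour, one reviewer verifies 5.
print(verification_backlog(12, 5, 8))  # [7.0, 14.0, 21.0, 28.0, 35.0, 42.0, 49.0, 56.0]
```

By the end of a working day, 56 outputs are waiting for a check that will never catch up; the only options are to skim (and surrender Agency) or to redesign the workflow.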

6.2 - Agency: The Human Non-Negotiable

Agency is the first and most critical pillar. It is the refusal to be a passive consumer of AI outputs. In the generative era, Agency is defined as the active exercise of human judgment to direct, interrogate, and validate machine intelligence. It is the choice to apply the deeper four of the Five Knowledge Layers from Part II (Contextual, Institutional, Deductive, and Experiential) rather than accepting the surface layer, Syntax, alone. It is the claiming of Accountability Labour (Part I): the recognition that while AI handles the intellectual labour at machine speed, the human retains the ethical oversight, the risk ownership, and the judgment that says “this output is trustworthy enough to act on.”

Agency is not AI scepticism - the blanket distrust that refuses to use the tools at all. It is not hand-checking every output - that would recreate the Verification Bottleneck at scale. And it is not a one-time decision - it is a daily practice, the Human Mirror from Part III applied as a professional habit.

Individual Dimension: The Habit of Interrogation

At the individual level, Agency manifests as a Habit of Interrogation. It is the psychological discipline to resist the Eloquence Trap - the tendency to trust an AI simply because its prose is fluent and confident.

Let me be honest: my number one concern for the modern professional is the erosion of verification discipline. When a model produces a 2,000-word report in seconds, the temptation to skim and approve is nearly overwhelming. Agency requires a Subject-Object shift (Kegan, 1982). Instead of being “subject to” the AI - where the AI’s logic flows through you unchecked - you must make the AI the “object” of your scrutiny.

This involves an active choice to engage deeper layers of the task. An individual with high Agency uses the ARCH (Action, Reasoning, Contextual Check, Horizon) framework to ensure they are not just “prompting” but “commanding.” They ask themselves: “Can I hold ‘this is brilliant’ and ‘this might be wrong’ simultaneously?” This capacity to hold two opposing judgments at once, without rushing to resolve them, is the hallmark of the L4 Strategic Modifier.

Organisational Dimension: A Culture of Questioning

For an organisation, Agency is not just a personal trait but a cultural mandate: a culture that rewards questioning over speed. In a low-Agency organisation, managers ask, “Why did it take you ten minutes when the AI could do it in one?” In a Sovereign organisation, the manager asks, “How did you verify this?” and “What did the AI miss?”

Organisational Agency requires giving employees permission to challenge AI output, even when that output aligns with the company’s prevailing biases. This means measuring verification quality rather than adoption volume. If your KPIs only track how many people are using the tool, you are incentivising the surrender of Agency. You must instead track “human-in-the-loop” interventions: the instances where human judgment corrected a hallucination or refined a strategic direction.
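One way to make “measure verification quality, not adoption volume” concrete is to record, for each AI output, whether a human checked it and whether that check changed anything. The sketch below assumes a hypothetical `Review` record; the field names and the three metrics are illustrative, not an established standard.

```python
from dataclasses import dataclass


@dataclass
class Review:
    """One AI output and what happened to it (hypothetical record format)."""
    verified: bool   # did a human actually check the output before it was used?
    corrected: bool  # did that check change the output (e.g. caught a hallucination)?


def adoption_volume(reviews: list[Review]) -> int:
    # The vanity metric: how many AI outputs were produced and used.
    return len(reviews)


def verification_rate(reviews: list[Review]) -> float:
    # Share of outputs that received a human check before use.
    return sum(r.verified for r in reviews) / len(reviews) if reviews else 0.0


def intervention_rate(reviews: list[Review]) -> float:
    # Share of verified outputs where human judgment changed the result.
    checked = [r for r in reviews if r.verified]
    return sum(r.corrected for r in checked) / len(checked) if checked else 0.0


week = [Review(True, True), Review(True, False), Review(False, False), Review(True, False)]
print(adoption_volume(week), verification_rate(week), round(intervention_rate(week), 2))  # 4 0.75 0.33
```

Read together, these separate activity from judgment: adoption volume can rise while the verification rate falls. An intervention rate that stays at zero across hundreds of reviews is worth investigating, since it may indicate rubber-stamping rather than flawless output.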

The Attitude Contradiction: Hiring for Agency, Building Against It

The depth of this challenge becomes visible when you examine the structural contradiction most organisations carry without noticing it. In high-stakes fields - cybersecurity, clinical medicine, engineering, law - the hiring wisdom is “hire for attitude.” The attitude they select for is initiative, accountability, and the willingness to push back when something looks wrong. This is Agency. It is the capacity to hold “this is brilliant” and “this might be wrong” simultaneously - the hallmark of the L4 Strategic Modifier described above.

But the management structures these professionals enter reward the opposite. Follow the process. Defer to the senior partner’s conclusion. Do not slow down the team. Get your ticket count up. The performance system measures compliance, speed, and volume - all Stage 3 (Socialised Mind) behaviours, the stage that Kegan’s developmental research shows 58% of adults default to. Organisations select for Stage 4 (Self-Authoring Mind) capability at the hiring gate and then systematically train it out through the reward structure.

This is not merely a cultural problem. It is a structural vulnerability that AI exploits. The Eloquence Trap is purpose-built for the Stage 3 professional: accept the confident, well-structured output from the authoritative-sounding source, do not question it, move on. An organisation whose management culture rewards deference has pre-loaded the exact failure mode that generative AI triggers. The individual who pushes back on AI output is not just challenging the machine - they are challenging the person who approved the workflow, the team that relies on the speed, and the performance system that measures volume over rigour.

Agency, therefore, is not a trait to be hired for and hoped to survive. It is an environment to be built. Five structural moves operationalise this:

- Gate before action - no AI output reaches production without a human verification step, built into the workflow, not left to individual discipline.

- Constrain before generation - institutional, contextual, and domain knowledge is encoded into the AI workflow (via Logic Pipes) before output is generated, not checked after.

- Encode after correction - every human correction of AI output is captured in the system so the same error is caught or prevented next time. Corrections compound.

- Retain accountability - AI handles the Intellectual Labour; the human retains the Accountability Labour. Decision Survivability (Part VII) is the governance test.

- Keep the trail - every AI-assisted decision leaves a record of what was generated, verified, changed, and approved. The trail makes invisible degradation visible.

These moves must be embedded in the workflow itself, precisely because the organisational culture will otherwise erode whatever developmental capacity the individual brings. The gate works for the Stage 3 professional who cannot yet independently challenge authoritative output. The iteration cycle (fail → catch → fix → encode → compound) is the mechanism through which Stage 3→4 transitions occur over time. The trail creates the accountability that accelerates that development.
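Three of the five moves (gate before action, encode after correction, keep the trail) and the fail → catch → fix → encode → compound cycle can be sketched as a small workflow object. This is a toy under stated assumptions: corrections are modelled as plain text substitutions, approval is a boolean supplied by a human reviewer, and `GatedWorkflow` is a hypothetical name rather than an existing library. Constraining before generation and retaining accountability are not modelled here.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class GatedWorkflow:
    """Toy workflow: gate before action, encode after correction, keep the trail."""
    # Encode after correction: every fix a reviewer has made (wrong text -> right text).
    encoded_fixes: dict[str, str] = field(default_factory=dict)
    # Keep the trail: an ordered record of (event, detail) for every step.
    trail: list[tuple[str, str]] = field(default_factory=list)

    def process(self, draft: str, corrections: dict[str, str], approved: bool) -> Optional[str]:
        self.trail.append(("generated", draft))
        # Compound: apply every previously encoded fix before the human sees the draft.
        for wrong, right in self.encoded_fixes.items():
            draft = draft.replace(wrong, right)
        # Catch and fix: apply this reviewer's corrections, then encode them for next time.
        for wrong, right in corrections.items():
            draft = draft.replace(wrong, right)
            self.encoded_fixes[wrong] = right
            self.trail.append(("corrected", f"{wrong} -> {right}"))
        # Gate before action: nothing is released without explicit human approval.
        if not approved:
            self.trail.append(("rejected", draft))
            return None
        self.trail.append(("approved", draft))
        return draft


wf = GatedWorkflow()
# A reviewer catches a wrong figure once...
print(wf.process("Revenue grew 50% in Q3.", {"50%": "15%"}, approved=True))  # Revenue grew 15% in Q3.
# ...and the encoded fix is applied automatically the next time the same error appears.
print(wf.process("Q3 revenue grew 50%.", {}, approved=True))  # Q3 revenue grew 15%.
```

The design choice worth noting is that the gate and the encoding live in the workflow object, not in the reviewer's memory, which is exactly why they keep working for a professional who is not yet inclined to challenge the output.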

The Sovereign organisation is not one that hires Agency. It is one that makes Agency the path of least resistance.

6.3 - Architecture: Logic Pipes, Not Chat Windows

If Agency is the “will” to command, Architecture is the “engine” through which that command is executed. We must move away from the “chat window” as the primary interface for work. The chat window is artisanal; it is ephemeral and unrepeatable.

Individual Dimension: Designing Logic Pipes

For the individual, Architecture means moving from “prompting” to the design of Logic Pipes. A Logic Pipe is a documented, repeatable, and traceable chain of reasoning that can be reused and refined.

Instead of asking an AI to “write a marketing plan,” the L3 Competent Integrator designs a multi-stage architecture:

- A pipe for Contextual Retrieval (CAGE framework).

- A pipe for Structural Decomposition (breaking the plan into components).

- A pipe for Adversarial Review (asking the AI to find flaws in its own logic).

This is the shift from being a “writer” to being an Architectural Designer. You are no longer just producing content; you are building the machinery that produces content. This reduces Comprehension Debt - the risk of relying on functional systems that nobody in the organisation actually understands.
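A Logic Pipe can be sketched as a fixed sequence of prompts in which each stage consumes the previous stage's output and every intermediate result is kept, so the chain is repeatable and traceable. In this Python sketch the `model` argument is any function from prompt to text (a placeholder for whichever LLM client you use), the prompt wording is illustrative, and the CAGE framework itself is not modelled.

```python
from typing import Callable

# Any function that maps a prompt to a response; plug in a real LLM client here.
Model = Callable[[str], str]


def logic_pipe(brief: str, context: list[str], model: Model) -> dict[str, str]:
    """Run a three-stage Logic Pipe and keep every intermediate output for traceability."""
    trace: dict[str, str] = {}
    # Stage 1 - Contextual Retrieval: ground the brief in the supplied context.
    trace["context"] = model(f"Summarise the facts relevant to: {brief}\n" + "\n".join(context))
    # Stage 2 - Structural Decomposition: break the task into components using only those facts.
    trace["structure"] = model(f"Break this task into components, using only these facts:\n{trace['context']}")
    # Stage 3 - Adversarial Review: ask for the flaws in the previous stage's logic.
    trace["review"] = model(f"List the weakest assumptions and likely errors in:\n{trace['structure']}")
    return trace


# A stub model for testing the plumbing: it simply returns the first line of each prompt.
def stub_model(prompt: str) -> str:
    return prompt.splitlines()[0]


result = logic_pipe("a marketing plan for product X", ["Budget: 50k", "Launch: Q2"], stub_model)
for stage, output in result.items():
    print(f"{stage}: {output}")
```

Because the stages are fixed and each output is recorded, the same pipe can be rerun, compared across runs, and refined one stage at a time, which a one-off chat exchange does not allow.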

Organisational Dimension: Knowledge Retrieval Systems

At the organisational level, Architecture refers to the data infrastructure and Template Libraries that provide the “ground truth” for AI models. Without a robust Knowledge Retrieval System (such as Retrieval-Augmented Generation, or RAG), the AI can draw only on its general training data, so it fills gaps about your business with plausible guesses rather than operating on your proprietary expertise.
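The retrieval step behind RAG can be illustrated without any external services. In this minimal Python sketch, simple word overlap stands in for the embedding similarity search a production system would typically use, and the document store is a plain dictionary of hypothetical internal notes. The point is the shape of the pattern: retrieve relevant internal sources first, then instruct the model to answer only from them.

```python
import re
from collections import Counter


def tokenise(text: str) -> Counter:
    # Lower-cased word counts; a crude stand-in for an embedding model.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: dict[str, str], k: int = 2) -> list[str]:
    """Return up to k document ids ranked by word overlap with the query."""
    q = tokenise(query)
    scores = {doc_id: sum((q & tokenise(text)).values()) for doc_id, text in documents.items()}
    ranked = sorted(scores, key=scores.get, reverse=True)
    return [doc_id for doc_id in ranked[:k] if scores[doc_id] > 0]


def grounded_prompt(query: str, documents: dict[str, str], k: int = 2) -> str:
    """Build a prompt that restricts the model to retrieved internal sources."""
    sources = "\n".join(f"[{d}] {documents[d]}" for d in retrieve(query, documents, k))
    return ("Answer using only the sources below and cite them by id. "
            "If they do not contain the answer, say so.\n"
            f"{sources}\n\nQuestion: {query}")


notes = {  # hypothetical internal knowledge base
    "pricing": "Enterprise pricing starts at the annual tier.",
    "leave": "Leave policy allows 25 days per year.",
    "sla": "Enterprise support SLA is four hours.",
}
print(retrieve("What is the enterprise support SLA?", notes))  # ['sla', 'pricing']
print(grounded_prompt("What is the enterprise support SLA?", notes))
```

The instruction to say so when the sources are silent matters as much as the retrieval: it gives the model a sanctioned alternative to guessing.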

Mature AI organisations are 2.5x more likely to see revenue growth because they have invested in this architectural layer (NTT DATA, 2025). They have moved beyond “isolated AI use” to “integrated AI systems.” This involves creating a shared repository of validated Logic Pipes - a Template Library - where the best reasoning patterns of your top performers (L5-L6) are codified for the rest of the workforce.

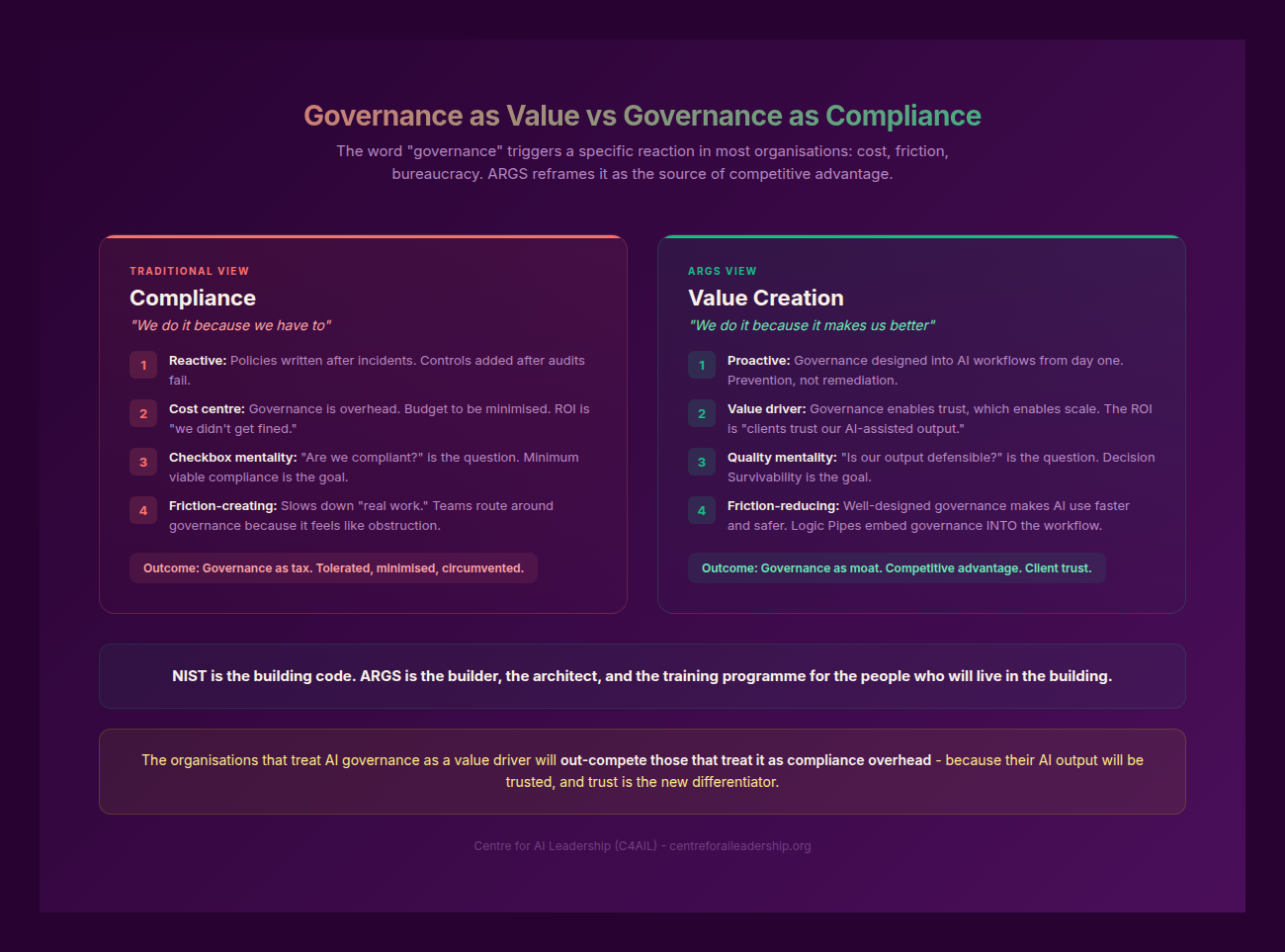

6.4 - Governance: The Value Layer

Governance is frequently misunderstood as a restrictive “compliance burden” or a set of “no” commands from the IT department. Governance is not compliance. It is not restriction. It is not a committee that meets quarterly to review AI policy. And it is certainly not a document you file and forget. We must reframe this: Governance is the Value Layer. Consider the airline pilot. Pilots do not experience safety regulations as restrictions on their freedom; they experience them as the infrastructure that allows them to fly faster, higher, and more confidently than they otherwise could, because they are not constantly worrying about the unknown. The same principle applies to AI governance: it is the set of protocols that ensures AI output is safe, ethical, and, above all, useful.

The mathematics makes the case: the Reliability Trap from Part II applies directly. If each step in a five-step AI workflow achieves 95% accuracy, and errors at each step are independent, the cumulative accuracy is 0.95^5 ≈ 0.77, or 77%. Roughly one output in four (about 23%) contains at least one error. Governance is what catches those errors before they reach the client. Without it, you are relying on luck, not rigour.
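The compounding arithmetic is easy to check. A minimal Python sketch, assuming each step succeeds independently with the same probability:

```python
import math


def cumulative_accuracy(step_accuracy: float, steps: int) -> float:
    """Probability that every step in a chain is correct, assuming independent errors."""
    return step_accuracy ** steps


def max_steps(step_accuracy: float, floor: float) -> int:
    """Largest chain length whose cumulative accuracy stays at or above `floor`."""
    return math.floor(math.log(floor) / math.log(step_accuracy))


print(round(cumulative_accuracy(0.95, 5), 3))      # 0.774 -> the 77% figure
print(round(1 - cumulative_accuracy(0.95, 5), 3))  # 0.226 -> roughly one output in four has an error
print(max_steps(0.95, 0.5))                        # 13
```

At 95% per step, a chain of more than 13 steps (0.95^14 ≈ 0.49) is more likely than not to contain an error somewhere, so longer workflows need verification inside the chain, not only at the end.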

Let me be honest about governance: without it, you are not building a business; you are building a liability. The risk that concerns me most here is supply chain risk. We have already seen AI recommend software packages that do not exist (package hallucination); once someone publishes a package under that invented name, developers can unknowingly integrate it into corporate systems, propagating vulnerabilities (SlashData, 2024).
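A simple governance control for this risk is to refuse any AI-suggested dependency that is not on a human-maintained allowlist. Checking only that a package exists is not enough, because an attacker may already have registered a commonly hallucinated name. The Python sketch below assumes a hypothetical allowlist; the name normalisation follows the rule in PEP 503 for Python package names.

```python
import re

# Hypothetical allowlist; in practice this would come from your organisation's
# approved-dependency registry, maintained by humans.
APPROVED_PACKAGES = frozenset({"requests", "numpy", "pandas"})


def normalise(name: str) -> str:
    """Normalise a Python package name as PEP 503 does (case and runs of -, _, . are equivalent)."""
    return re.sub(r"[-_.]+", "-", name).lower()


def unvetted(suggested: list[str], approved: frozenset[str] = APPROVED_PACKAGES) -> list[str]:
    """Return AI-suggested packages that are NOT on the allowlist and need human review."""
    approved_normalised = {normalise(p) for p in approved}
    return [p for p in suggested if normalise(p) not in approved_normalised]


# "fastjson-utils-pro" is a made-up name standing in for a hallucinated package.
print(unvetted(["Requests", "numpy", "fastjson-utils-pro"]))  # ['fastjson-utils-pro']
```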

Individual Dimension: Living Material and Audit Trails

For the individual, Governance means treating AI templates and outputs as Living Material. It is the commitment to documenting failures and maintaining Audit trails. When an L4 Strategic Modifier uses an AI to assist in a high-stakes decision, they don’t just save the final PDF. They save the ARCH log - the record of the reasoning, the contextual checks performed, and the verification steps taken. This ensures Decision Survivability - the ability to defend the decision six months later when the AI’s “eloquence” has faded and only the logic remains.
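An ARCH log can be as simple as a structured record saved alongside the final document. The sketch below is a hypothetical format: the field names follow the four ARCH elements plus the verification steps described above, but how each element is interpreted (for example, treating Horizon as the point at which the decision should be revisited) is an assumption, not a definition taken from the framework.

```python
import json
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone


@dataclass
class ArchLog:
    """Hypothetical ARCH log entry saved next to an AI-assisted decision."""
    action: str                    # Action: what the AI was asked to do
    reasoning: str                 # Reasoning: the logic the human accepted, in their own words
    contextual_checks: list[str]   # Contextual Check: checks against institutional/domain knowledge
    horizon: str                   # Horizon (assumed meaning): when or why to revisit the decision
    verification_steps: list[str]  # how the output was verified before use
    approved_by: str               # the person who owns the Accountability Labour
    recorded_at: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())

    def to_json(self) -> str:
        return json.dumps(asdict(self), indent=2)


entry = ArchLog(
    action="Draft a supplier risk summary from the Q3 audit notes",
    reasoning="Ranking follows contract value times incident count, which I checked by hand",
    contextual_checks=["Matched supplier names against the approved vendor list"],
    horizon="Revisit if any supplier contract is renegotiated",
    verification_steps=["Spot-checked 5 of 12 incident counts against source tickets"],
    approved_by="j.smith",  # hypothetical reviewer id
)
print(entry.to_json())
```

Storing the log as plain JSON keeps it readable by auditors and by whoever has to defend the decision months later, without depending on the AI tool that produced the original output.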

Organisational Dimension: Quality Metrics and Trust

For the organisation, Governance is about defining Quality metrics that go beyond “does it look right?” It involves setting the “Building Code” for AI use.

As we often say: “NIST is the building code. ARGS is the builder, the architect, and the training programme for the people who will live in the building.”

Organisational governance ensures that AI systems are transparent and that their “reasoning” can be interrogated by auditors. This creates a foundation of trust. For example, PwC’s $1B investment in AI saw a 95% voluntary engagement rate from employees because they trusted the systems were governed by clear ethical and professional standards (PwC, 2024). Governance provides the safety net that allows people to experiment without fear of catastrophic failure.

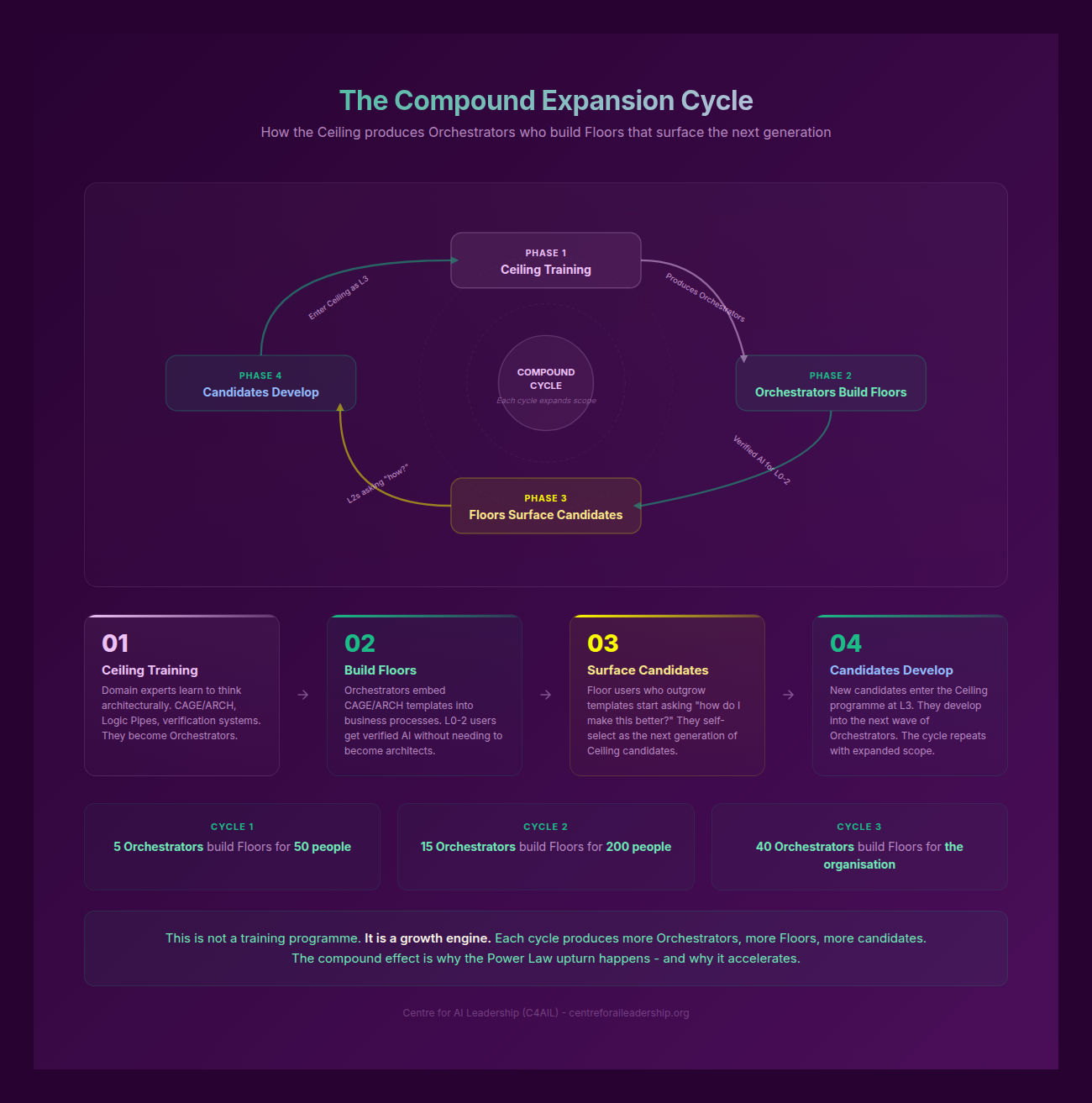

6.5 - Scaling: The Missing Middle and the Compound Effect

Scaling is the final pillar, and it is where the “Sovereign” organisation separates itself from the “Assisted” organisation. Scaling is not just about “more AI”; it is about Decoupled Scaling - the ability to increase output and value without a linear increase in headcount or human burnout.

Individual Dimension: Translating for the Field

For the individual, Scaling is about Translation capability. An L5 Expert Innovator or L6 Maestro does not just use AI for themselves; they build systems that others can use. They translate their deep domain expertise into Logic Pipes that “level up” their entire team. They become the “Orchestrators” who manage a fleet of AI-augmented workflows.

Organisational Dimension: The Compound Expansion Cycle

At the organisational level, Scaling creates a Compound Expansion Cycle. When you save time through AI (Agency + Architecture) and ensure that time is spent on high-value work (Governance), you create a surplus of cognitive capital.

Leading firms, such as EY, have demonstrated this by reinvesting 47% of their AI-driven efficiency gains back into new service lines and R&D (EY, 2025). This is the “Flywheel Effect” of Sovereign Command.

However, there is a significant danger here: the Missing Middle. Stanford research has noted a 13-16% decline in entry-level hiring in roles highly exposed to AI (Stanford HAI, 2025). If organisations use AI only to replace junior staff, they are destroying their future leadership pipeline. The traditional organisational “Pyramid” is becoming an “Obelisk” (HBR, 2025): a tall, narrow column of senior staff with no broad base of juniors learning the craft.

The Sovereign strategy is to use AI to ACCELERATE junior development, not replace it. We use AI to move a junior from L1 to L3 in eighteen months rather than three years, by providing them with the “Architectural” scaffolds and “Agency” training they need to perform at a higher level.

6.6 - ARGS as a Teaching and Diagnostic Framework

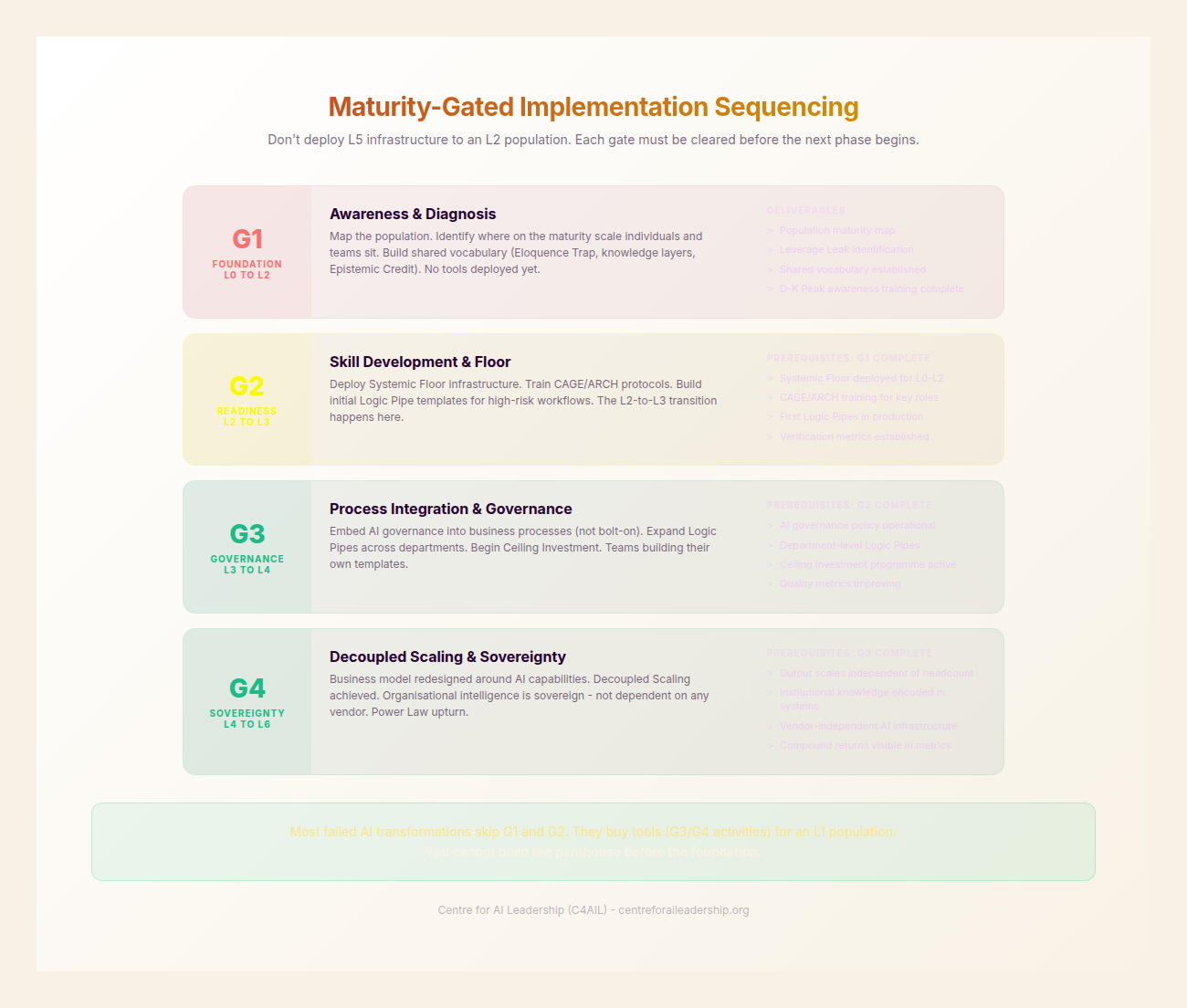

The ARGS framework is not just a theoretical model; it is a diagnostic tool for identifying why AI initiatives fail. It also provides a Maturity-gated sequencing for training.

Maturity-Gated Sequencing

We do not teach all four pillars at once.

- L0-L2 (Observer to Power User): Focus primarily on Agency. Before they learn to build complex architectures, they must learn to distrust the model and verify its output.

- L3-L4 (Integrator to Modifier): Introduce Architecture. Once they have the “will” to verify, they need the “pipes” to make that verification systematic and repeatable.

- L5-L6 (Innovator to Maestro): Focus on Governance and Scaling. These individuals are now responsible for the integrity of the organisation’s systems and the development of the next generation of talent.
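The gating rule can be sketched as a lookup from maturity level to training focus. In this Python sketch the gate levels come from the list above, while the assumption that earlier pillars stay in practice once a later one is introduced (the `unlocked_pillars` view) is an interpretation rather than something the text states.

```python
# Minimum maturity level at which each ARGS pillar becomes a training focus.
PILLAR_GATES = {"Agency": 0, "Architecture": 3, "Governance": 5, "Scaling": 5}


def unlocked_pillars(level: int) -> list[str]:
    """Pillars a learner at L<level> has reached (assumed to stay in practice afterwards)."""
    if not 0 <= level <= 6:
        raise ValueError(f"maturity levels run from L0 to L6, got L{level}")
    return [p for p, gate in PILLAR_GATES.items() if level >= gate]


def current_focus(level: int) -> list[str]:
    """The pillar(s) whose gate is the most recent one the learner has passed."""
    unlocked = unlocked_pillars(level)
    top_gate = max(PILLAR_GATES[p] for p in unlocked)
    return [p for p in unlocked if PILLAR_GATES[p] == top_gate]


for level in (1, 4, 6):
    print(f"L{level}: focus on {current_focus(level)}")
```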

The ARGS Failure Modes Table

When an organisation or individual struggles with AI integration, it is almost always because one of the four pillars is missing. Use this table to diagnose the “Crisis of Discernment” in your own context.

| Missing Pillar | What Happens | Diagnostic Symptom |
|---|---|---|
| No Agency | Hollow Infrastructure | Systems produce unverified output at scale; “Workslop” accumulates; errors are caught too late. |
| No Architecture | Individual Brilliance, Org Fragility | A few “AI wizards” do great work, but their methods are artisanal and die when they leave the firm. |
| No Governance | Scaling Without Guardrails | The organisation moves fast but is one hallucination or data leak away from a reputational crisis. |
| No Scaling | Verified Boutique | The work is excellent and safe, but the gains are small and localised; the organisation fails to capture market share. |
| All four but no Orchestrators | Strategy without a Strategist | The framework exists on paper, but there is no L5/L6 leadership to drive the Subject-Object shift. |
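The table can also be read as a lookup. A minimal Python sketch, assuming a team's state is summarised as the set of pillars it genuinely has in place plus a yes/no for L5/L6 Orchestrators. Unlike the table, it reports every missing pillar rather than assuming only one is absent.

```python
PILLARS = ("Agency", "Architecture", "Governance", "Scaling")

# Failure mode named in the table for each missing pillar.
FAILURE_MODES = {
    "Agency": "Hollow Infrastructure",
    "Architecture": "Individual Brilliance, Org Fragility",
    "Governance": "Scaling Without Guardrails",
    "Scaling": "Verified Boutique",
}


def diagnose(present: set[str], has_orchestrators: bool) -> list[str]:
    """Return the table's failure modes for the pillars that are absent."""
    unknown = present - set(PILLARS)
    if unknown:
        raise ValueError(f"not an ARGS pillar: {sorted(unknown)}")
    modes = [FAILURE_MODES[p] for p in PILLARS if p not in present]
    # The last row of the table only applies once all four pillars are in place.
    if not modes and not has_orchestrators:
        modes.append("Strategy without a Strategist")
    return modes


print(diagnose({"Agency", "Governance"}, has_orchestrators=False))
# ['Individual Brilliance, Org Fragility', 'Verified Boutique']
```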

Integration Logic: The Sovereign Loop

The pillars of ARGS do not exist in isolation; they form an integrated loop of continuous improvement.

- Agency enables the critical thinking required to design Architecture.

- Architecture provides the structure necessary for Governance to be applied.

- Governance ensures that Scaling is safe and sustainable.

- Scaling reveals the next set of Agency gaps as the organisation moves into more complex territories.

Ultimately, Sovereignty is built WITH people, not FOR people. You cannot “deploy” sovereignty from the IT department. It must be cultivated through the ARGS framework, ensuring that every individual in the organisation moves from being a passive observer of the generative era to a sovereign commander of it.

This is the shift from AI as a “tool” to AI as a “capability.” It is the move from the “Eloquence Trap” to “Epistemic Credit.” It is the path to Sovereign Command.