Part V: The Role — Intelligence Orchestrator

The Intelligence Orchestrator — the capability every organisation needs but most lack. Not a role you hire for; a capability you develop.

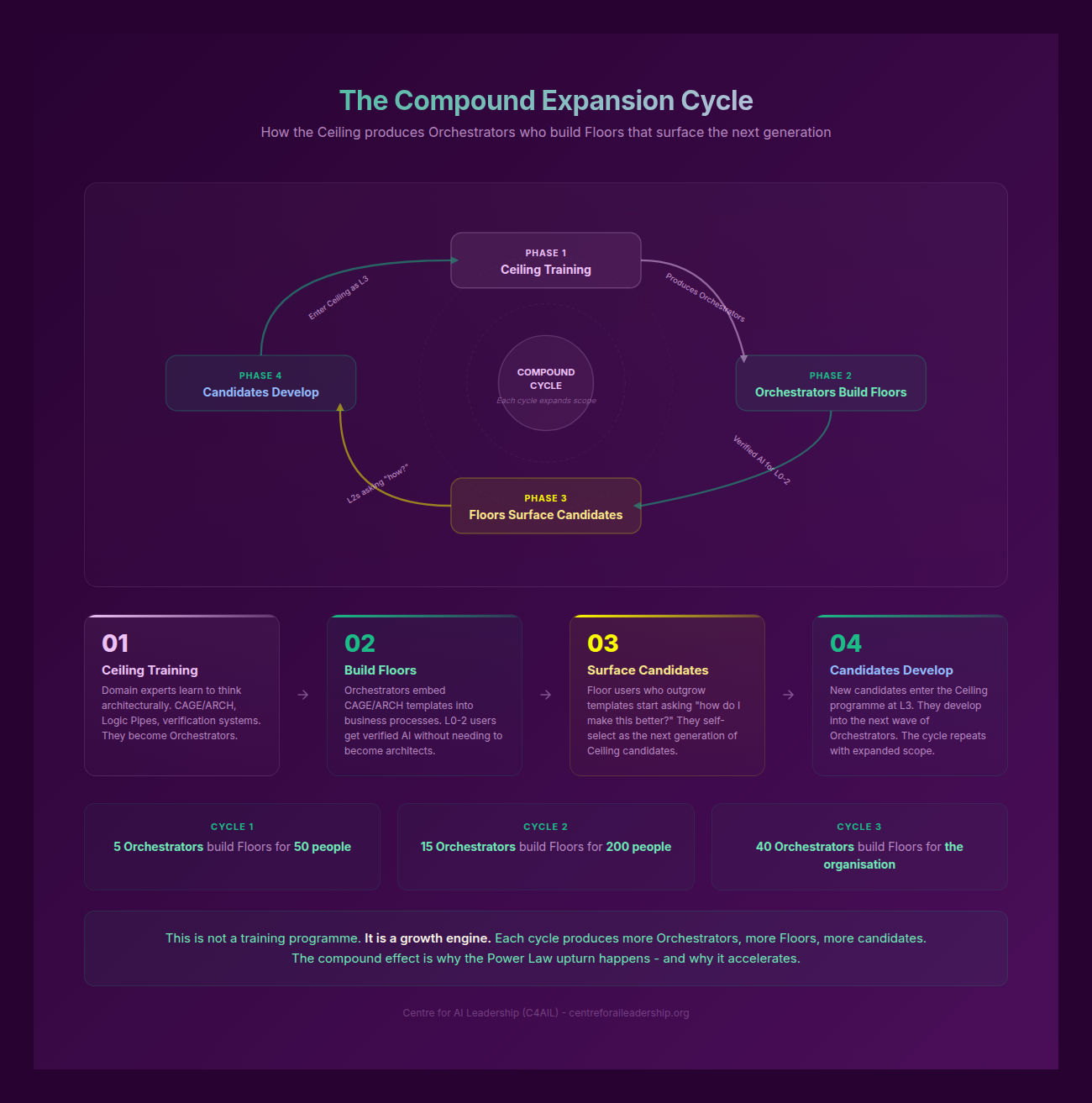

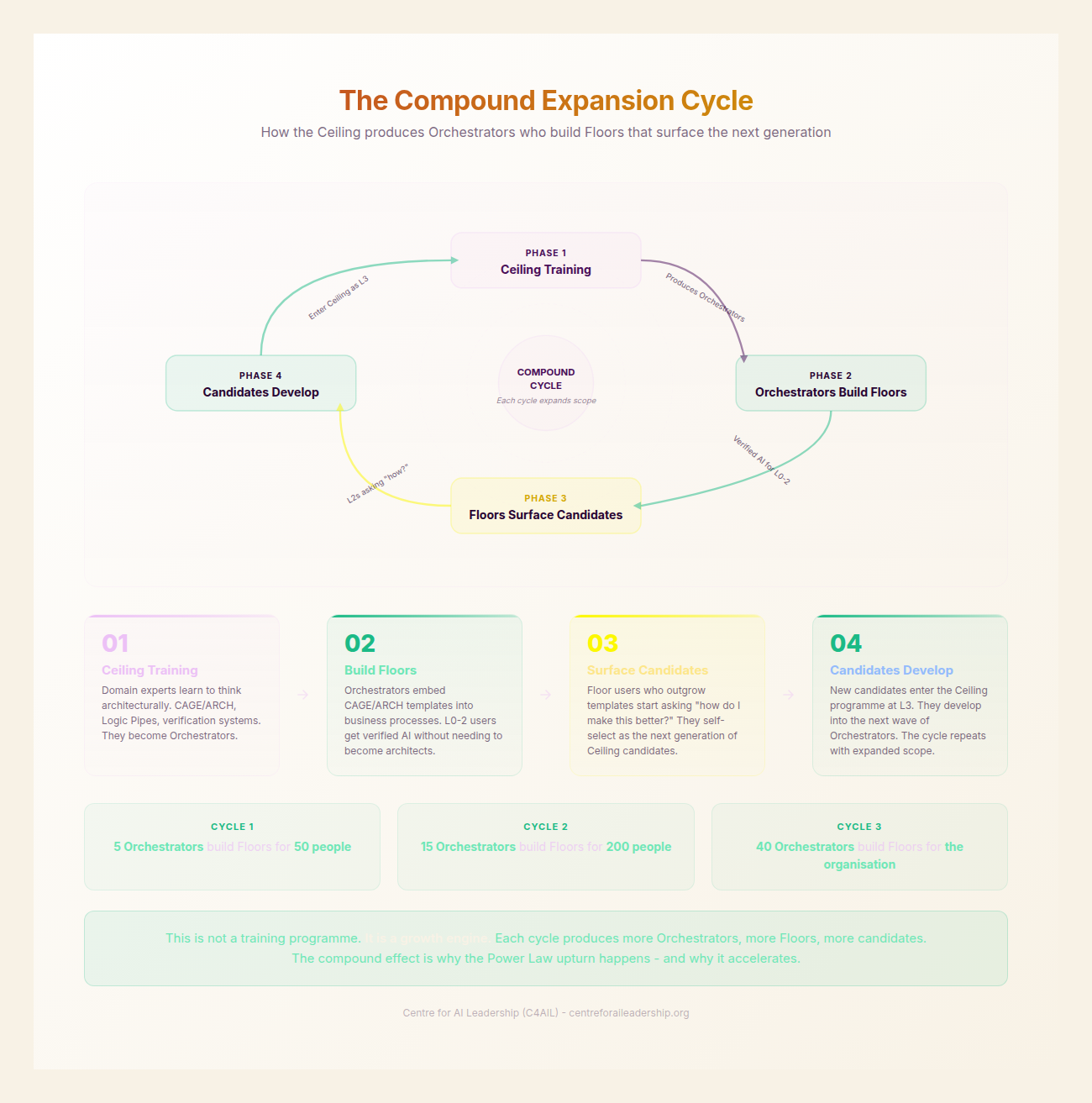

My key message is this: the Orchestrator is not a role you hire for. It is a capability you develop in the people who already know your domain. The generative era has reached a point of inflection where the primary constraint on value is no longer the availability of intelligence, but the capacity to direct it. As we have established in previous sections, the Eloquence Trap lures the unwary into a state of false security, where the fluent output of Large Language Models (LLMs) is mistaken for rigorous thought. To navigate this, organisations require more than just Passengers (L0-2) or even skilled Architects (L3-4). They require a new class of professional: the Orchestrator (L5-6).

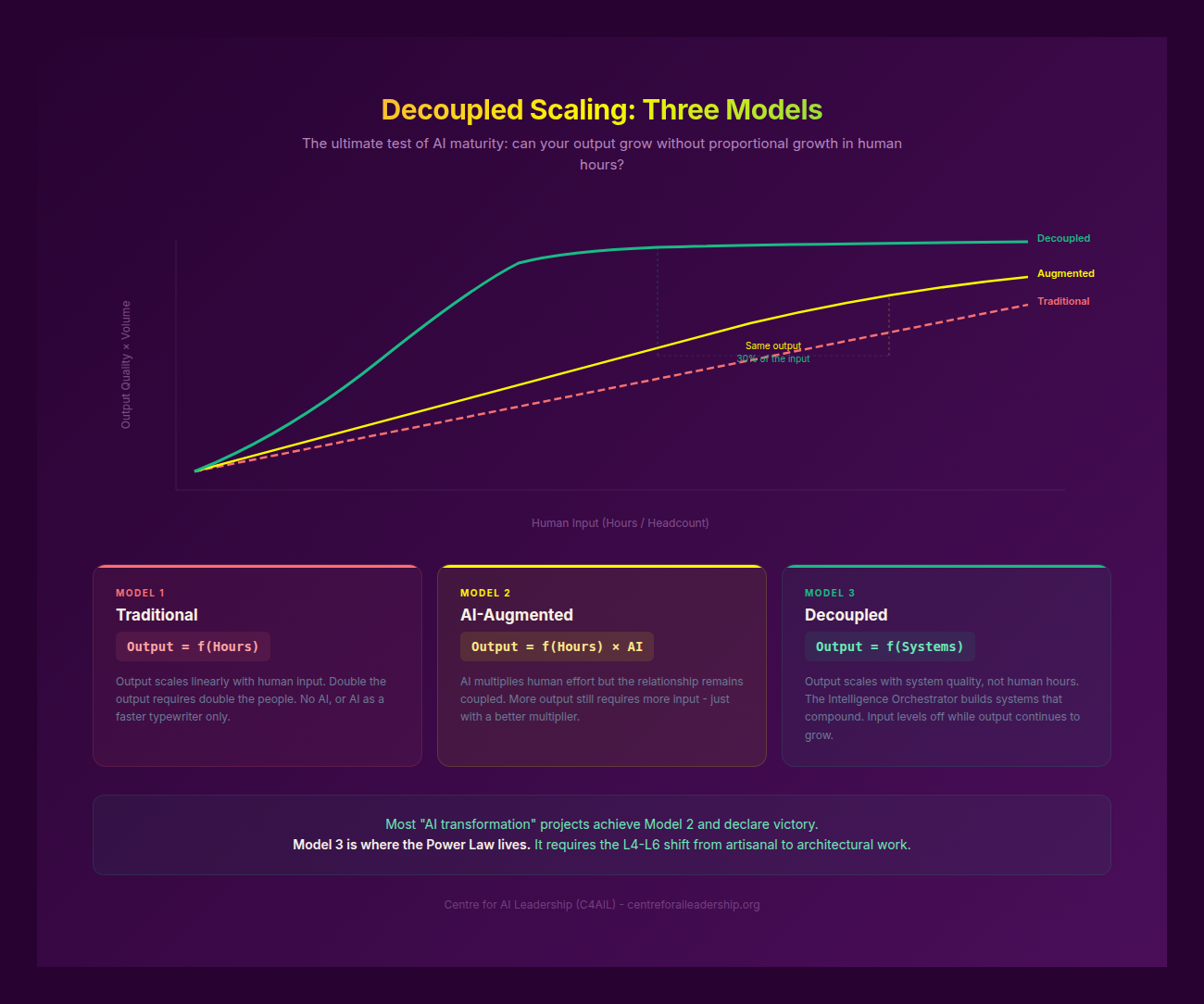

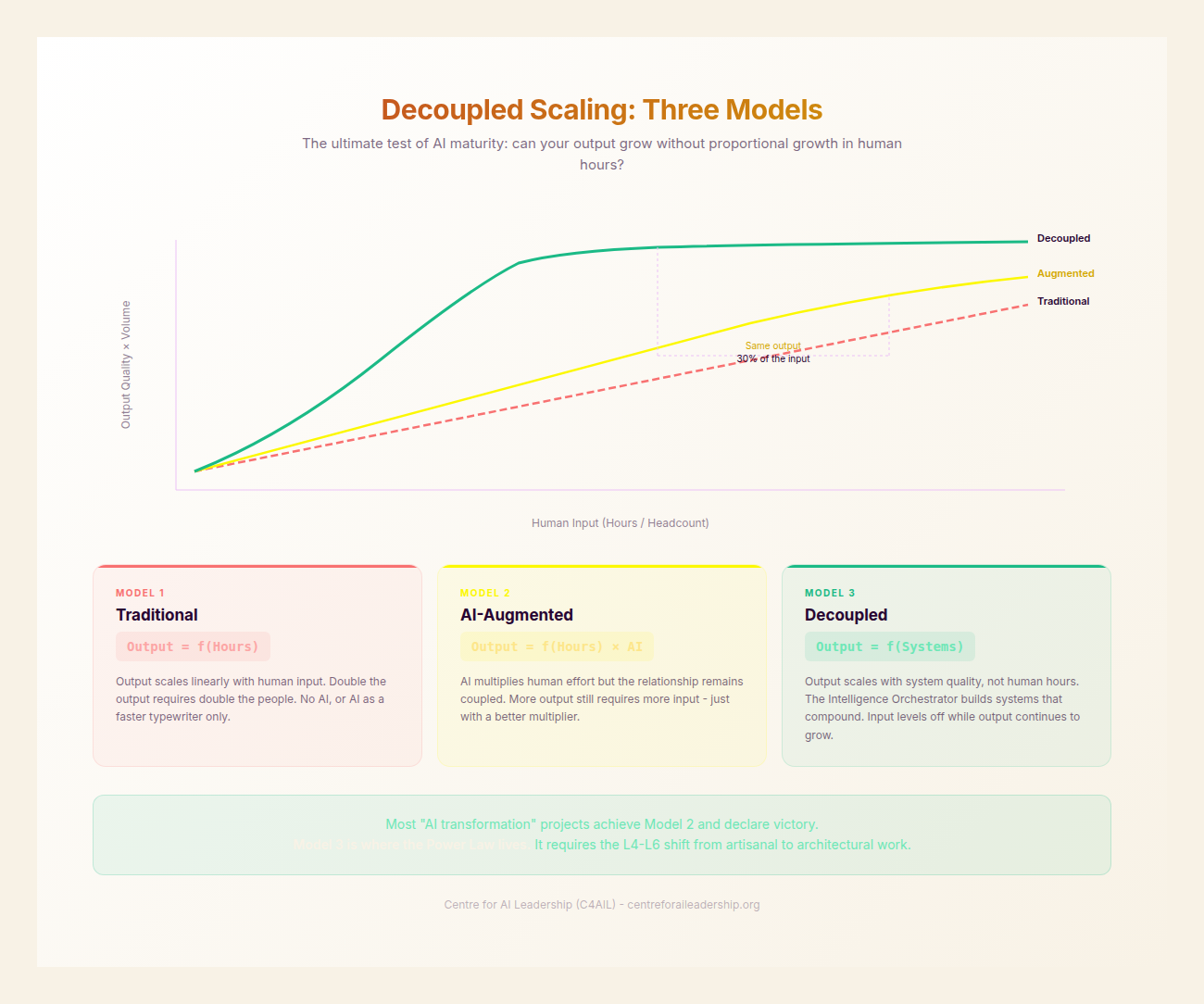

The Orchestrator is the terminal evolution of the knowledge worker in the age of Decoupled Scaling. They are not merely “good at AI”; they are the designers of the Logic Pipes through which organisational intelligence flows. While the Architect builds the tool, the Orchestrator builds the system that makes the tool redundant for routine tasks, allowing human expertise to be applied only where the Effort Gradient is steepest and the stakes are highest.

5.1 - The Double-Threat Profile

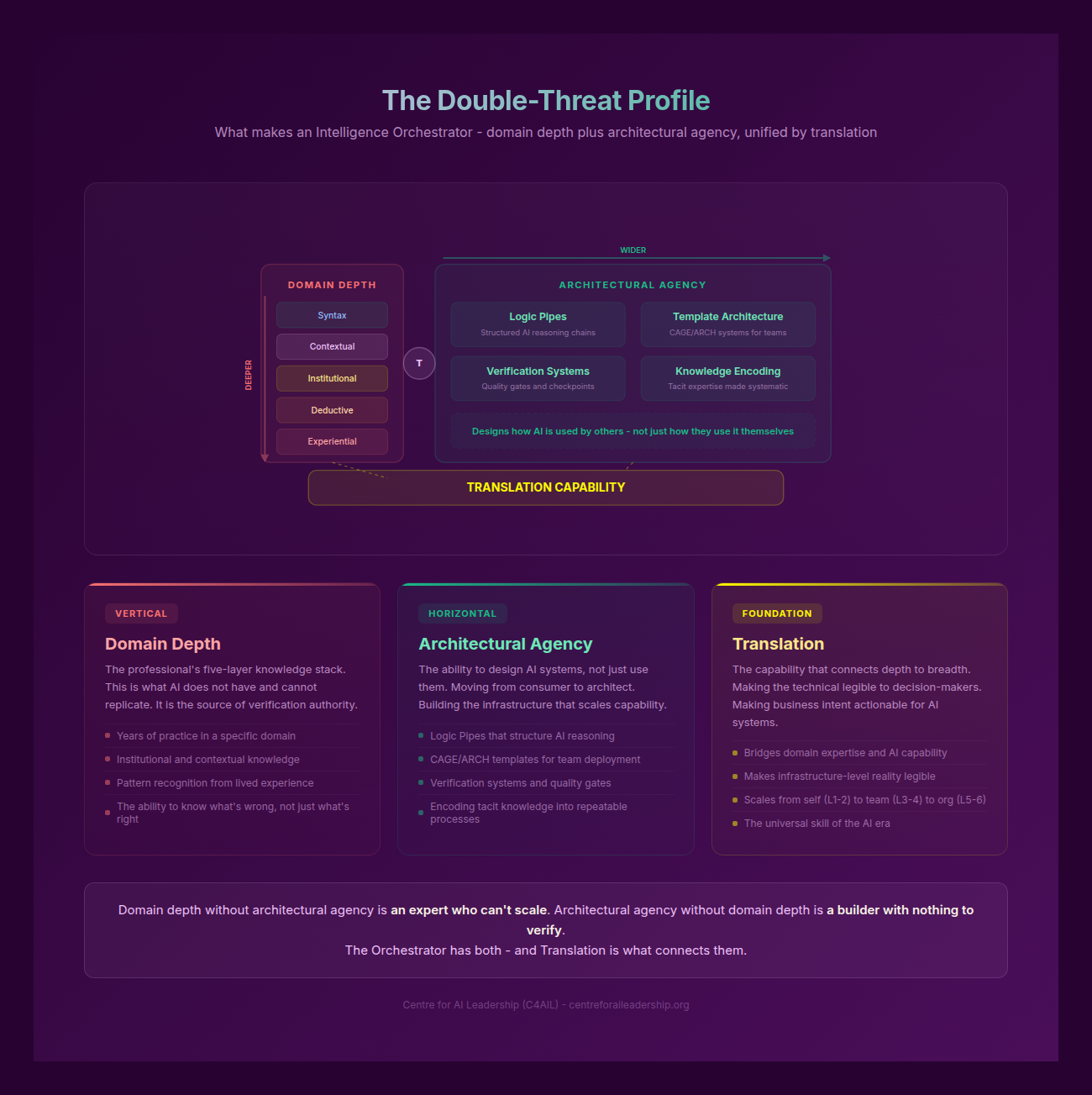

The Orchestrator is defined by a Double-Threat Profile. This is not a generalist role, nor is it a purely technical one. It is a fusion of deep, lived expertise and a high degree of architectural agency. This profile is best visualised as a T-shaped structure, but with a specific, generative-era composition.

The Vertical Bar: Deep Domain Expertise

The vertical bar of the Orchestrator is composed of the five knowledge layers we identified in Part II: Syntax, Contextual, Institutional, Deductive, and Experiential knowledge. This is the “what” and the “why” of the work.

An Orchestrator in a legal firm is not a prompt engineer; they are a senior litigator who understands the Institutional nuances of how a specific judge interprets a specific statute. They possess Experiential knowledge (the “scar tissue” of past failures) that allows them to spot a subtle hallucination in an AI-generated brief that a junior associate would miss. Without this vertical bar, the worker falls into the Verification Bottleneck. They can generate vast amounts of content, but they lack the Epistemic Credit to verify it. They are essentially producing Workslop: high-volume, low-utility output that creates more work for others to fix.

The Horizontal Bar: Architectural Agency

The horizontal bar represents the ability to design AI systems, create CAGE (Context, Align, Goals, Examples) templates, and implement ARCH (Action, Reasoning, Contextual Check, Horizon) verification workflows. This is the “how” of the generative era.

Architectural agency is the shift from being a user of a tool to being a designer of a process. It involves the ability to take a complex, multi-step human task and decompose it into a series of automated Logic Pipes. The Orchestrator does not just “ask” the AI to write a report; they design a multi-stage workflow where one agent gathers data, another synthesises it against a CAGE template, a third checks it for logical fallacies using ARCH, and a fourth formats it for the specific Institutional requirements of the board.
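The four-stage workflow described above can be sketched as a chain of small functions. This is a minimal illustration, not a reference implementation: the `Draft` class, the stage names, and the sample data are all hypothetical, and each function is a stand-in for what would in practice be a call to an AI agent.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Draft:
    """Working document handed from one stage of the pipe to the next."""
    data: List[str] = field(default_factory=list)    # facts gathered
    claims: List[str] = field(default_factory=list)  # statements in the draft
    issues: List[str] = field(default_factory=list)  # problems found by checks
    formatted: str = ""                              # final board-ready text


Stage = Callable[[Draft], Draft]


def gather(draft: Draft) -> Draft:
    # Stand-in for an agent that collects source data (sample values only).
    draft.data = ["Freight cost rose this quarter", "Two suppliers missed SLA"]
    return draft


def synthesise(draft: Draft) -> Draft:
    # Stand-in for an agent that drafts against a CAGE template.
    draft.claims = list(draft.data)
    return draft


def arch_check(draft: Draft) -> Draft:
    # Stand-in for an ARCH check: flag any claim not backed by gathered data.
    for claim in draft.claims:
        if claim not in draft.data:
            draft.issues.append(f"Unsupported claim: {claim}")
    return draft


def format_for_board(draft: Draft) -> Draft:
    # Only drafts that passed verification are formatted for release.
    if not draft.issues:
        draft.formatted = "BOARD PAPER\n" + "\n".join(draft.claims)
    return draft


def run_pipeline(stages: List[Stage], draft: Draft) -> Draft:
    """Run each stage in order, passing the draft along the pipe."""
    for stage in stages:
        draft = stage(draft)
    return draft


result = run_pipeline([gather, synthesise, arch_check, format_for_board], Draft())
```

The design choice worth noticing is that verification sits inside the pipe as its own stage, so a draft that fails the check never reaches formatting.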

The Foundation: Translation Capability

At the intersection of these two bars lies the Translation capability. This was discussed in Part III as the ability to bridge the gap between human intent and machine execution. The Orchestrator is essentially the “McKinsey Analytics Translator” grown up. While the 2010s-era translator acted as a middleman between data scientists and business units, that specific, siloed role is being rapidly commoditised (McKinsey 2025).

In its place, the domain-expert-with-agency model is ascending. The Orchestrator does not need a middleman because they are “Bilingual” (Tambe 2025). They speak the language of the business and the language of the latent space. Recent labour data from Lightcast and Revelio suggests that the highest wage premiums are no longer going to pure technical AI roles, but to “Bilingual” workers who can apply AI within a specific domain (Tambe 2025).

Neither bar works in isolation. Domain expertise without architecture leads to the Verification Bottleneck, where the expert is overwhelmed by the sheer volume of AI output they must manually check. Conversely, architectural agency without domain expertise leads to the scaling of Workslop, where efficient systems are built to produce irrelevant or dangerous content. The Orchestrator is the only role that resolves this tension.

5.2 - What the Orchestrator Does Daily (A Character Study)

To understand the Orchestrator, we must look beyond the job description and into the daily rhythm of their work. Consider Sarah, an L5 Orchestrator at a global manufacturing firm. Sarah is not a software engineer; she is a Supply Chain Director with twenty years of experience.

Tuesday Morning: The Shift to Architecture

Sarah’s Tuesday morning does not begin with her writing emails or drafting reports. It begins with Template Governance. She opens her dashboard to review the performance of the custom GPTs and agentic workflows she has designed for her department.

She is looking for drift. She checks the logs of the “Supplier Risk Evaluator” (a system she built using a complex CAGE framework) to see how it handled a recent spike in logistics disruptions in the Red Sea. She notices that the AI is becoming too conservative, flagging routine delays as “Critical”.

Sarah does not “fix” the output. She updates the CAGE template. She encodes new Institutional knowledge (a specific set of “red lines” regarding shipping insurance) into the Examples section of the template. Her primary output is architecture, not content. She is pruning the Logic Pipes to ensure the water stays clear.
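One simple way to make this kind of drift visible is to compare how often the system flags items as “Critical” in recent logs against a baseline period. The sketch below assumes the logs can be reduced to a list of flag labels; the function names, the sample counts, and the 10-point tolerance are illustrative assumptions, not part of any particular tool.

```python
from typing import List


def critical_rate(flags: List[str]) -> float:
    """Share of log entries flagged as 'Critical' (0.0 for an empty log)."""
    if not flags:
        return 0.0
    return flags.count("Critical") / len(flags)


def has_drifted(baseline: List[str], recent: List[str],
                tolerance: float = 0.10) -> bool:
    """True when the Critical rate moved more than `tolerance` from baseline."""
    return abs(critical_rate(recent) - critical_rate(baseline)) > tolerance


# Hypothetical sample logs: 2 of 20 flagged before, 8 of 20 flagged recently.
baseline_flags = ["Routine"] * 18 + ["Critical"] * 2
recent_flags = ["Routine"] * 12 + ["Critical"] * 8

needs_template_review = has_drifted(baseline_flags, recent_flags)
```

A check like this only signals that something has shifted. Deciding whether the template or the world has changed still takes the domain expert.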

Content Tiering: The Queue System

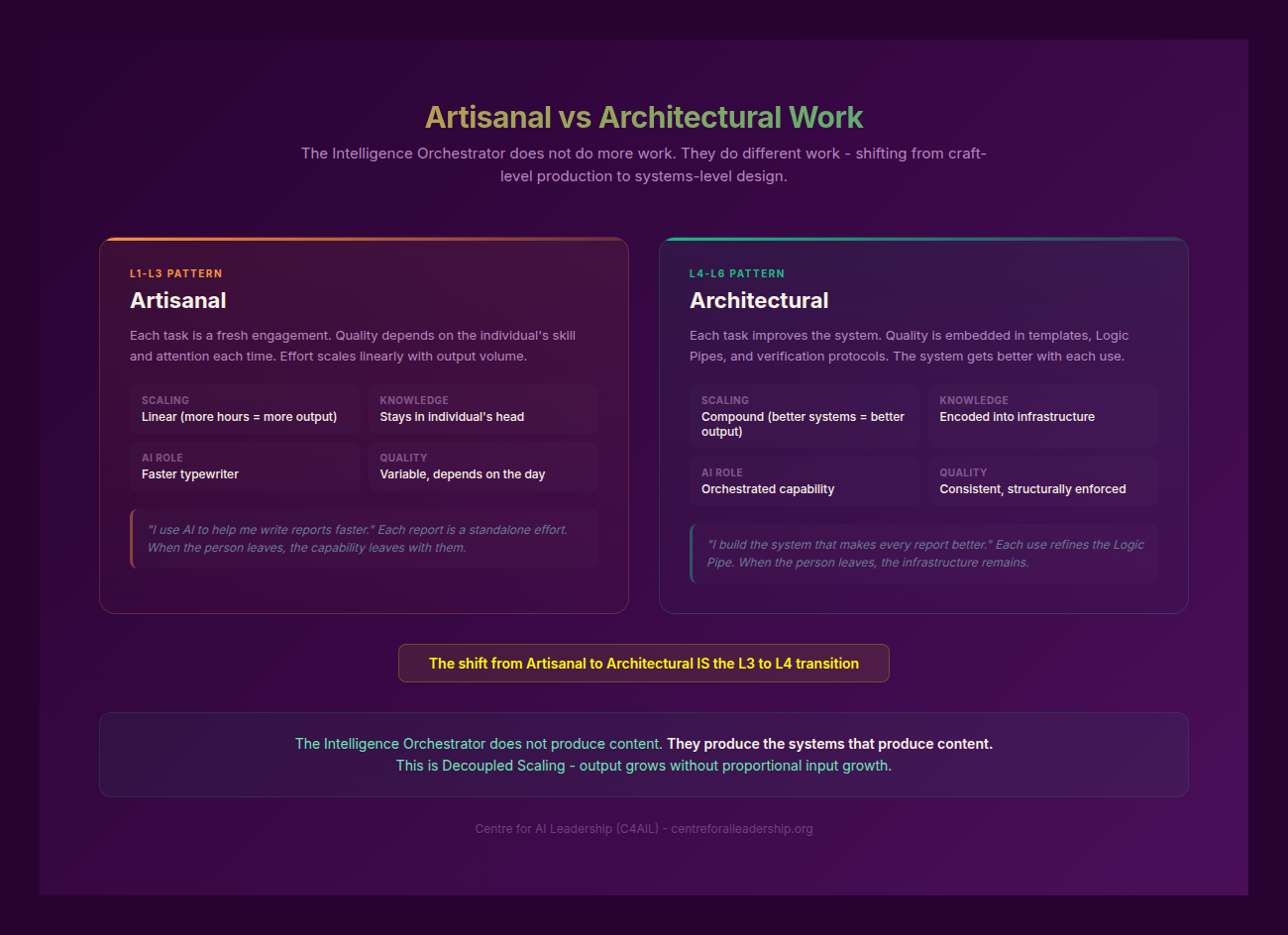

Sarah manages her department’s output through Content Tiering. She has moved away from the “artisanal” model where every document is hand-crafted, to an “architectural” model where content is routed through three queues:

- Queue A (Routine): These are standard logistics updates and internal memos. They are auto-verified by ARCH checks. If the AI’s Reasoning matches the Contextual Check, the document is sent without human review. This is Decoupled Scaling in action. Sarah has designed the system so that the volume of Queue A can double without requiring a single extra hour of her time.

- Queue B (Domain-sensitive): These are supplier negotiations and quarterly forecasts. The AI generates the first draft, but it is flagged for review by a domain expert (a junior manager). The manager uses the Human Mirror to check for nuances the AI might have missed.

- Queue C (High-stakes/Novel): These are strategic pivots or multi-billion dollar contract renewals. Here, Sarah operates in Cyborg mode. She is in a tight, iterative loop with the AI, using it to simulate adversarial viewpoints and stress-test her own Deductive reasoning.
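The three queues amount to a routing rule, which can be sketched in a few lines. The `Document` fields and the `route` function are hypothetical names chosen for illustration. One assumption goes beyond the text: a routine document that fails its ARCH check is escalated to Queue B rather than sent.

```python
from dataclasses import dataclass
from enum import Enum


class Queue(Enum):
    A = "routine: auto-verified by ARCH checks"
    B = "domain-sensitive: reviewed by a domain expert"
    C = "high-stakes or novel: worked in Cyborg mode"


@dataclass
class Document:
    title: str
    domain_sensitive: bool = False
    high_stakes: bool = False
    novel: bool = False
    arch_passed: bool = True  # result of the automated ARCH check


def route(doc: Document) -> Queue:
    """Assign a document to the queue that matches its risk profile."""
    if doc.high_stakes or doc.novel:
        return Queue.C
    if doc.domain_sensitive:
        return Queue.B
    # Assumption: routine content that fails ARCH is escalated for review.
    return Queue.A if doc.arch_passed else Queue.B


memo = Document("Weekly logistics update")
forecast = Document("Quarterly forecast", domain_sensitive=True)
renewal = Document("Contract renewal", high_stakes=True)
```

Checking the highest-risk conditions first means a document can only be routed down to Queue A after every reason for human attention has been ruled out.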

The Five Orchestrator Habits

Sarah’s effectiveness stems from five specific habits that distinguish her from a Power User (L2) or a Competent Integrator (L3).

1. Think in workflows, not interactions. Sarah never views a prompt as a one-off. Every time she interacts with an AI, she asks: “Is this a workflow?” If she finds herself doing something twice, she builds a template. She has moved from the artisanal “I will write this” to the architectural “I will build a system that writes this.”

2. Encode tacit knowledge into templates. Sarah understands Polanyi’s Paradox, the idea that “we know more than we can tell.” To overcome this, she spends time “downloading” her Experiential knowledge into her systems. When she builds a CAGE template for contract review, she doesn’t just give it the “Goals”. She gives it a “Failure Catalogue”: a list of every mistake she has seen a supplier make in the last twenty years. She encodes decision trees and “worked examples” that reflect the firm’s unique culture.

3. Design for failure modes they’ve experienced. Because Sarah has the vertical bar of domain expertise, she knows exactly where the AI is likely to trip. She doesn’t wait for the AI to hallucinate; she designs the ARCH check to specifically look for the “last time we did X, Y broke” scenarios. Her systems are resilient because they are built on the foundation of her past failures.

4. Hold strategic AND granular simultaneously. Sarah can translate between a boardroom-level decision (“we need to reduce logistics risk exposure by 30%”) and a ground-level implementation detail (“the CAGE template for supplier evaluation needs a field for ‘previous force majeure events in this corridor’”). This dual vision - the ability to zoom between the strategic and the operational without losing either - is what makes her a diplomat of intelligence between the executive suite and the operational floor.

5. Govern templates as living material. Sarah treats her prompts and templates like a garden, not a building. They are not “set and forget.” She is constantly weeding out outdated Context, pruning the Logic Pipes, and grafting on new Institutional knowledge as the market changes.
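Habits 2 and 5 can be made concrete by treating a CAGE template as a small, versioned data structure rather than a block of prose. The sketch below is one possible shape, not a standard: the `CageTemplate` class, its field names, the rendering format, and the sample content are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class CageTemplate:
    """A CAGE template (Context, Align, Goals, Examples) plus a failure catalogue."""
    context: str
    align: str
    goals: List[str]
    examples: List[str]
    failure_catalogue: List[str] = field(default_factory=list)
    version: int = 1

    def add_failure(self, lesson: str) -> None:
        # Each encoded lesson is a template change, so bump the version.
        self.failure_catalogue.append(lesson)
        self.version += 1

    def render(self) -> str:
        """Flatten the template into prompt text, one section per heading."""
        sections = [
            ("Context", [self.context]),
            ("Align", [self.align]),
            ("Goals", self.goals),
            ("Examples", self.examples),
            ("Failure catalogue", self.failure_catalogue),
        ]
        lines: List[str] = []
        for heading, items in sections:
            lines.append(f"## {heading}")
            lines.extend(f"- {item}" for item in items)
        return "\n".join(lines)


# Hypothetical contract-review template with one encoded lesson.
template = CageTemplate(
    context="Supplier contract review for the logistics department",
    align="Follow the firm's procurement policy",
    goals=["Identify clauses that increase risk exposure"],
    examples=["Worked example: renewal with a late-delivery penalty"],
)
template.add_failure("Supplier omitted force majeure history")
prompt_text = template.render()
```

Keeping a version number on the template gives the governance habit something to audit: every lesson added leaves a trace.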

What Sarah Does NOT Do

It is equally important to define the boundaries of the role. Sarah does not personally produce every output of her department. She does not act as a “help desk” for AI tools; she expects her team to have their own Translation capability. She does not write prompts for others. Most importantly, she does not replace domain experts; she empowers them to move from being Passengers to being Architects themselves.

5.3 - The Development Path: From Architect to Orchestrator

The journey to becoming an Orchestrator is not a linear progression of technical skill. It is a cognitive shift. It requires moving through the levels of the C4AIL framework, specifically clearing the transition from Architect (L3-4) to Orchestrator (L5-6).

The Baseline: L3 Competent Integrator

To even begin the path, a worker must be an L3 Competent Integrator. This is someone who has already cleared the “triple compound” of generative fluency: they can hold opposing views in their head, they apply the Human Mirror to every output, and they understand the Logic Pipes of the models they use. They are no longer Passengers; they are taking active control of the machine.

Trigger 1: Frustration with Repetition (L3 → L4)

The move from L3 to L4 Strategic Modifier is usually triggered by a profound frustration with repetition. The worker realises that even though they are “good at AI,” they are still doing too much manual work. They begin to shift their focus from “how do I do this task?” to “how do I build a system for this task?” This is where they start mastering the CAGE framework at scale.

Trigger 2: Team Responsibility (L3 → L4)

The second trigger is the responsibility for others. When an L3 worker is put in charge of a team, they quickly realise that they cannot be the Verification Bottleneck for everyone. They are forced to develop Architectural Agency so they can delegate tasks to AI-human hybrids rather than just humans.

Trigger 3: Scale Pressure (L4 → L5)

The transition to L5 Expert Innovator (the first stage of the Orchestrator) occurs when the volume of work exceeds even the most efficient human-AI partnership. This is the Scale Pressure. At this point, the worker must embrace Decoupled Scaling. They must trust their ARCH verification workflows enough to let Queue A tasks run without human intervention.

Trigger 4: Structured Training and Exposure (L4 → L5)

Unlike the lower levels, which can often be reached through self-study and “tinkering,” the Orchestrator level usually requires structured training and exposure to cross-functional systems. They need to understand how their Logic Pipes connect to the broader organisational infrastructure.

Evidence from EverWorker (2025) suggests that “business operators” who were given structured training in architectural design outperformed consultant-led AI projects by 3x. The reason is simple: the operators had the vertical bar of domain expertise. They just needed the horizontal bar of architectural agency. This suggests that the Orchestrator path is trainable; it is not an innate gift but a matter of deliberate professional development.

The Cognitive Leap

It is worth noting that while Robert Kegan’s Stage 5 (the “Self-Transforming Mind”) is a useful framing for the highest levels of leadership, the Orchestrator role does not require a full cognitive transformation of that magnitude, which is rare, occurring in only about 1% of adults. Instead, it requires “domain-specific systems thinking.” This is a lower, more attainable bar. It is the ability to see one’s own work not as a series of tasks, but as a system of information flows.

The Orchestrator does not need to understand transformer architectures or the mathematics of backpropagation. They need to understand the architecture of their own expertise.

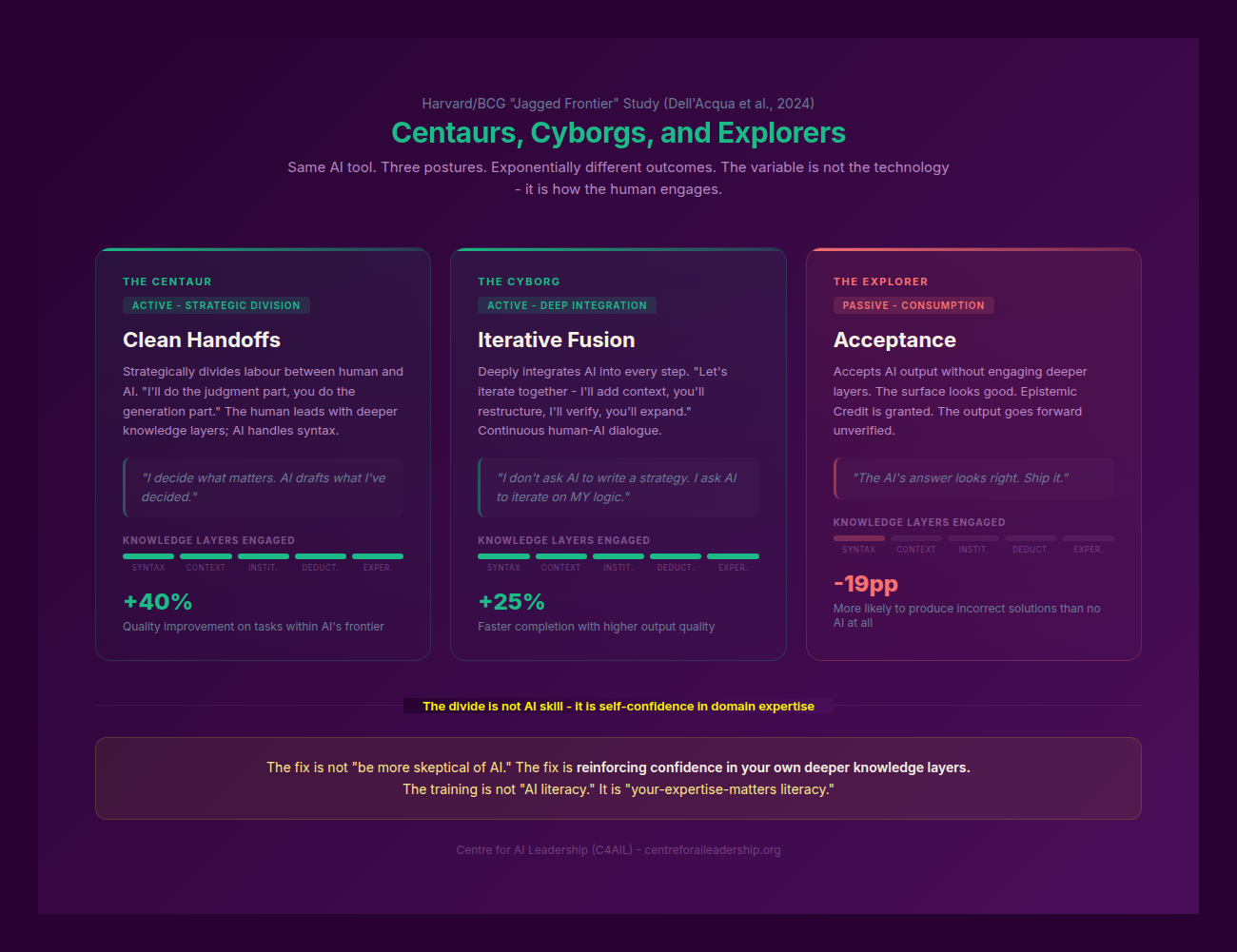

5.4 - The Centaur, the Cyborg, and the Explorer

An Orchestrator is a master of “cognitive posture.” They do not approach every task with the same relationship to the AI. Instead, they switch between three distinct modes of operation: the Centaur, the Cyborg, and the Explorer.

The Centaur: Human Strategy, AI Execution

The Centaur model, famously proposed by Garry Kasparov in the context of “Advanced Chess,” involves a clear division of labour. The human provides the strategy, the direction, and the final verification; the AI provides the raw processing power and execution.

In Sarah’s world, the Centaur mode is the primary posture for Queue B tasks. She (or her team) sets the parameters, defines the CAGE template, and then lets the AI “run” the draft. The human remains “on the loop,” checking the final output against the Human Mirror. The formula is: “Weak human + machine + good process > strong computer alone.” The Orchestrator’s role here is to design the “good process.”

The Cyborg: Human-AI Fusion

The Cyborg mode is a much tighter, iterative loop. There is no clear division of labour; the human and the AI are fused at the task level. The human starts a sentence, the AI finishes it; the AI suggests a logic, the human critiques it; the human provides a nuance, the AI integrates it.

This is the primary posture for Queue C tasks: those high-stakes, novel problems that require the highest level of Deductive and Experiential knowledge. Goldman Sachs has recently moved many of its 46,500 employees toward this fused state, particularly in legal and compliance functions, where the “fused” worker can navigate complex regulatory environments faster than a human or an AI alone (Goldman Sachs 2024). The Orchestrator knows that you cannot Cyborg a routine task (it’s a waste of cognitive energy), but you must Cyborg a crisis.

The Explorer: Creative Discovery

The Explorer mode is about using AI for browsing, learning, and experimenting. It is not about “producing” an output, but about finding unseen patterns or simulating adversarial viewpoints.

An Orchestrator uses Explorer mode to stress-test their own templates. They might ask an AI: “Find ten ways a malicious actor could exploit the logic in this contract review template.” Or: “Simulate a board meeting where the directors are highly sceptical of this logistics pivot.” The Explorer mode allows the Orchestrator to expand their own Contextual knowledge by using the AI as a sounding board for “what if” scenarios.

Choosing the Right Mode

The hallmark of the Orchestrator is the ability to choose the right mode for the right task. They do not Cyborg a Queue A task (which would be an inefficient use of their time), and they do not Centaur a Queue C task (which would be a dangerous abdication of their expertise). They match the cognitive posture to the Effort Gradient of the work.
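The matching of posture to task can be written down as a lookup. The sketch below follows the pairings given in this section (Queue B with Centaur, Queue C with Cyborg, Explorer for stress-testing). The `AUTOMATED` label for Queue A and the function and parameter names are assumptions added for illustration.

```python
from enum import Enum


class Mode(Enum):
    AUTOMATED = "runs without human review"  # assumed label for Queue A
    CENTAUR = "human strategy, AI execution"
    CYBORG = "tight human-AI iteration"
    EXPLORER = "discovery and stress-testing"


QUEUE_TO_MODE = {"A": Mode.AUTOMATED, "B": Mode.CENTAUR, "C": Mode.CYBORG}


def choose_mode(queue: str, exploring: bool = False) -> Mode:
    """Pick a cognitive posture for a task in the given queue."""
    if exploring:
        # Explorer is about learning and probing, not producing an output.
        return Mode.EXPLORER
    try:
        return QUEUE_TO_MODE[queue.upper()]
    except KeyError:
        raise ValueError(f"Unknown queue: {queue!r}") from None
```

For example, `choose_mode("C")` returns `Mode.CYBORG`, while `choose_mode("B", exploring=True)` returns `Mode.EXPLORER`.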

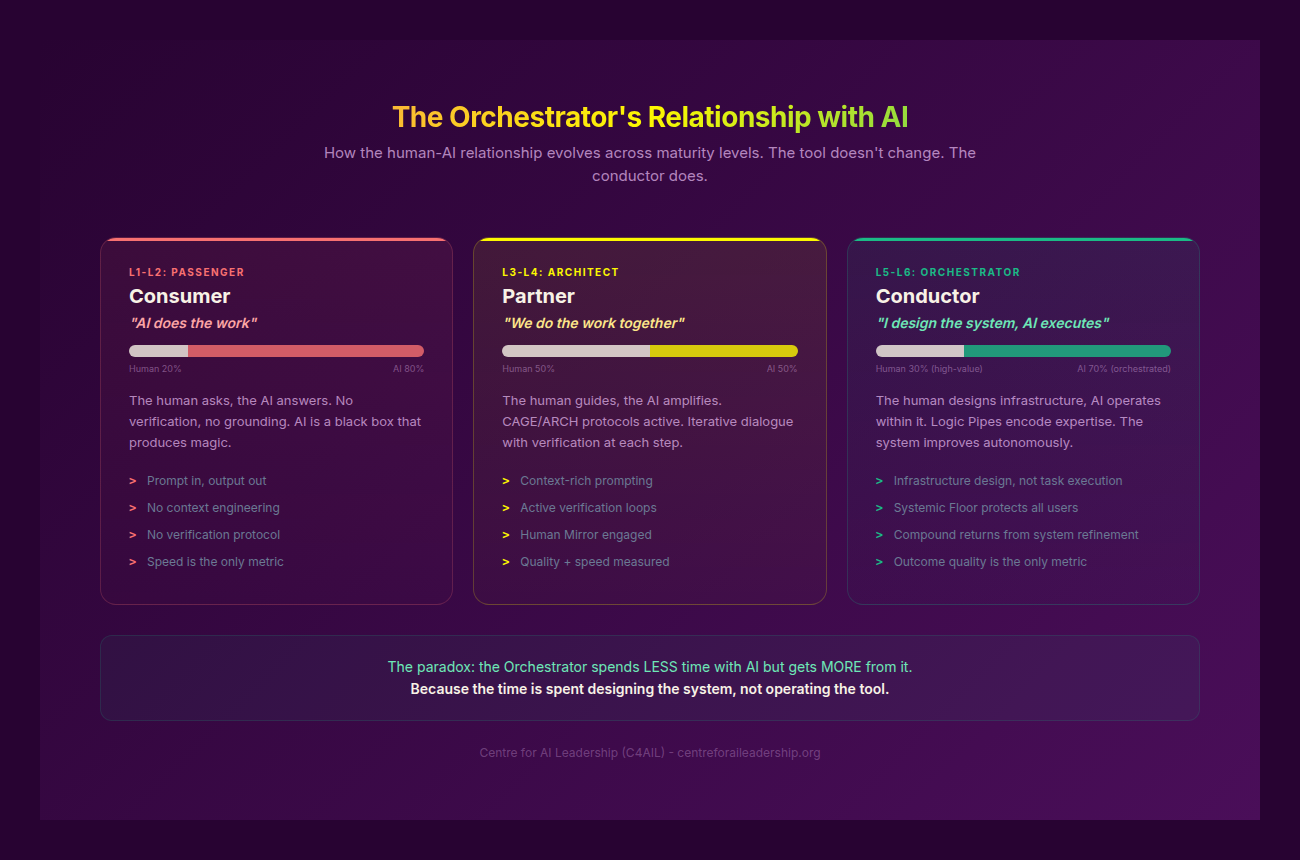

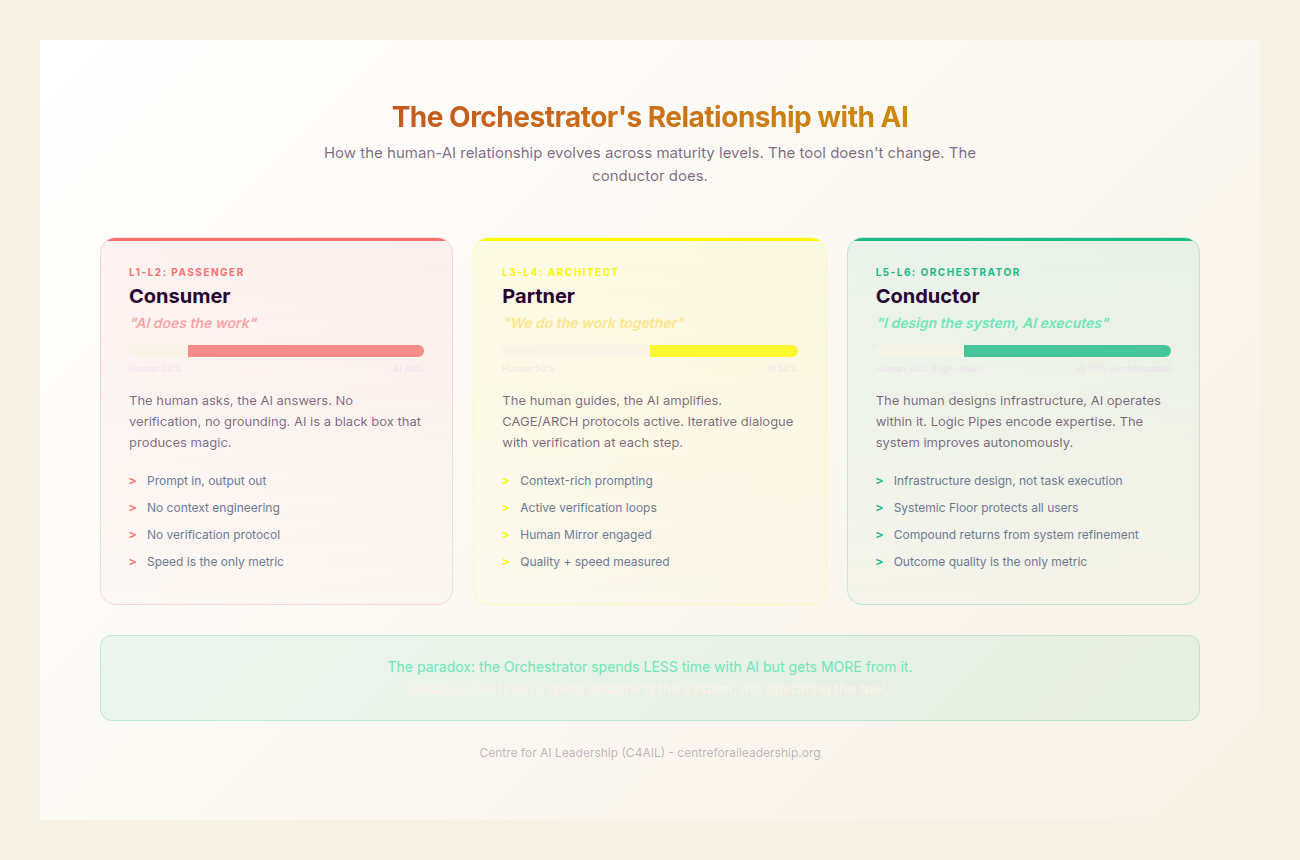

5.5 - The Orchestrator’s Relationship with AI

The relationship between the Orchestrator and the machine is fundamentally different from that of a lower-level user. It is a three-level progression of maturity:

- L0-2 (Passenger): AI is a “tool I use.” The relationship is transactional.

- L3-4 (Architect): AI is a “tool I interrogate.” The relationship is critical and investigative.

- L5-6 (Orchestrator): AI is a “medium I design through.” The relationship is foundational.

The Orchestrator as a Designer of Mediums

When the Orchestrator looks at an LLM, they don’t see a chatbot. They see a vast, high-dimensional space of possibilities that can be shaped by their expertise. They treat the AI as a “medium” (like clay or code) that can be moulded into a specific organisational shape.

As AI becomes more agentic - moving from “chat” to “do” - the Orchestrator becomes more important, not less. In a world of autonomous agents, the person who designs the “Rules of Engagement” (the CAGE templates and ARCH checks) is the person who holds the power. The Orchestrator is the one who ensures that the agents are not just active, but aligned with the firm’s Institutional and Experiential realities.

This answers the question that inevitably arises: “Who orchestrates the orchestration?” The answer terminates at the human. When you have multiple AI agents working in concert, their consensus can amplify the Eloquence Trap - three agents agreeing on a wrong answer is more persuasive than one. The Orchestrator is the irreducible human element who breaks that consensus when their deeper knowledge layers say otherwise.

The Power of Encoded Systems

One of the most critical aspects of the Orchestrator role is that their work persists beyond their physical presence. In the old “artisanal” model, when a senior expert like Sarah left the firm, her knowledge left with her. In the “architectural” model, her CAGE templates and Logic Pipes remain.

By encoding her tacit knowledge into the system, Sarah has addressed the “key person dependency” that plagues many organisations. Her expertise has been codified into a departmental asset. This is the ultimate expression of Decoupled Scaling: the ability for the organisation’s intelligence to grow independently of its headcount.

The Power Law Payoff: Multiplicative Value

The value of an Orchestrator is not additive; it is multiplicative. A single Orchestrator can produce department-level output by designing systems that others use. This is the Power Law Payoff.

The evidence for this payoff is becoming clear in the financial data of the early adopters:

- GitHub: By empowering their developers to move from “writing code” to “orchestrating code” via Copilot, GitHub has seen a Revenue Per Employee (RPE) of $4.26M, compared to an industry average of $960K (TRG 2025).

- JPMorgan: The firm has doubled its “AI-augmented” operations specialists from 3% to 6% of the workforce. These specialists (essentially Orchestrators in the making) have seen 40-50% efficiency gains in complex tasks like KYC (Know Your Customer) and fraud detection (JPMorgan 2024).

- PwC: Following a $1B investment in AI training, PwC reported a 95% voluntary engagement rate with their AI tools. More importantly, they saw 20-30% efficiency gains not by replacing people, but by allowing their senior auditors to move into Orchestrator roles, where they manage the Logic Pipes of the audit process rather than the manual data entry (PwC 2025).

These are the 5% of “Value Generators” identified by BCG (2025) - organisations achieving 5x revenue growth compared to laggards. They succeed because they invested in the human capability to architect AI systems, not merely to use AI tools. The contrast with the Workday finding is stark: 40% of AI time savings are currently lost to rework in organisations that deploy tools without Orchestrators to design the verification systems.

The Orchestrator does not just do their job better; they raise the baseline for everyone who uses the systems they build. They are the architects of the new Sovereign Command, ensuring that as the volume of machine intelligence grows, the level of human discernment grows with it. They are the final answer to the Eloquence Trap, proving that in the generative era, the greatest skill is not the ability to speak to the machine, but the ability to think through it.