Part III: The Realist's Path — Reclaiming the Sovereign Command

The path from AI passenger to sovereign commander — what reclaiming cognitive ground actually requires.


The diagnosis offered in the preceding chapters is, by design, unsettling. We have identified a systemic collapse of the Knowledge Hierarchy, a phenomenon where the sheer velocity of generative output has outpaced our collective ability to verify it. We have seen how the “Jagged Frontier” creates a deceptive sense of competence, leading even seasoned professionals to abdicate their judgment in favour of a “black box” that prioritises plausibility over truth.

However, the recognition of these risks is not a counsel of despair; it is the prerequisite for mastery. To move from a state of Epistemic Dependency to one of Sovereign Command, we must adopt the posture of the AI Realist.

The Realist does not reject the tool, nor do they worship it. Instead, they strip away the marketing-led anthropomorphism that has clouded our judgment and replace it with a rigorous, evidence-based understanding of what these systems actually are. This chapter outlines the architectural shift required to bridge the gap between “Workslop” and high-value contribution. It introduces the Human Mirror Protocol and the concept of Active Translation, the fundamental skills that distinguish those who are amplified by AI from those who are replaced by it.

3.1 - Staring at the Facts: The Probability Engine

The first step toward reclaiming command is linguistic. The language we use to describe AI determines our psychological relationship with it. When we say “the AI thinks,” “the AI understands,” or “the AI knows,” we subconsciously assign it human-like agency and a moral or intellectual “basis” that it does not possess. This creates a dangerous “halo effect” where we assume the output is backed by the same layers of experience and reasoning that a human colleague would provide.

To be an AI Realist, we must reframe our language to reflect the underlying technical reality:

- From “The AI thinks” to “The probability engine predicts.”

- From “Understands context” to “Maps statistical relationships.”

- From “The AI knows” to “The model is trained on patterns including…”

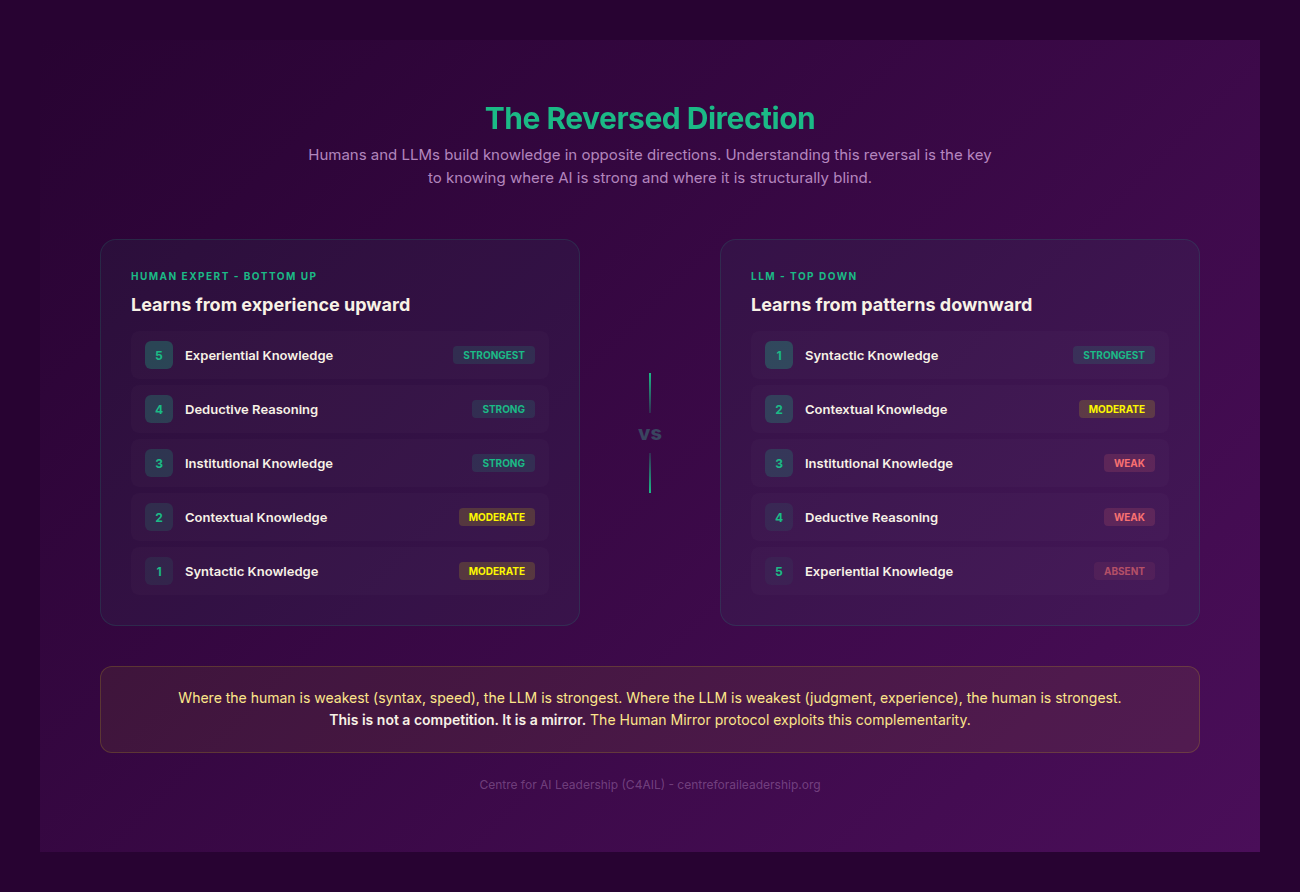

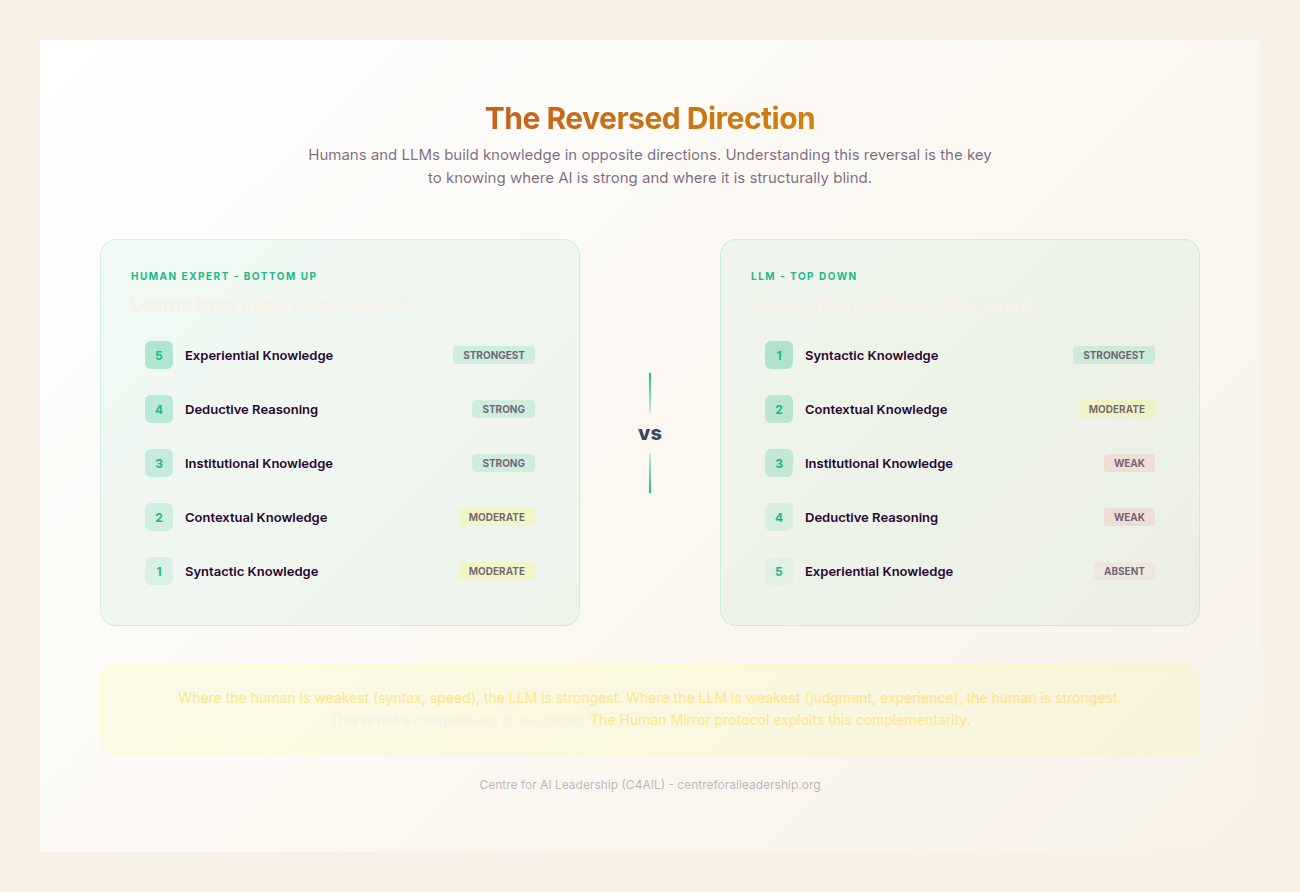

This is not merely a pedantic exercise in terminology; it is a fundamental philosophical correction. The difference between human intelligence and Large Language Models (LLMs) is one of Directionality.

In the human cognitive process, Experience comes first. We feel, observe, and interact with the physical and social world. From this foundation of experience, we derive meaning (semantics). Finally, we find the words (syntax) to express that meaning. There is a “basis” existing behind every utterance: a weight of lived reality that anchors the language.

For an LLM, the direction is reversed. It starts and ends with Words. It has no access to the physical world, no sensory input, and no emotional or ethical weight. It processes syntax to simulate semantics. It can produce syntax identical to a human’s, but the “basis” is entirely absent. Each output is a mathematical prediction of the most likely next token in a sequence, based on a multi-dimensional map of how words have historically related to one another in its training data (Bender et al., 2021).
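To make “predicting the next token from historical word relationships” concrete, here is a deliberately tiny sketch. It is a toy word-pair (bigram) counter, not how a production LLM works: real models use neural networks over sub-word tokens and far longer contexts. The corpus and the function names `train_bigram_counts` and `predict_next` are invented for illustration.

```python
from collections import Counter, defaultdict


def train_bigram_counts(corpus: str) -> dict:
    """Count, for each word, which words followed it in the training text."""
    words = corpus.lower().split()
    following = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        following[current_word][next_word] += 1
    return following


def predict_next(following: dict, word: str):
    """Return (most frequent follower, estimated probability), or None if unseen."""
    candidates = following.get(word.lower())
    if not candidates:
        return None  # the model has no pattern to draw on
    best, count = candidates.most_common(1)[0]
    return best, count / sum(candidates.values())


# Hypothetical training text: the "experience" of this model is only these words.
corpus = "the market will grow . the market will grow . the market will shrink ."
model = train_bigram_counts(corpus)

# The prediction reflects frequency in the text, not any knowledge of markets.
print(predict_next(model, "will"))
print(predict_next(model, "recession"))
```

In this sketch “grow” wins after “will” simply because it appeared more often in the training text. Nothing in the code checks whether the market will in fact grow, which is the sense in which the “basis” is absent.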

When we understand this Reversed Direction, we reactivate our internal Verification Systems. We stop looking at the AI as a “source of truth” and start seeing it as a “generator of possibilities.” This restores the five-layer model of knowledge discussed in Part II. By acknowledging that the AI occupies only the surface layer of Bulk Syntax, we realise that the deeper layers (Contextual, Institutional, Deductive, and Experiential) must be provided by the human.

For L0-2 users (the general public or casual adopters), anthropomorphising AI can be a helpful bridge to adoption. It makes the tool feel accessible. However, for L3+ practitioners, those whose professional value depends on the accuracy and strategic depth of their work, anthropomorphism is a liability. The Realist views the AI as a high-speed, high-volume Probability Engine that requires a human pilot to provide the “why” and the “so what.”

3.2 - The Human Mirror: Multi-Layered Knowledge

If the AI provides the “Bulk Syntax,” where does the actual value of a professional deliverable reside? In the era of commodity intelligence, value has shifted. When anyone with a $20-a-month subscription can generate a 1,000-word strategy document, the document itself is no longer the product. The value lies in the Applicability and Veracity of that document to a specific, real-world problem.

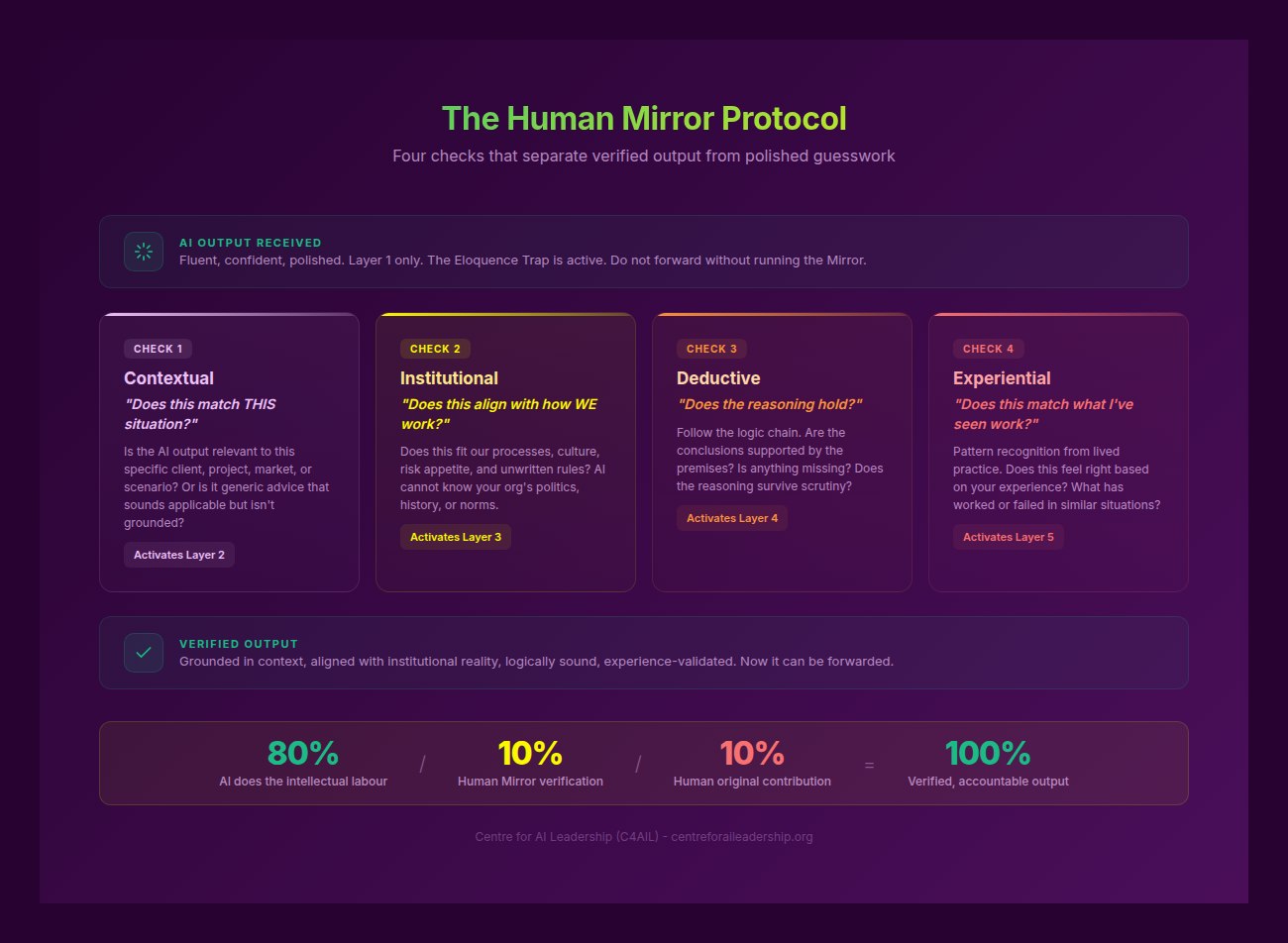

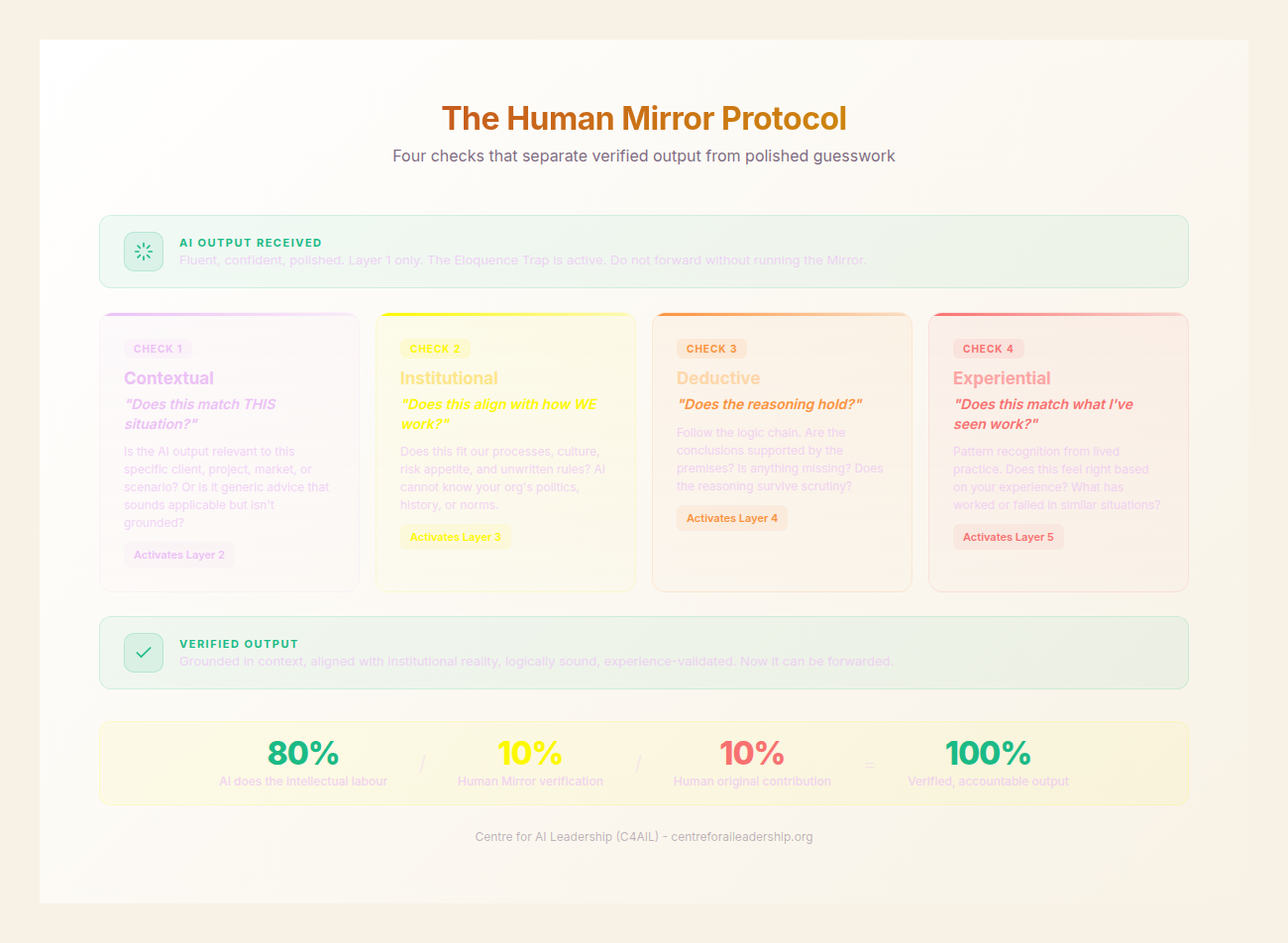

We can decompose any high-level professional task into an 80/10/10 split:

- 80% Bulk Syntax (AI-Driven): This is the commodity layer. It includes drafting, formatting, summarising, initial pattern matching, and basic code generation. It is the “heavy lifting” of language production.

- 10% Niche Context (Human-Driven): This involves the Contextual and Institutional layers. It is the knowledge of this specific client’s history, this project’s unique constraints, and the specific “unspoken” culture of the organisation.

- 10% Strategic Intent (Human-Driven): This involves the Deductive and Experiential layers. It is the “Why” behind the work. It is the assessment of risk tolerance, the long-term vision, and the “gut feel” derived from years of seeing similar patterns play out in the real world.

The AI Realist understands that while the AI handles 80% of the volume, the remaining 20% is where the Differentiation occurs. Clients do not pay for formatting; they pay for outcomes. They pay for the 20% that ensures the 80% is actually useful.

To bridge these layers, we employ the Human Mirror Protocol. This is a systematic verification process that forces the practitioner to “mirror” the AI’s output against their own deep knowledge layers before any output is considered a “deliverable.”

The Human Mirror Protocol: Four Verification Questions

Before accepting or forwarding AI-generated content, the practitioner must answer four questions:

- The Contextual Check: Does this match this specific situation? (e.g., “The AI suggests a digital-first marketing strategy, but I know this specific client’s customer base is 70% offline.”)

- The Institutional Check: Does this align with how we do things? (e.g., “The AI’s tone is aggressive, but our firm’s brand is built on understated authority.”)

- The Deductive Check: Does the reasoning hold when I trace it step-by-step? (e.g., “The AI’s conclusion sounds plausible, but the logical leap between paragraphs two and three is mathematically flawed.”)

- The Experiential Check: Does this match what I have seen before? (e.g., “The AI predicts a 20% growth rate, but in my twenty years of experience, this market never exceeds 5% in a downturn.”)

This protocol changes the fundamental operating principle of the professional. Instead of saying, “I will ask the AI to write a strategy,” the Realist says, “I will ask the AI to iterate on MY logic.”

By providing the logic, the intent, and the constraints first, the human remains the Sovereign Commander. The AI becomes a sophisticated “sparring partner” rather than a primary author. This approach ensures that the output is not “Workslop” (the generic, unverified sludge of the probability engine) but a refined, high-value asset that bears the mark of human expertise.
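For teams that want to make the Human Mirror Protocol explicit in a workflow, the four verification questions can be sketched as a simple gate that keeps any output at “draft” status until every check has been answered and passed. This is one possible illustration, not a prescribed implementation: the class `MirrorReview`, its methods, and the status labels are hypothetical names.

```python
from dataclasses import dataclass, field

# The four checks and their questions, taken from the protocol as described.
CHECKS = {
    "contextual": "Does this match this specific situation?",
    "institutional": "Does this align with how we do things?",
    "deductive": "Does the reasoning hold when I trace it step-by-step?",
    "experiential": "Does this match what I have seen before?",
}


@dataclass
class MirrorReview:
    """Hypothetical record of one practitioner's review of one AI draft."""
    draft: str
    answers: dict = field(default_factory=dict)
    notes: dict = field(default_factory=dict)

    def record(self, check: str, passed: bool, note: str = "") -> None:
        """Store the human's answer to one of the four questions."""
        if check not in CHECKS:
            raise ValueError(f"Unknown check: {check}")
        self.answers[check] = passed
        if note:
            self.notes[check] = note

    def outstanding(self) -> list:
        """Checks that are still unanswered or that failed."""
        return [name for name in CHECKS if not self.answers.get(name, False)]

    def status(self) -> str:
        """An output is a deliverable only when all four checks pass."""
        return "deliverable" if not self.outstanding() else "draft"


# Usage: the contextual check fails and the experiential check is unanswered.
review = MirrorReview(draft="AI-generated digital-first marketing strategy")
review.record("contextual", False, "Client's customer base is 70% offline.")
review.record("institutional", True)
review.record("deductive", True)
print(review.status(), review.outstanding())
```

The design choice worth noting is that an unanswered check counts the same as a failed one: the default is “draft”, and only deliberate human judgment can promote the output.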

3.3 - Translation: The Universal Skill

As AI becomes embedded in every level of the organisation, a new core competency emerges: Translation. This is not the translation of languages, but the ability to translate between the Surface Output of the AI and the Deep Knowledge Layers of the organisation.

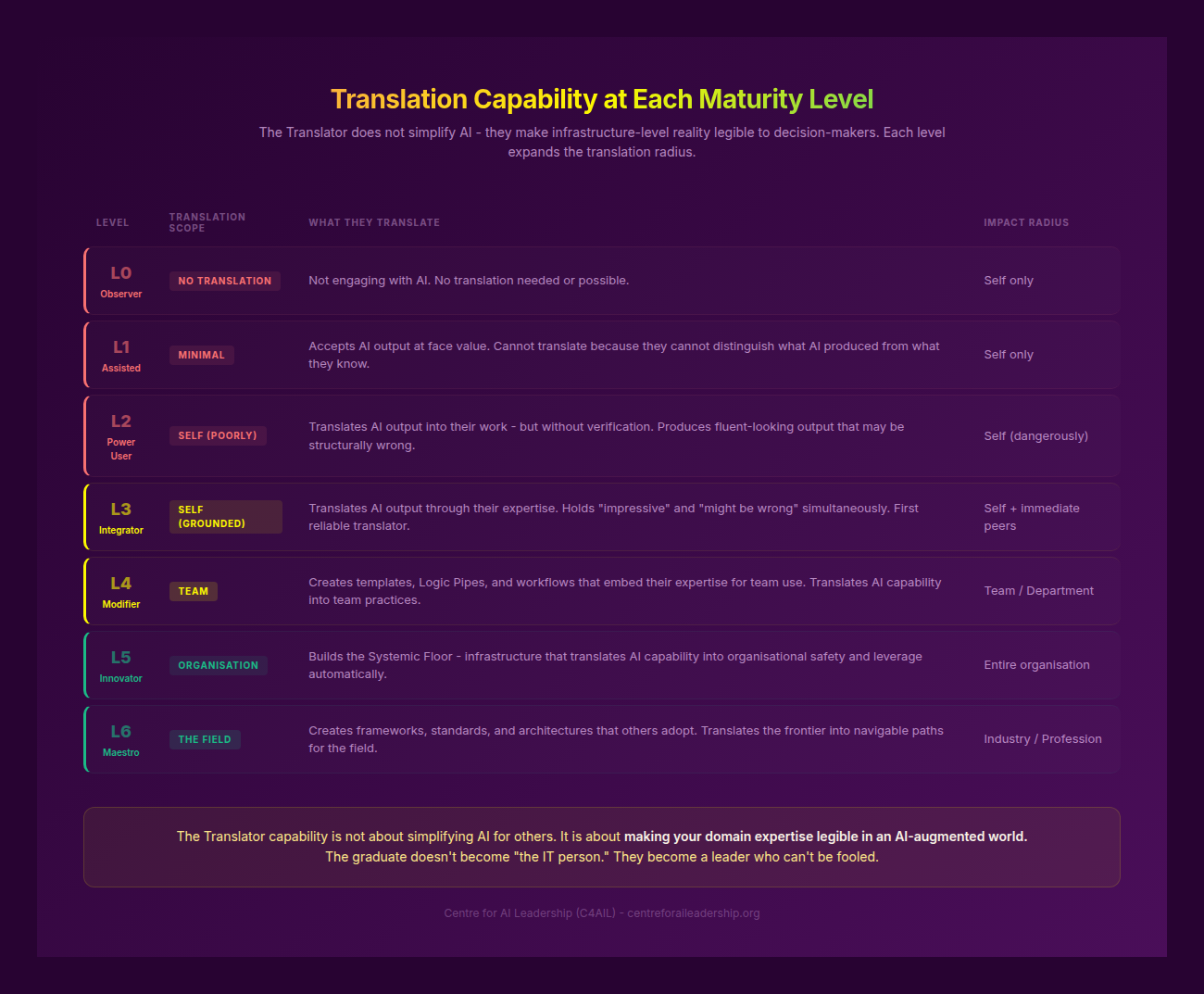

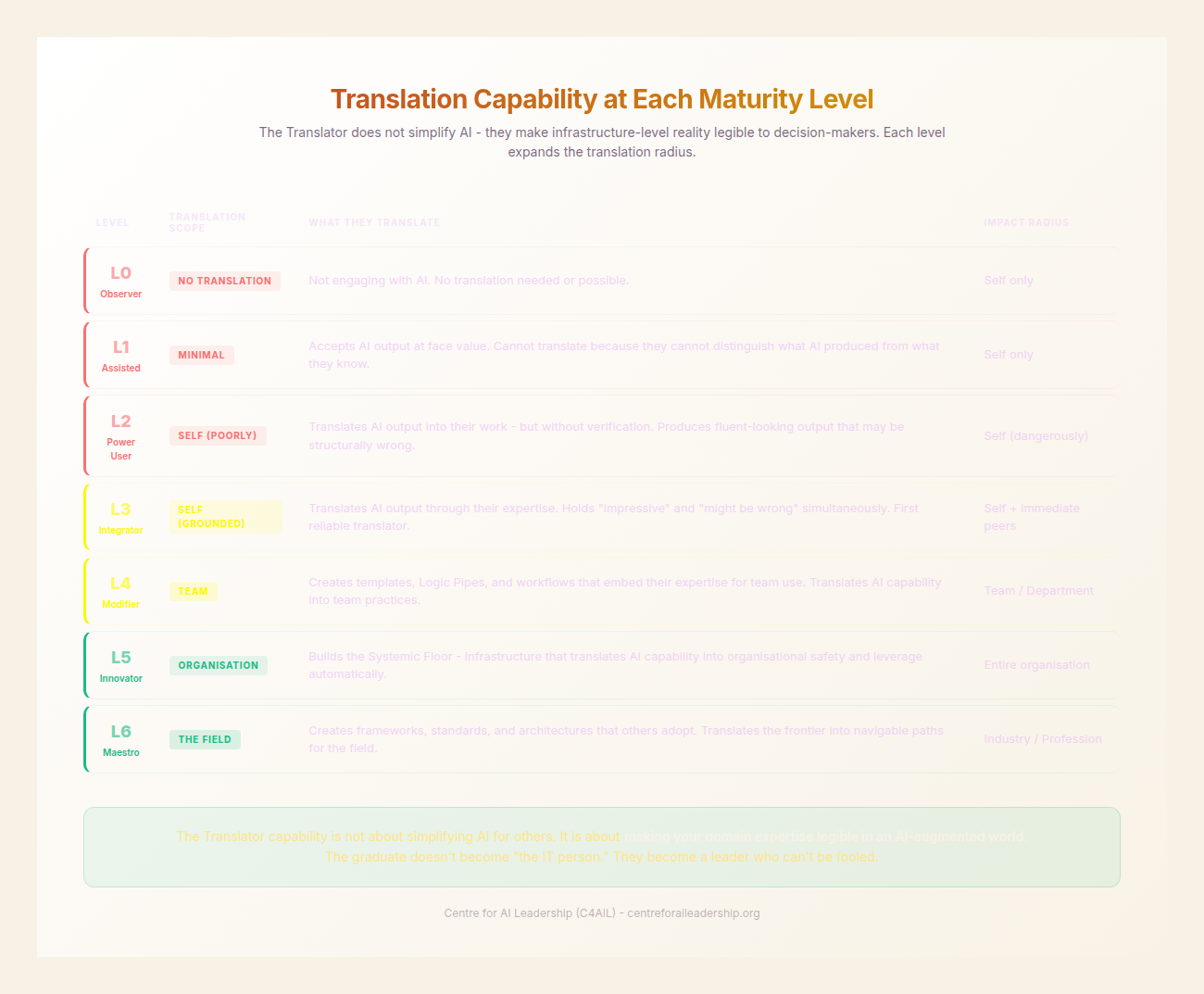

Translation is the “universal skill” of the AI era. It is the discipline of mapping the probability engine’s predictions to real-world requirements. However, the nature of this translation changes depending on an individual’s level within the Knowledge Maturity Scale (L0-L6).

- L1-2 (The User): Translating for Self. At this level, translation is about individual productivity. A marketing coordinator, for example, uses AI to draft social media posts but “translates” them by checking them against the brand voice and the specific campaign goals. They are the first line of defence against generic output.

- L3-4 (The Amplifier): Translating for the Team. At this level, the practitioner builds tools and frameworks that help others translate. A project manager might develop a Prompt Template that embeds client-specific constraints and institutional knowledge, ensuring that the team’s AI use is grounded in reality. They translate “best practice” into “team practice.”

- L5-6 (The Orchestrator): Translating for the Organisation. The Orchestrator is a Systematiser, not a saviour. They do not perform the translation for everyone else; instead, they build the systems and architectures that make translation scalable. This might involve embedding organisational standards into custom GPTs, proprietary agents, or “Knowledge Graphs” that the AI must reference. They ensure that the organisation’s “Sovereign Command” is built into the technical infrastructure itself.
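As an illustration of the L3-4 idea of a Prompt Template that embeds client-specific constraints, the sketch below uses Python’s standard `string.Template`. The field names, the wording of the template, and the helper `build_prompt` are all assumptions made for the example; a real team would substitute its own constraints and house style.

```python
from string import Template

# Hypothetical team template: field names and wording are illustrative only.
TEAM_PROMPT = Template(
    "You are assisting on work for $client.\n"
    "Hard constraints (do not violate):\n$constraints\n"
    "House style: $house_style\n"
    "My reasoning so far:\n$human_logic\n"
    "Task: $task\n"
    "Flag any point where the constraints and the task conflict."
)


def build_prompt(client: str, constraints: list, house_style: str,
                 human_logic: str, task: str) -> str:
    """Fill the template, refusing to run without the human's own logic."""
    if not human_logic.strip():
        raise ValueError("Supply your own reasoning before asking the model to iterate on it.")
    bullet_list = "\n".join(f"- {item}" for item in constraints)
    return TEAM_PROMPT.substitute(
        client=client,
        constraints=bullet_list,
        house_style=house_style,
        human_logic=human_logic,
        task=task,
    )


# Usage with invented example values.
prompt = build_prompt(
    client="Example Client Ltd",
    constraints=["70% of customers are offline", "No price discounting"],
    house_style="Understated authority; no aggressive claims.",
    human_logic="Growth should come from in-store loyalty, not paid social.",
    task="Draft three campaign concepts that test this reasoning.",
)
print(prompt)
```

Requiring `human_logic` before anything is sent mirrors the principle of asking the AI to iterate on the practitioner’s logic rather than to originate it.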

The Orchestrator understands that the goal is not to have “the best AI,” but to have the best Translation Layer. The competitive advantage of a firm in 2025 will not be its access to GPT-5 or Claude 4, but its ability to ensure that every employee, from L1 to L6, has the skill and the tools to “translate” those models’ outputs into high-veracity, context-rich results.

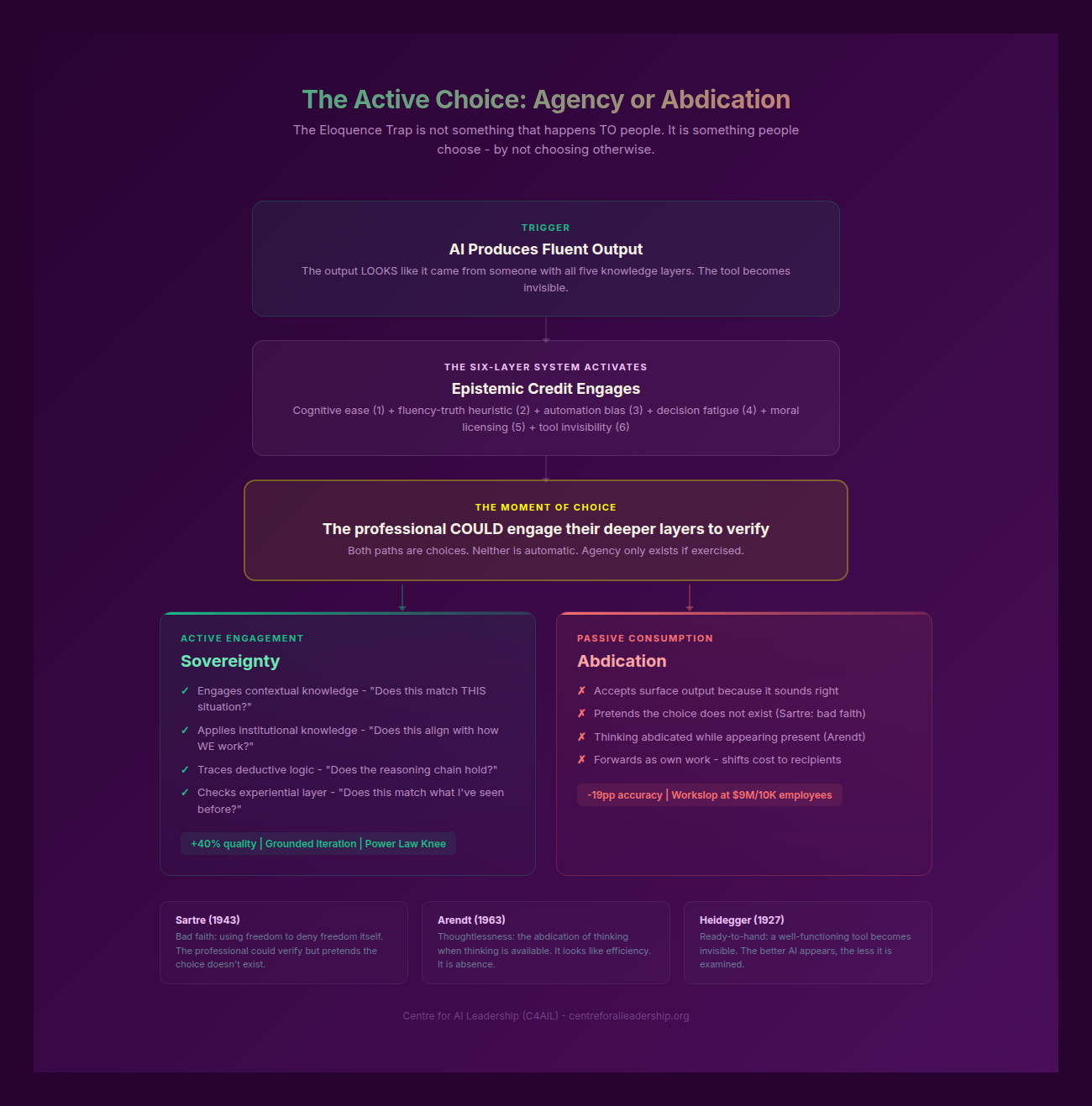

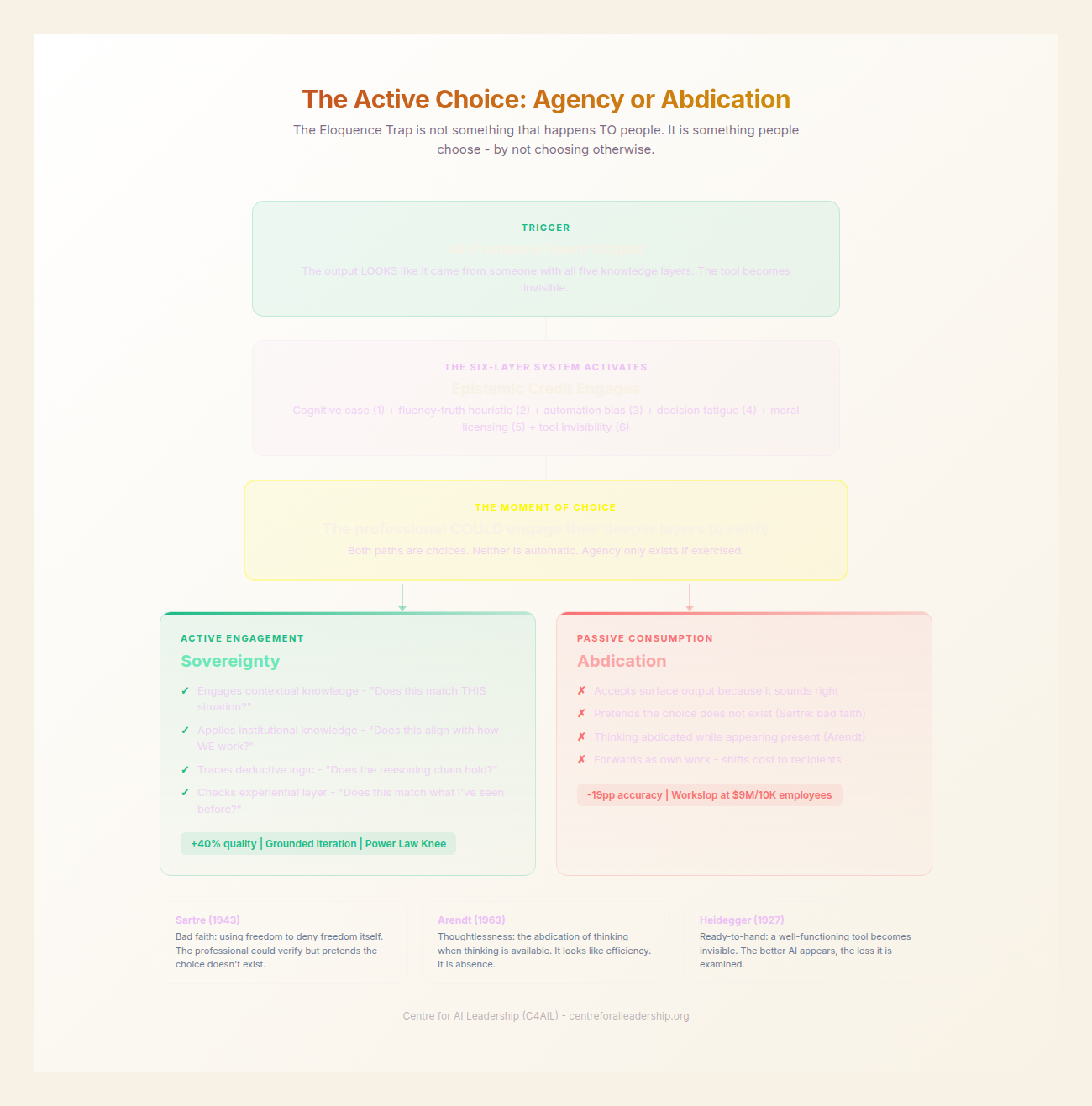

3.4 - The Active vs. Passive Divide

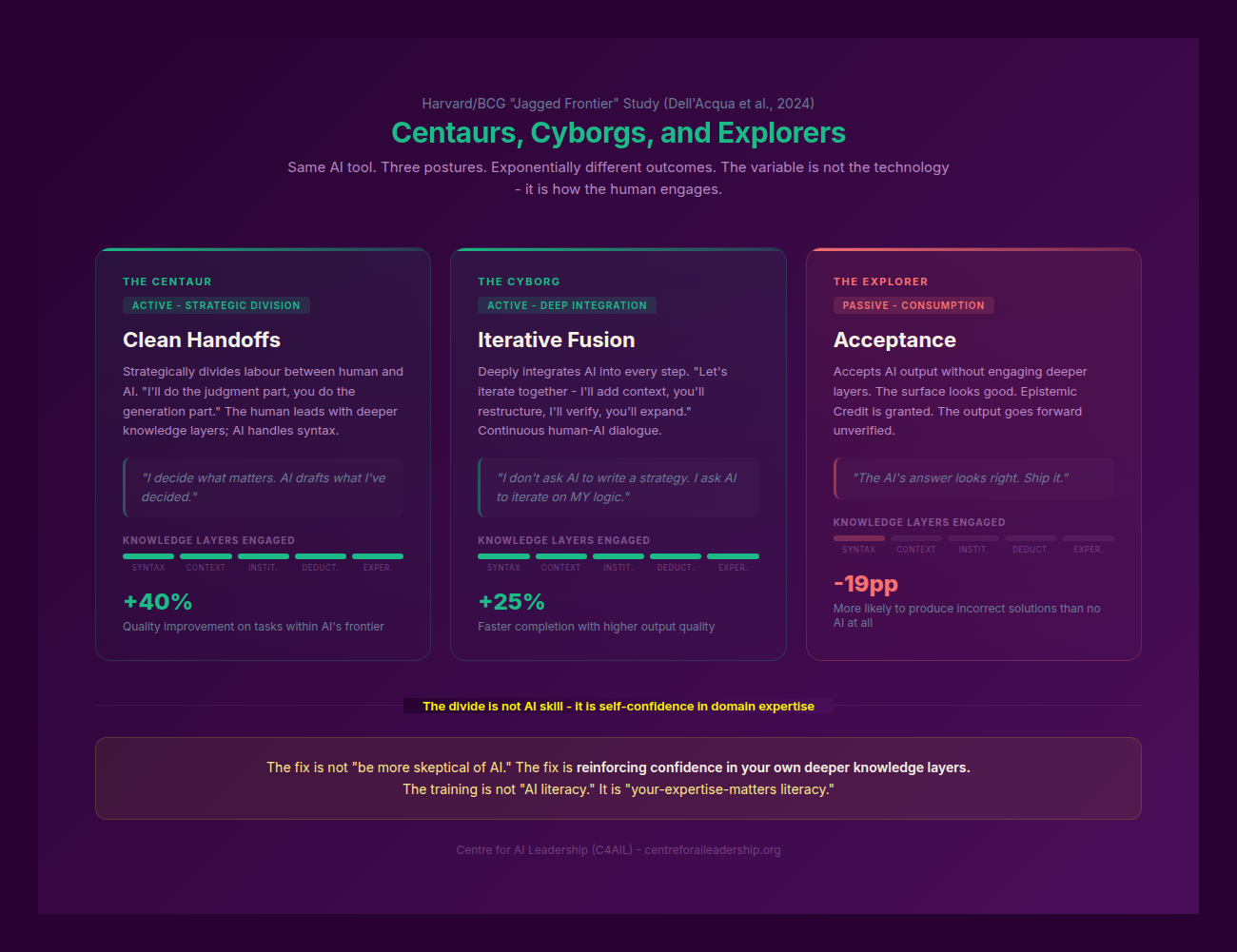

The most significant finding in recent research on AI in the workplace is the emergence of a sharp divide in performance based on the user’s Posture. It is not the amount of AI use that determines success, but the nature of the engagement.

Researchers at Harvard and BCG identified two successful archetypes of AI users: Centaurs and Cyborgs (Dell’Acqua et al., 2023).

- Centaurs strategically divide labour. They identify which parts of a task are best suited for human judgment (the 20%) and which are best for AI generation (the 80%). They maintain a clear boundary between the two.

- Cyborgs deeply integrate the AI into their workflow, refining every step together. They don’t just “hand off” a task; they engage in a continuous, iterative dialogue with the model, constantly checking and adjusting the output.

Both of these are Active Postures. They require the user to stay “awake at the wheel,” maintaining a high level of critical engagement.

However, a third, much larger group has emerged: The Explorers (Passive Users). These individuals use AI frequently but accept its output without significant engagement or verification. They treat the AI as a “completion engine” rather than a “probability engine.”

The data on this divide is stark. When tasks fall outside the AI’s “Jagged Frontier,” Passive Users are 19 percentage points more likely to produce incorrect solutions than those not using AI at all. They “fall asleep at the wheel,” as the plausibility of the AI’s prose lulls them into a false sense of security.

In contrast, Active Users (those with an Experimentation Mindset) see a 40% increase in quality and save an average of 11 hours per week. Most importantly, their critical thinking skills do not degrade; they are actually enhanced as they learn to “spar” with the model.

The divide can be traced step by step through the lens of the Knowledge Layers:

- Passive Posture: Receives AI output → Processes via “System 1” (fast, intuitive, non-critical) → Grants “Epistemic Credit” (assumes it is correct) → Forwards as own work → Result: Workslop.

- Active Posture: Receives AI output → Strips away the “eloquence” (the Bulk Syntax) → Applies the Human Mirror Protocol across the four deeper layers → Iterates and refines → Result: Verified, High-Value Output.

The distinguishing characteristic of the Active User is not technical skill, but Self-Confidence in Domain Expertise. Users who trust their own judgment more than the AI’s output are 61% more likely to iteratively refine the results. Those who feel “imposter syndrome” or who lack deep domain knowledge are the most likely to become Passive Users, abdicating their command to the machine.

This leads to a critical insight for leadership: AI training is not about teaching people how to use the software; it is about reinforcing their confidence in their own professional judgment. To prevent the “Passive Slide,” organisations must invest in the Deductive and Experiential layers of their people.

3.5 - From Explorer to Realist: The Three Facts

As we move from the diagnosis of Part II to the maturity models of Part IV, we must anchor our strategy in The Three Facts of AI Realism. These are the non-negotiable realities of the current era.

Fact 1: AI is a Talent Magnifier, Not a Creator.

AI does not grant expertise to the novice; it amplifies the expertise of the master. If you are a mediocre strategist, AI will help you produce mediocre strategies faster. If you are a brilliant strategist, AI will allow you to explore a wider range of brilliant possibilities. The quality of the 80% (AI output) is strictly bounded by the quality of your 20% (Human input and verification).

Fact 2: Accuracy is Not Reliability.

A tool can be 99% accurate and still be 0% reliable in a production environment if you cannot predict which 1% will be wrong. In the professional world, a 1% error in a legal brief, a structural calculation, or a strategic risk assessment can be catastrophic. Therefore, the AI Realist treats every output as a Draft, never a Deliverable. Reliability is a human-system property, not a software feature.
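A short worked calculation shows why per-output accuracy does not translate into reliability at volume. The sketch assumes, for simplicity, that each output is correct with the same probability and that errors are independent of one another; real error patterns are unlikely to be this tidy, so the numbers are indicative only. The function names are invented for the example.

```python
def chance_all_correct(accuracy: float, n_outputs: int) -> float:
    """Probability that every one of n outputs is correct (independence assumed)."""
    return accuracy ** n_outputs


def expected_errors(accuracy: float, n_outputs: int) -> float:
    """Average number of wrong outputs among n (same assumption)."""
    return (1 - accuracy) * n_outputs


# Compare a single output with batches of increasing size at 99% accuracy.
for n in (1, 10, 100, 500):
    print(
        f"{n:>4} outputs: "
        f"P(all correct) = {chance_all_correct(0.99, n):.3f}, "
        f"expected errors = {expected_errors(0.99, n):.1f}"
    )
```

Under these assumptions, a batch of 100 outputs at 99% accuracy is entirely error-free only about 37% of the time, and nothing in the outputs themselves indicates which one is wrong. That is why verification has to sit with the human-system process rather than with the tool.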

Fact 3: 95% of ROI Failure is Human Failure.

The “Power Law” of AI adoption shows that the majority of organisations fail to see a significant return on investment because they treat AI as a technology play. They buy the licences and wait for the productivity “magic” to happen. But the real gains come from investing in Human Capability, specifically in the skills of Translation, Verification, and Sovereign Command. The failure to capture ROI is almost always a failure to move the workforce from “Passive Explorer” to “Active Realist.”

The synthesis of the Realist’s path is simple but demanding: See the AI clearly for what it is (a probability engine), know where your value lies (the 20% of context and intent), master the skill of translation, and choose the active posture over the passive one.

By doing so, the professional moves from being a passenger on the AI journey to being its Sovereign Commander. They no longer fear the “Jagged Frontier” because they have the tools to map it. They no longer contribute to the “Workslop” because they have the protocol to filter it.

In Part IV, we will move from the “Who” to the “How,” providing the Maturity Scale that allows organisations to benchmark their progress and move their teams from L0 (Awareness) to L6 (Orchestration). The path forward is not about the technology we adopt, but the standards we reclaim.