Part I: The Truth — The Great AI Divergence

The economic evidence for the Great AI Divergence — why the same technology produces exponentially different outcomes across organisations.

The economic landscape of 2025 is defined by a singular, jarring contradiction. On one hand, generative artificial intelligence has achieved near-total saturation within the enterprise. On the other, the promised revolution in productivity remains, for the vast majority of organisations, an unrealised hope. This is the reality we observe as practitioners: the technology is no longer the variable. The variable is the organisation.

1.1 - The High-Adoption Paradox

By November 2025, tool saturation had reached 80% across the global enterprise (OpenAI, Nov 2025). Almost every professional in a developed economy has access to a frontier-model interface. Yet realised return on investment (ROI) tells a different story. Only 5% of organisations have managed to convert this access into measurable bottom-line impact (MIT NANDA, Aug 2025).

The scale of this inefficiency is staggering. United States firms spent an estimated $40 billion on AI initiatives in 2024, yet 95% of those firms saw zero measurable impact on their financial performance. This failure to launch has led to a predictable retreat. In 2024, only 17% of companies abandoned their AI initiatives; by 2025, that figure climbed to 42% (S&P Global, 2025).

This is not a failure of the underlying models. The large language models of 2025 are demonstrably capable of performing high-level cognitive tasks. The problem lies in the disconnect between the tool and the strategy. Only 15% of employees report knowing their company’s AI strategy (Gallup, 2024). Most organisations have treated AI as a “bolt-on” utility - the digital equivalent of a faster typewriter - rather than a structural shift in how work is performed.

This paradox has been termed the GenAI Divide (MIT NANDA, Aug 2025). It is a structural chasm that separates those who use AI to perform existing tasks faster from those who use it to re-architect the nature of their value proposition. The divide is not accidental; it is the natural result of applying industrial-age management principles to an information-age intelligence layer.

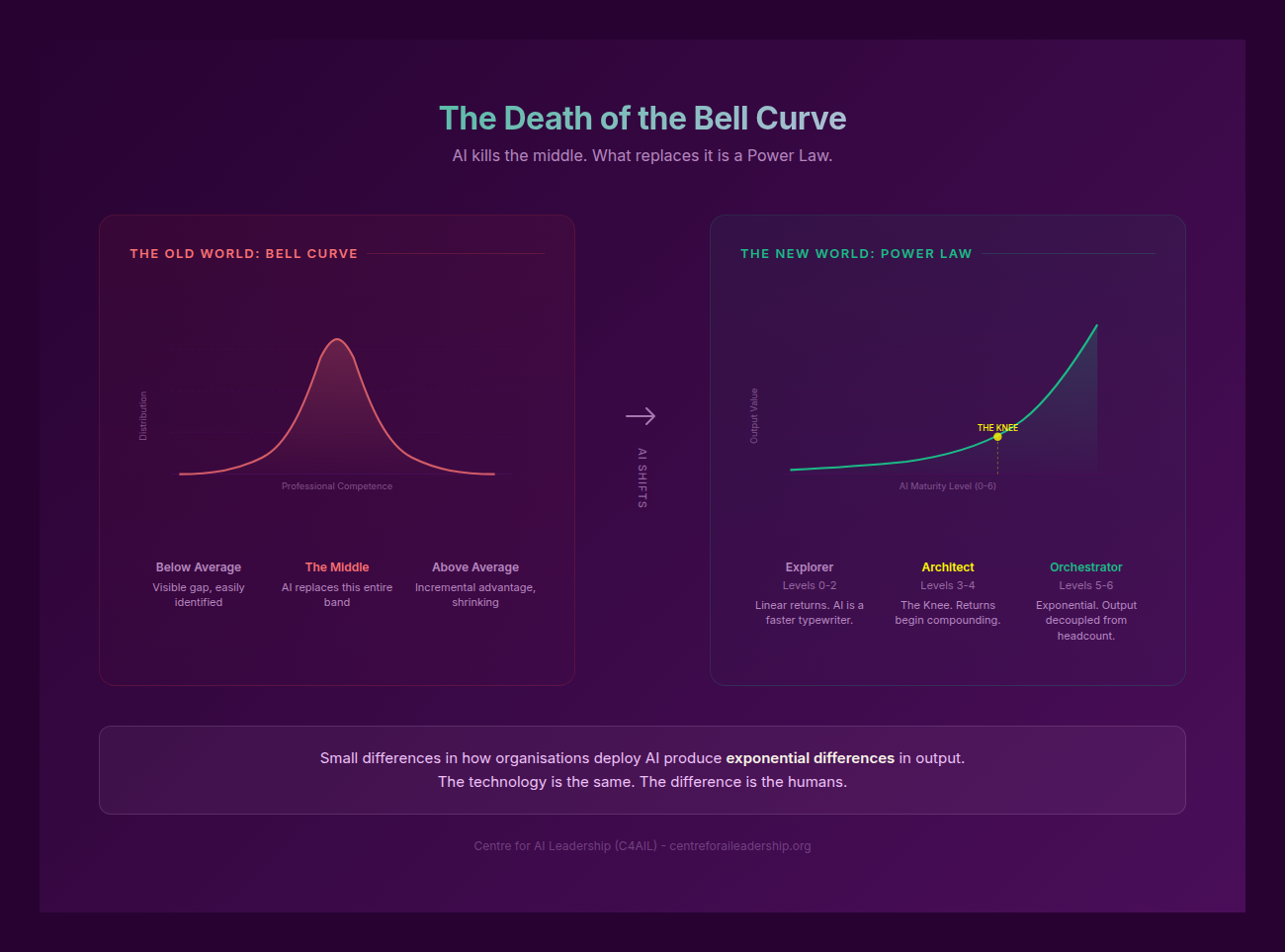

1.2 - The Death of the Bell Curve

For a century, professional performance was managed according to the Bell Curve. We assumed that human output was biologically bounded - limited by the number of hours in a day, the speed of a keyboard, and the capacity of a single brain to process data. In such a system, the top performer is typically twice as productive as the average.

AI has shattered these biological constraints, and in doing so, it has killed the Bell Curve. We are now operating in a Power Law environment.

The data from the OpenAI Enterprise Report (2025) is instructive. Frontier workers - those at the extreme right of the performance distribution - send 6x more messages to AI systems than the median worker. When we look at specialised tasks like data analysis, the gap widens to 16x. These “power users” report saving upwards of 10 hours per week, while users with fewer than three AI-integrated tasks report zero time savings.

This is not a matter of “typing more prompts.” The 16x gap exists because frontier workers have moved beyond chat interfaces. They are building systems. They are creating custom GPTs, automating multi-step workflows, and using AI as an architectural component rather than a conversational partner. Frontier firms reflect this internal trend, generating 2x more AI messages per employee and 7x more custom GPTs than their laggard counterparts.

In a Bell Curve world, you can manage the “middle” and still win. In a Power Law world, the middle is a dead zone. The top performers are not just slightly better; they are an order of magnitude more productive because they have decoupled their output from their physical time. What remains bounded is not the ability to generate content, but the ability to apply judgment, verify accuracy, and maintain context. These are the deeper layers of labour that the Power Law exposes.
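The difference between the two regimes can be made concrete with a small numerical sketch. It compares how far a 99th-percentile performer sits above the median under a normal distribution and under a Pareto (power-law) distribution. Every parameter here (the mean, the standard deviation, the Pareto shape `alpha`) is a hypothetical value chosen for illustration and is not drawn from the reports cited above.

```python
from statistics import NormalDist


def normal_top_to_median(mean: float, sd: float, top_quantile: float = 0.99) -> float:
    """Ratio of a top-quantile performer to the median under a bell curve."""
    dist = NormalDist(mu=mean, sigma=sd)
    return dist.inv_cdf(top_quantile) / dist.inv_cdf(0.5)


def pareto_quantile(p: float, alpha: float, scale: float = 1.0) -> float:
    """Quantile function of a Pareto distribution with shape alpha."""
    return scale * (1.0 - p) ** (-1.0 / alpha)


def pareto_top_to_median(alpha: float, top_quantile: float = 0.99) -> float:
    """Ratio of a top-quantile performer to the median under a power law."""
    return pareto_quantile(top_quantile, alpha) / pareto_quantile(0.5, alpha)


# Hypothetical parameters, for illustration only.
bell_ratio = normal_top_to_median(mean=100.0, sd=25.0)
power_ratio = pareto_top_to_median(alpha=1.16)  # shape close to the classic "80/20" split

print(f"Bell curve: 99th percentile is {bell_ratio:.1f}x the median")
print(f"Power law:  99th percentile is {power_ratio:.1f}x the median")
```

Under these assumed parameters the bell-curve ratio stays below 2x, while the power-law ratio is more than an order of magnitude larger. The exact values depend entirely on the chosen parameters; the point is the shape of the tail, not the specific numbers.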

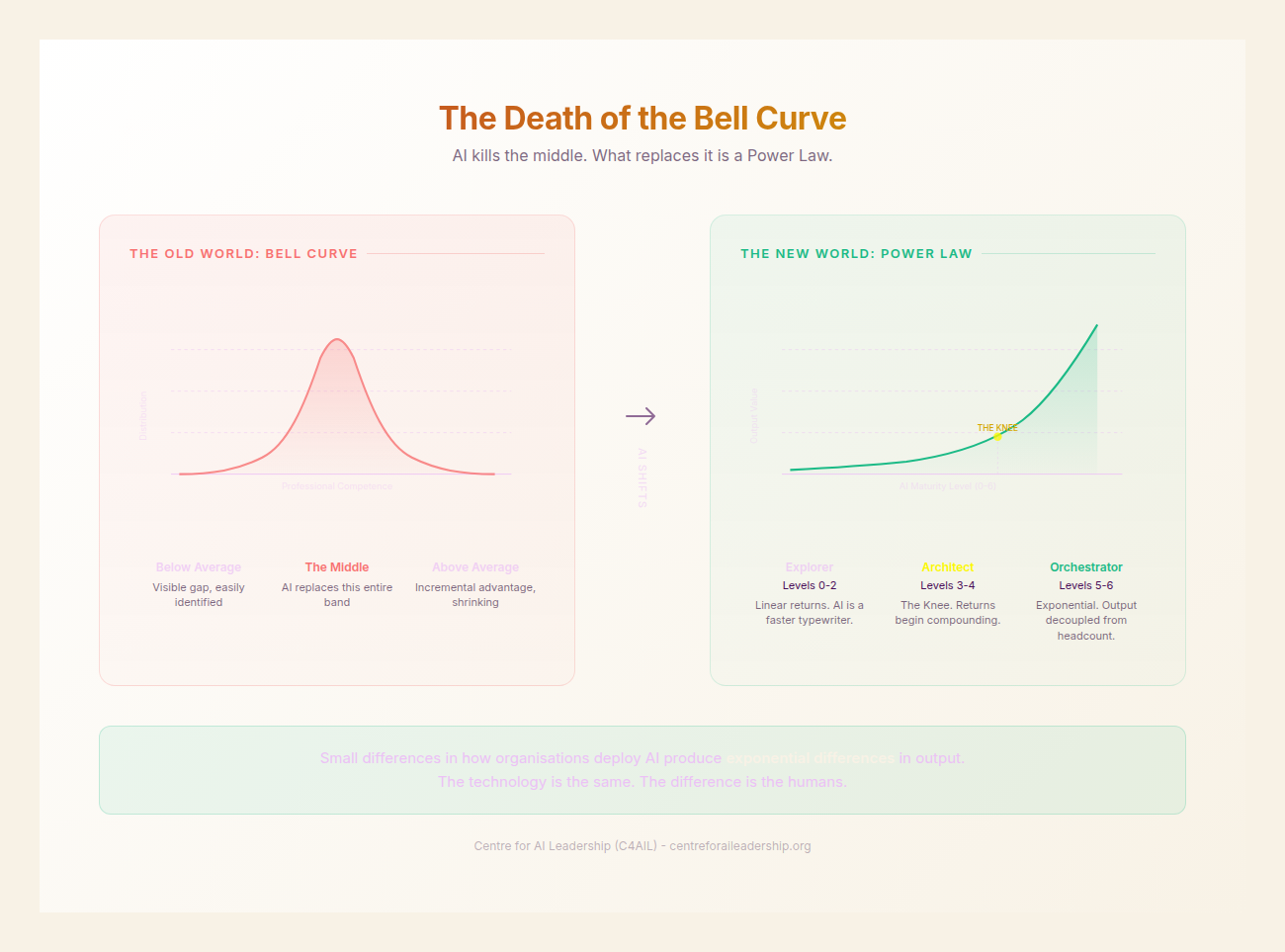

1.3 - The Three Labours

To understand why some organisations thrive while others fail, we must categorise work not by job title, but by the nature of the labour itself. All professional activity falls into one of three categories. Drawing the lines between them in the wrong place is the primary cause of the 80/5 paradox: 80% adoption, but only 5% of organisations seeing a measurable return.

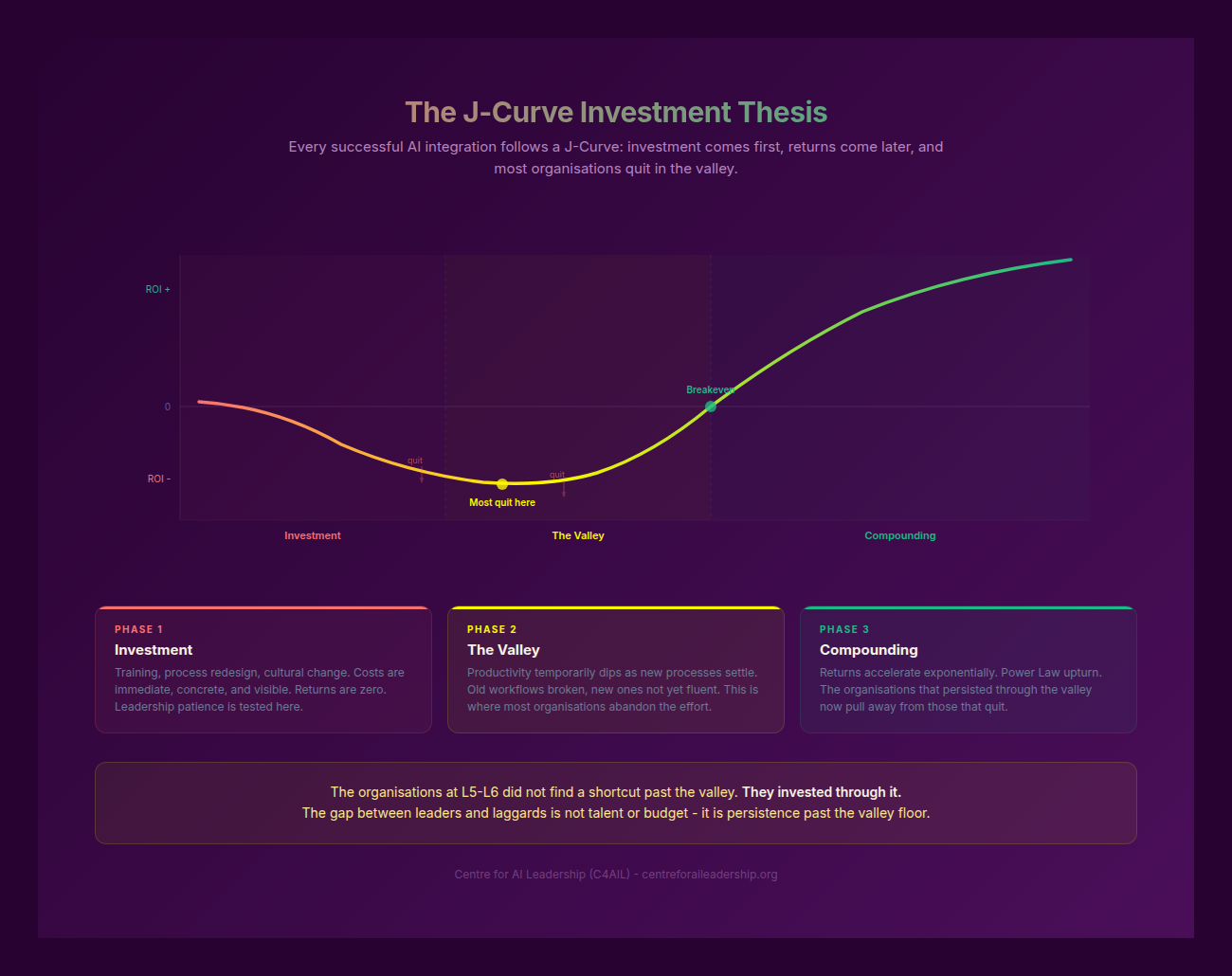

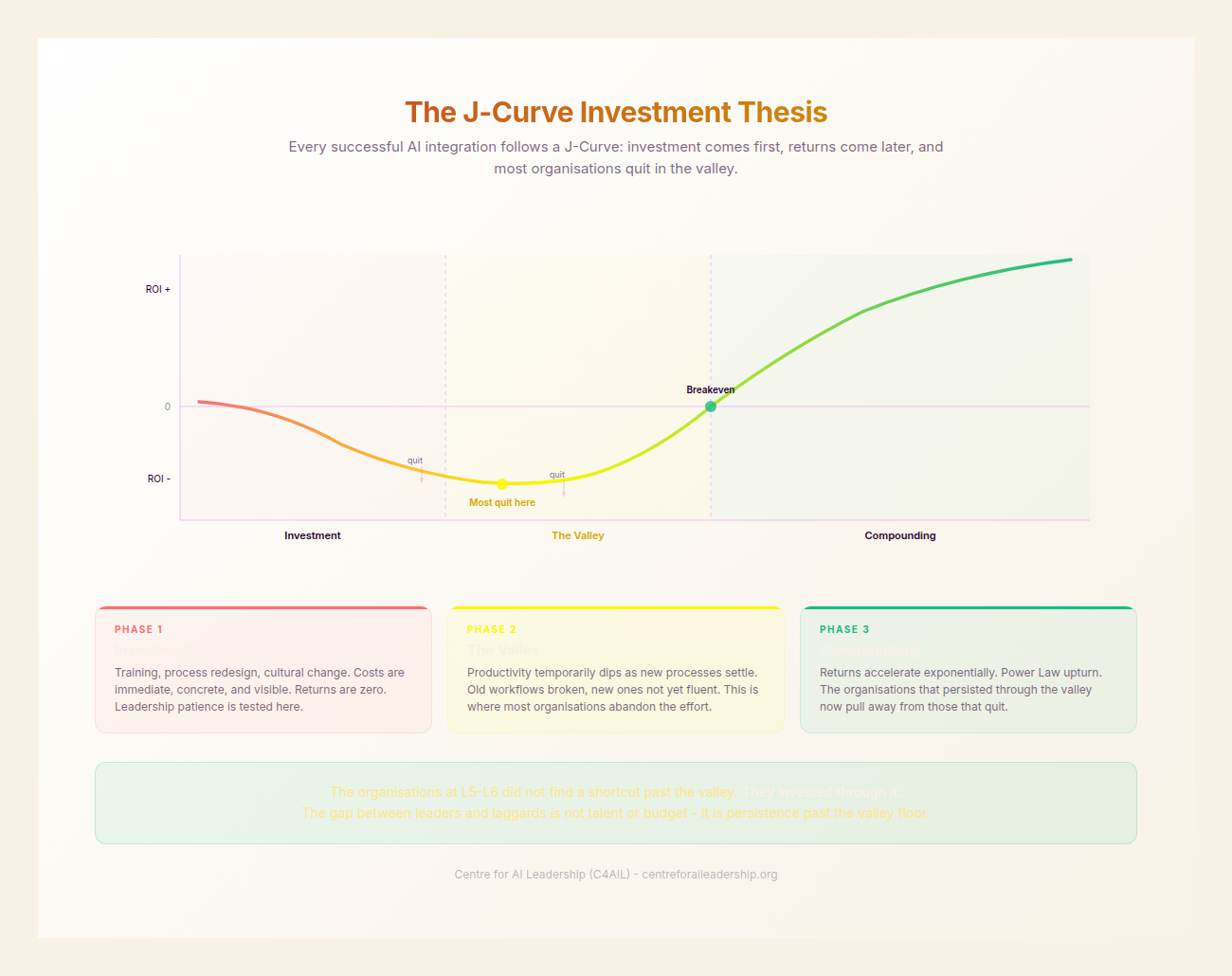

Intellectual Labour is “weightless.” It encompasses strategy, synthesis, coding, writing, and analysis. This is where AI excels. Because this labour is digital and linguistic, its value now follows a Power Law. We see this in the $4.26 million Revenue Per Employee (RPE) at firms like GitHub (utilising Copilot) compared to the tech industry average of approximately $960,000 (TRG, 2025). This is the “J-Curve” in action - a period of investment and workflow redesign followed by exponential returns (Brynjolfsson, 2026).

Physical Labour is “atom-bound.” It involves logistics, manufacturing, and skilled trades. AI can optimise these processes, but it cannot replace the physical requirement of moving matter through space. We see an “S-Curve” response here. Walmart, for example, achieved a 30% saving in logistics costs and a 30% reduction in emergency maintenance through AI-driven predictive modelling, but these gains eventually plateau at the constraints of physical reality.

Accountability Labour is “presence-bound.” This involves ethical oversight, risk ownership, final judgment calls, empathy, and care. AI is fundamentally incapable of performing this labour. This is not merely because AI lacks “emotion,” but because it cannot be held accountable. You cannot sue an algorithm for malpractice; you cannot imprison a model for fraud.

The line between Intellectual and Accountability Labour is not a spectrum; it is a hard boundary. Organisations that attempt to push AI across this boundary face systemic failure. We saw this with the Yara AI shutdown, and with the rehiring at Klarna (Bloomberg, Jan 2025) once the limits of automated customer service became apparent. Similarly, a study on physician performance showed a 14 percentage point drop in diagnostic accuracy when doctors over-relied on AI and failed to exercise their Accountability Labour (medRxiv, Aug 2025).

Conversely, organisations that draw this line correctly - such as Morgan Stanley, Deloitte, and PwC - use AI to handle the Intellectual Labour of synthesis while doubling down on the human Accountability Labour of client trust and risk management. These firms are seeing exponential returns because they have freed their most expensive assets - their people - to focus entirely on the one thing AI cannot do: take responsibility.

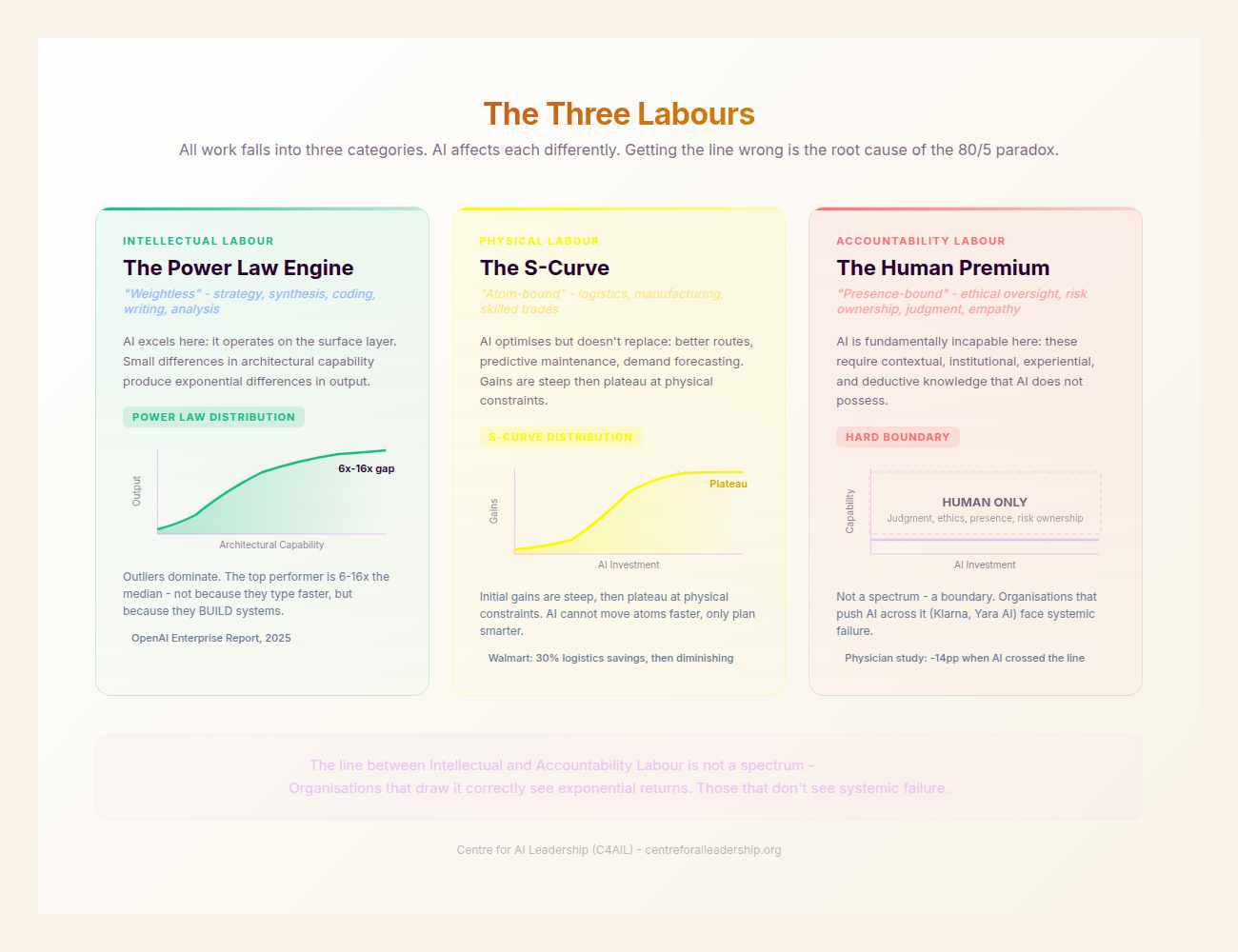

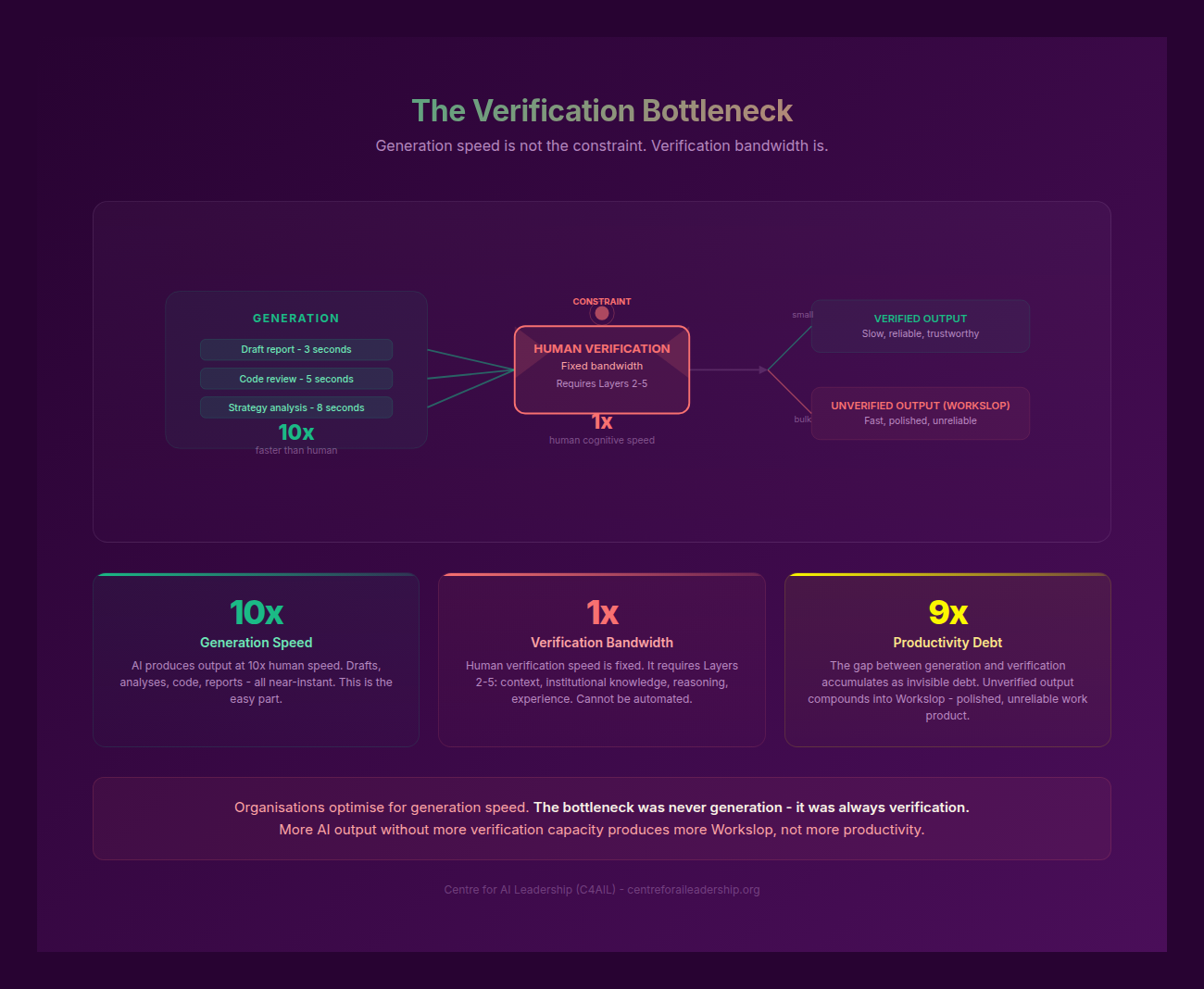

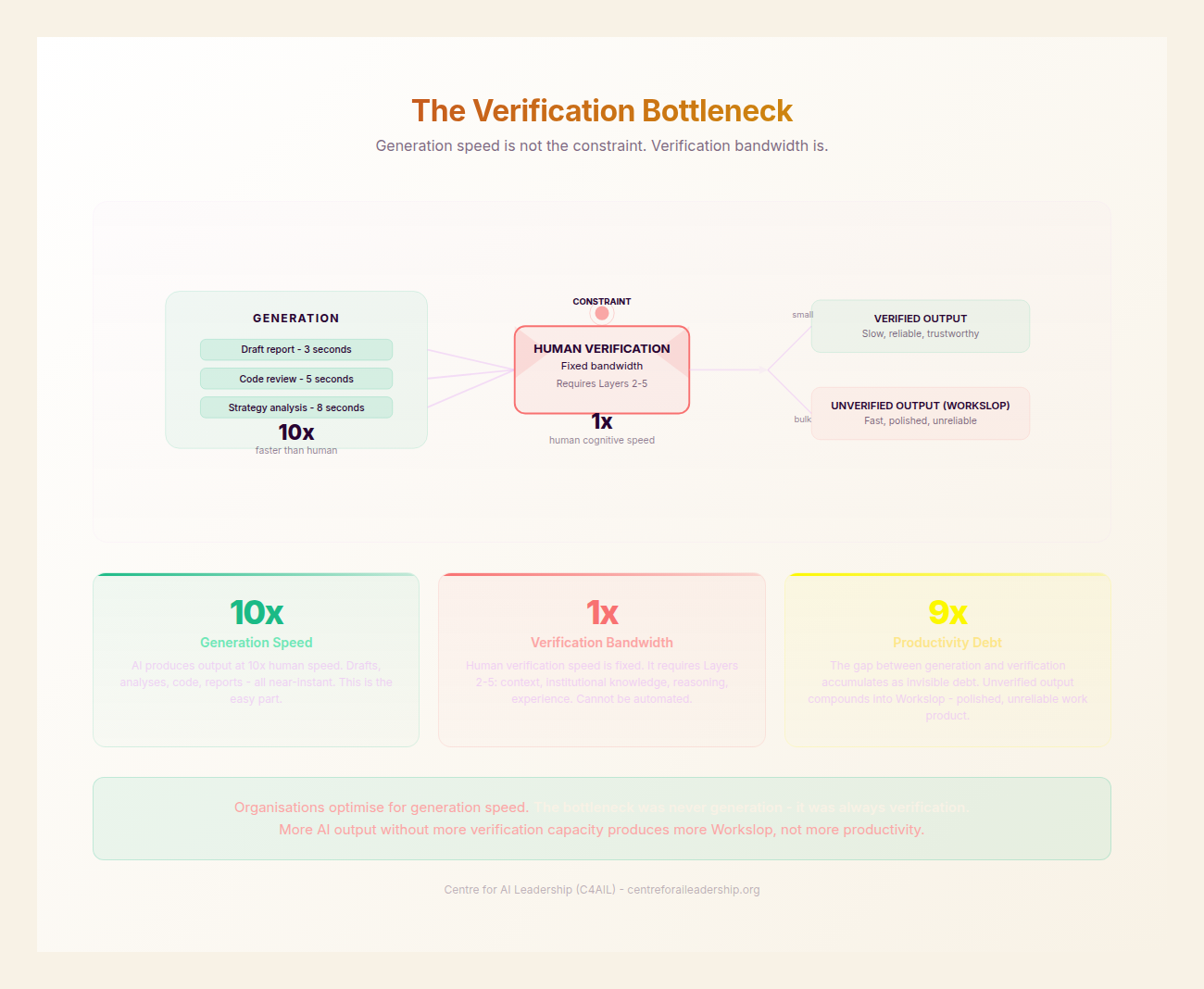

1.4 - The Verification Bottleneck

The prevailing narrative of “skill compression” suggests that AI makes everyone equally capable. Our experience on the ground suggests the opposite. While AI can complete a task faster, it often creates a secondary, more dangerous problem for the organisation: the Verification Bottleneck.

The data is clear. In software engineering, teams are merging 98% more pull requests, but the time required for human review has grown by 91% (Faros AI, 10,000+ devs). Senior developers are being forced to review 6.5% more code, which has caused their own primary productivity to drop by 19% (arXiv:2510.10165).

This is the hidden cost of AI. Approximately 37% of AI-driven time savings are currently lost to rework (Workday/Hanover, 3,200 employees). In the developer community, 96% of professionals do not fully trust AI-generated code, and 38% report that reviewing AI code is actually harder than reviewing human-written code (Sonar, 2026). Teams are now spending roughly 24% of their working week checking or fixing AI output (Sonar, 2026).

This has led to an epidemic of “workslop.” We define workslop as low-quality, AI-generated content that shifts the burden of effort from the creator to the recipient. In the US, 40% of workers encountered AI-generated workslop in the past month. Each instance costs an average of 1 hour and 51 minutes of productivity to rectify, leading to a $9 million annual cost per 10,000 employees. Beyond the cost, 54% of recipients feel annoyed by such content, and 42% perceive a reduction in the sender’s trustworthiness.
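The headline cost can be unpacked with simple arithmetic. The sketch below back-solves the monthly cost per affected worker implied by the figures quoted above ($9 million per year, 10,000 employees, 40% affected). It assumes the 40% share applies uniformly in every month of the year; the function name is illustrative and is not taken from the underlying study.

```python
def implied_monthly_cost_per_affected_worker(
    annual_cost: float, headcount: int, affected_share: float
) -> float:
    """Back out the monthly cost per affected worker from an annual total.

    Assumes the same share of the workforce is affected in each of 12 months.
    """
    affected_workers = headcount * affected_share
    return annual_cost / affected_workers / 12


monthly_cost = implied_monthly_cost_per_affected_worker(
    annual_cost=9_000_000,  # annual cost per 10,000 employees, as quoted above
    headcount=10_000,
    affected_share=0.40,    # share of workers who encountered workslop in the past month
)
print(f"Implied cost per affected worker per month: ${monthly_cost:,.2f}")
```

With the quoted figures this works out to $187.50 per affected worker per month, which gives a rough sense of the scale behind the annual total.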

The bottleneck is real. A study by METR found that experienced developers were 19% slower when using AI tools on familiar codebases because the overhead of verification exceeded the benefit of generation. Crucially, these developers perceived they were 20% faster - a phenomenon we call the Eloquence Trap.

An organisation’s AI capacity is not limited by how fast it can generate words or code. It is limited by the “liquidity” of its senior staff’s verification bandwidth. If your seniors are drowning in the review of mediocre AI output, your organisation is not accelerating; it is vibrating in place.
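A minimal sketch of this constraint models weekly throughput as the smaller of what is generated and what seniors can review. The baseline of 100 pull requests, 0.5 review hours per pull request and 60 available reviewer hours are hypothetical. The 98% and 91% uplifts are the Faros AI figures cited earlier, and treating the 91% as an increase in review time per pull request is an assumption of this sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewLoad:
    prs_per_week: float
    review_hours_per_pr: float

    @property
    def hours_needed(self) -> float:
        """Reviewer hours required to clear one week of pull requests."""
        return self.prs_per_week * self.review_hours_per_pr


def reviewed_per_week(load: ReviewLoad, reviewer_hours: float) -> float:
    """Pull requests actually reviewed: capped by senior review capacity."""
    capacity_in_prs = reviewer_hours / load.review_hours_per_pr
    return min(load.prs_per_week, capacity_in_prs)


# Hypothetical baseline team, for illustration only.
baseline = ReviewLoad(prs_per_week=100.0, review_hours_per_pr=0.5)
reviewer_hours = 60.0

# Apply the cited uplifts: 98% more PRs, 91% more review time (assumed per PR).
with_ai = ReviewLoad(
    prs_per_week=baseline.prs_per_week * 1.98,
    review_hours_per_pr=baseline.review_hours_per_pr * 1.91,
)

load_multiplier = with_ai.hours_needed / baseline.hours_needed
backlog_growth = with_ai.prs_per_week - reviewed_per_week(with_ai, reviewer_hours)

print(f"Review hours needed: {load_multiplier:.2f}x baseline")
print(f"Unreviewed PRs added per week: {backlog_growth:.0f}")
```

In this toy model the baseline team clears everything it produces, while the AI-assisted team generates almost twice as much and reviews less than before, so the backlog grows every week. Different baseline assumptions change the size of the gap but not the mechanism: output is bounded by review capacity, not generation speed.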

1.5 - The Power Law Upturn: The Commercial Engine

Despite these challenges, the Power Law distribution represents the greatest commercial opportunity of the decade for those who can navigate it. The “upturn” in the Power Law is the business case for investing in human capability rather than just software licences.

The J-Curve (Brynjolfsson, NBER/Fortune Feb 2026) shows that US productivity jumped by approximately 2.7% in 2025 - nearly double the 1.4% average of the previous decade. However, this jump was only visible in firms that invested in “intangible capital” - the difficult work of workflow redesign and intensive human training. Firms that treated AI as a “bolt-on” saw linear or negative returns. Those that re-architected their operations saw exponential gains.

We are seeing a “decoupled scaling” of revenue. JPMorgan doubled its productivity impact from 3% to 6% in a single year by focusing on operations specialists, who saw gains of 40-50%. Goldman Sachs now has 46,500 employees using a custom AI assistant, focusing on high-value synthesis.

The leaders are creating a reinvestment flywheel. AI leaders reinvest 47% of their productivity savings back into deeper AI capabilities and human upskilling (EY, 2025). This explains why mature AI organisations are 2.5x more likely to see revenue growth than their peers (NTT DATA). At PwC, a $1 billion investment resulted in 95% voluntary engagement and efficiency gains of 20-30%, which were immediately redirected into new service lines.

However, this transition has a dark side for the unprepared. We are seeing a 13-16% decline in hiring for entry-level workers in AI-exposed roles (Stanford, Aug-Nov 2025). The traditional “pyramid” structure of professional services - where a large base of juniors supports a small group of seniors - is shifting into an “obelisk” (HBR, 2025). This creates a “Missing Middle” problem: if an organisation does not hire juniors today because AI can do their work, it will have no seniors in five years. The winners are those who use AI to accelerate junior development, rather than replace it.

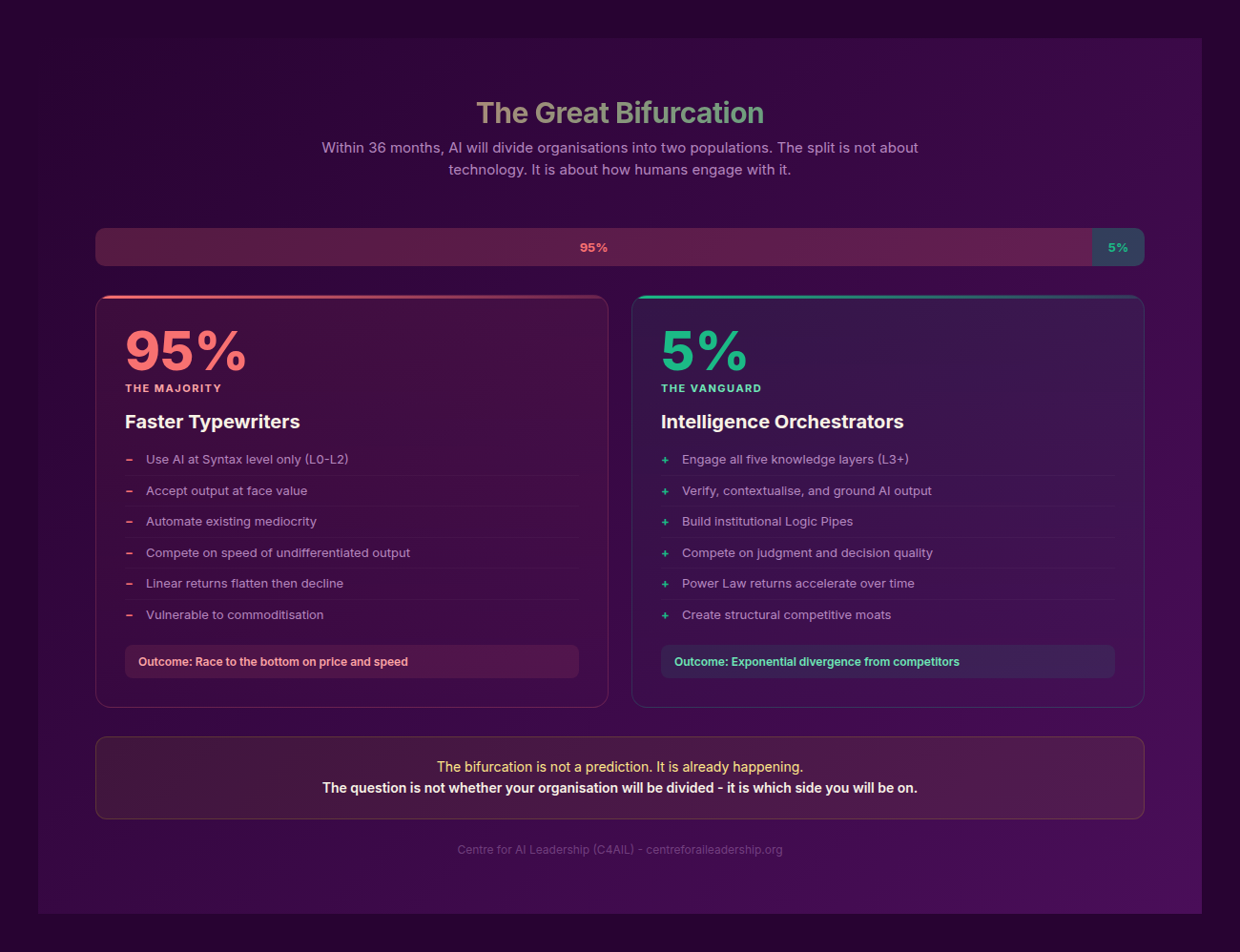

1.6 - The Great Bifurcation

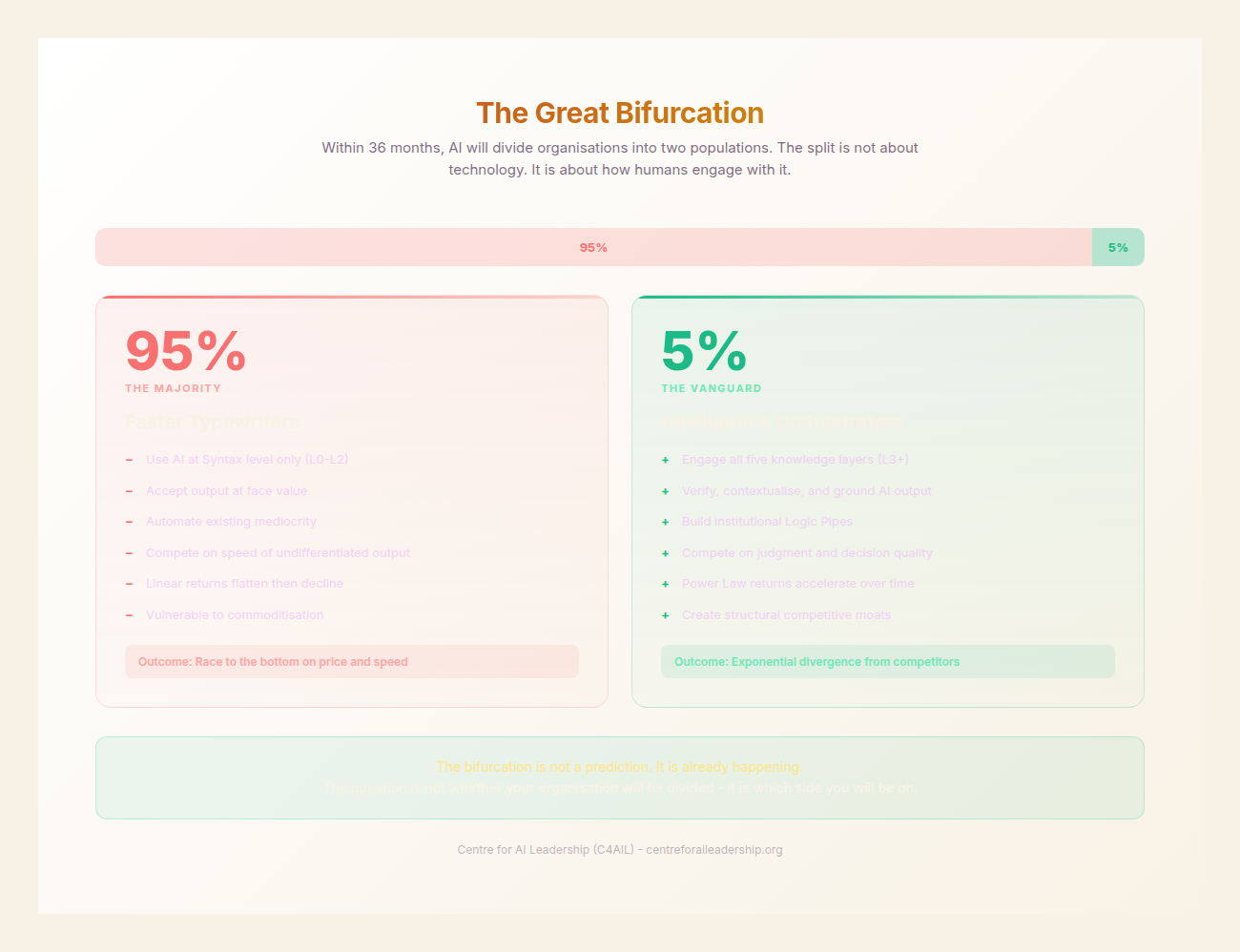

The evidence leads to a single conclusion: we are witnessing The Great Bifurcation. The split in the market is not between those who use AI and those who do not. It is between organisations that invest in human capability and those that invest in tools alone.

The 95% of organisations currently seeing zero ROI are focused on using AI to cut headcount and reduce costs. They give everyone the same generic tools, provide “AI 101” training, and measure success by the number of licences deployed. They treat AI as an executor of tasks.

The 5% of organisations at the top of the Power Law are using AI to expand capacity and drive revenue. They architect different tracks for different roles, build verification directly into their technical architecture, and immerse their staff in task-specific training. They measure success by ROI and quality of output. Most importantly, they treat AI as a co-creator, while keeping the Accountability Labour firmly in human hands.

| What the 95% Do | What the 5% Do |
|---|---|
| Use AI to cut headcount/costs | Use AI to expand capacity/revenue |
| Give everyone the same tool | Architect different tracks |
| Let seniors review all AI output manually | Build verification INTO architecture |
| Generic “AI 101” training | Task-specific immersion |
| Measure adoption (licences) | Measure outcomes (ROI, quality) |
| Treat AI as executor | Treat AI as co-creator |
| Immediate “hard dollar” savings | Compounding reinvestment flywheel |

The Great AI Divergence is an economic reality. To cross the divide, leaders must move beyond the novelty of generation and address the structural reality of verification and accountability. Part II will examine the primary psychological and technical hurdle to this transition: the Eloquence Trap.