The AI Guildhall: Building What the Factory Cannot

The operational design of the AI Guildhall — shared developmental infrastructure for building AI practitioners through progressive accountability, the Forge pedagogical engine, portfolio-based assessment, and AI-accelerated knowledge transfer.

Paper 8: The Product

Centre for AI Leadership (C4AIL) - 2026

The Trainer Paradox

Here is the problem that stops every AI capability programme before it starts: you need Level 4 practitioners to develop Level 4 practitioners. And Level 4 practitioners do not exist yet — not in meaningful numbers, not in most organisations, not in most countries.

Stage transitions in expertise take time. Moving from competent user to someone who can architect AI systems — who can build the Logic Pipes, design the CAGE templates, construct verification engines — takes two to three years of progressive, accountable practice. Moving from Architect to Orchestrator, someone who governs entire AI ecosystems and develops the people within them, takes another two to three years. And moving from Orchestrator to Trainer — someone who can reliably develop others through these same transitions — requires something that cannot be taught in a classroom: the lived experience of having made consequential decisions, failed, reflected, and grown through the full cycle.

This is a bootstrap problem. The system cannot produce Trainers without already having them.

But bootstrap problems have a known solution. You start with the small number of people who already have the capability — the handful of practitioners who reached L4-5 through years of cross-domain work, the ones who were building these skills before anyone had a name for them — and you build infrastructure around them so their capacity multiplies.

The AI Guildhall is that infrastructure.

Why Individual Organisations Cannot Solve This Alone

Imagine Company A invests two years developing an AI Orchestrator. They send this person through progressive challenges, give them real accountability, surround them with mentors, and absorb the cost of the mistakes that are an essential part of the learning process. After two years, Company B hires that person at a 40% premium.

Company A has just subsidised Company B’s AI capability. The rational response? Stop investing. Let someone else take the risk.

This is not a hypothetical. It is Kathleen Thelen’s collective action failure, playing out in real time across the AI capability landscape. When the benefits of development are portable but the costs are local, individual firms under-invest. Every company waits for someone else to build the talent pipeline. Nobody builds it. The market fails.

The problem is worse for small and medium enterprises. A 200-person firm cannot maintain what we call an AI Guildhall Studio — a supervised practice environment with L4+ practitioners available for real-time mentoring, review, and challenge. They do not have enough Orchestrators on staff. They do not have the volume of diverse AI problems to create the progressive challenge environment that development requires. They cannot absorb the cost of mistakes during the learning phase without risking the business.

Germany solved an analogous problem centuries ago with mandatory IHK and HWK chambers — shared institutions where firms collectively funded apprenticeship infrastructure, master craftsmen examined journeymen from other workshops, and the cost of developing the next generation was distributed across the economy rather than borne by whichever firm happened to hire the apprentice first.

Here is the uncomfortable historical fact: no country that destroyed its guild system has successfully rebuilt one from scratch. The nations with the strongest vocational capability today — Germany, Switzerland, Austria, the Nordic countries — are the ones that evolved their guilds rather than abolished them. Britain dismantled its guilds in the name of free markets and has spent two centuries trying to reinvent the apprenticeship infrastructure it lost.

The AI Guildhall is the modern equivalent. Not a throwback to medieval craft regulation, but shared developmental infrastructure across organisations that are individually too small or too exposed to the poaching problem to build it alone.

What the Guildhall Is

The Guildhall is not a training provider. It does not sell courses. It is not a platform where you watch videos and earn badges. There is no shortage of those, and they have not solved the problem.

The Guildhall is a developmental environment — a space, both physical and virtual, where practitioners develop through progressive accountability rather than progressive content consumption. The difference matters. Content consumption produces people who know about AI. Progressive accountability produces people who can be trusted with AI.

The environment provides five accountability mechanisms that individual organisations struggle to maintain alone:

Graduated autonomy. Practitioners encounter progressively harder challenges — not in a simulation, but in real work with real constraints. An Architect candidate does not study Logic Pipe design in the abstract; they build one for a real organisational problem, with a real deadline, and a real stakeholder who needs it to work.

Consequential decisions. The work has stakes. Not the artificial stakes of a graded assignment, but the genuine stakes of someone depending on the output. This is what separates development from training. You cannot develop judgment without consequences.

Reflective accountability. After-action reviews, structured peer review, and honest assessment of what went wrong. Not as a punitive exercise, but as the mechanism through which tacit knowledge — the kind that cannot be written in a manual — transfers between practitioners.

The signing moment. At defined milestones, practitioners submit portfolio work and put their name on it. Not “here is what I learned” but “here is what I built, here is my reasoning, and I stand behind it.” This is the moment where epistemic credit is claimed and judged.

Community of practice. Practitioners from different organisations working alongside each other, reviewing each other’s work, challenging each other’s assumptions. The cross-pollination is essential — an Architect who has only ever built Logic Pipes for one industry develops narrower judgment than one who has seen how the same principles apply across healthcare, finance, and manufacturing.

The physical and virtual expression of this is what we call the AI Guildhall Studio: a supervised practice space that operates in what Amy Edmondson calls the Learning Zone. High psychological safety — you can admit what you do not know without career consequences — combined with high accountability — the work is real, the review is honest, and the standards are maintained. Most organisations achieve one or the other. The Guildhall is designed to hold both simultaneously.

The Studio also serves as the Guildhall’s front door. Anyone — regardless of background or prior experience — can walk into a Studio event, bring a problem, and get guided help applying AI to solve it. The developmental function and the open-access function are not separate events; they are the same event serving two populations. Explorers get help at the stations. Practitioners staff those stations and develop through the act of facilitating. The conversion from Explorer to Practitioner happens naturally — the people who want to go deeper ask how.

What the Guildhall Is Actually Building

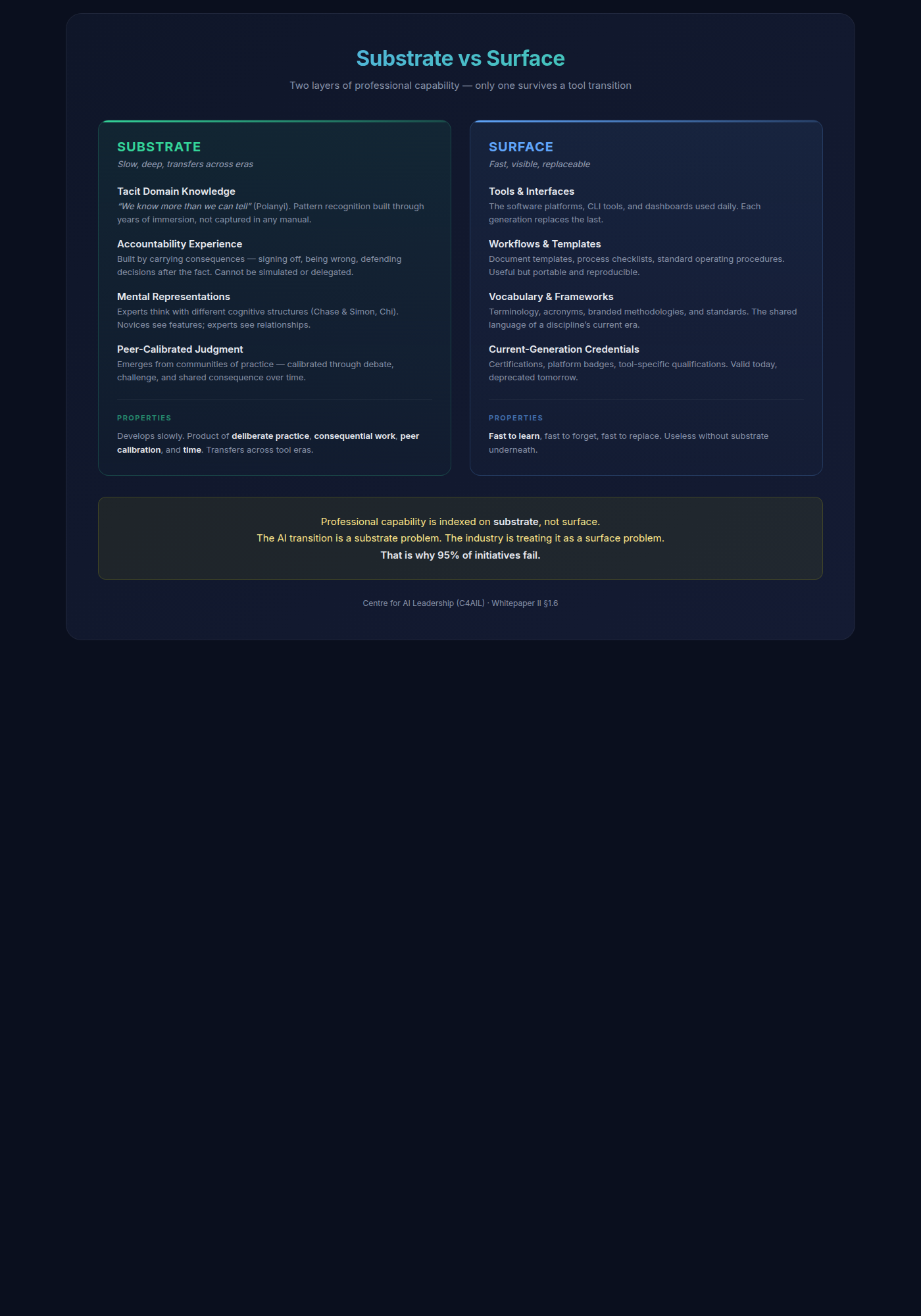

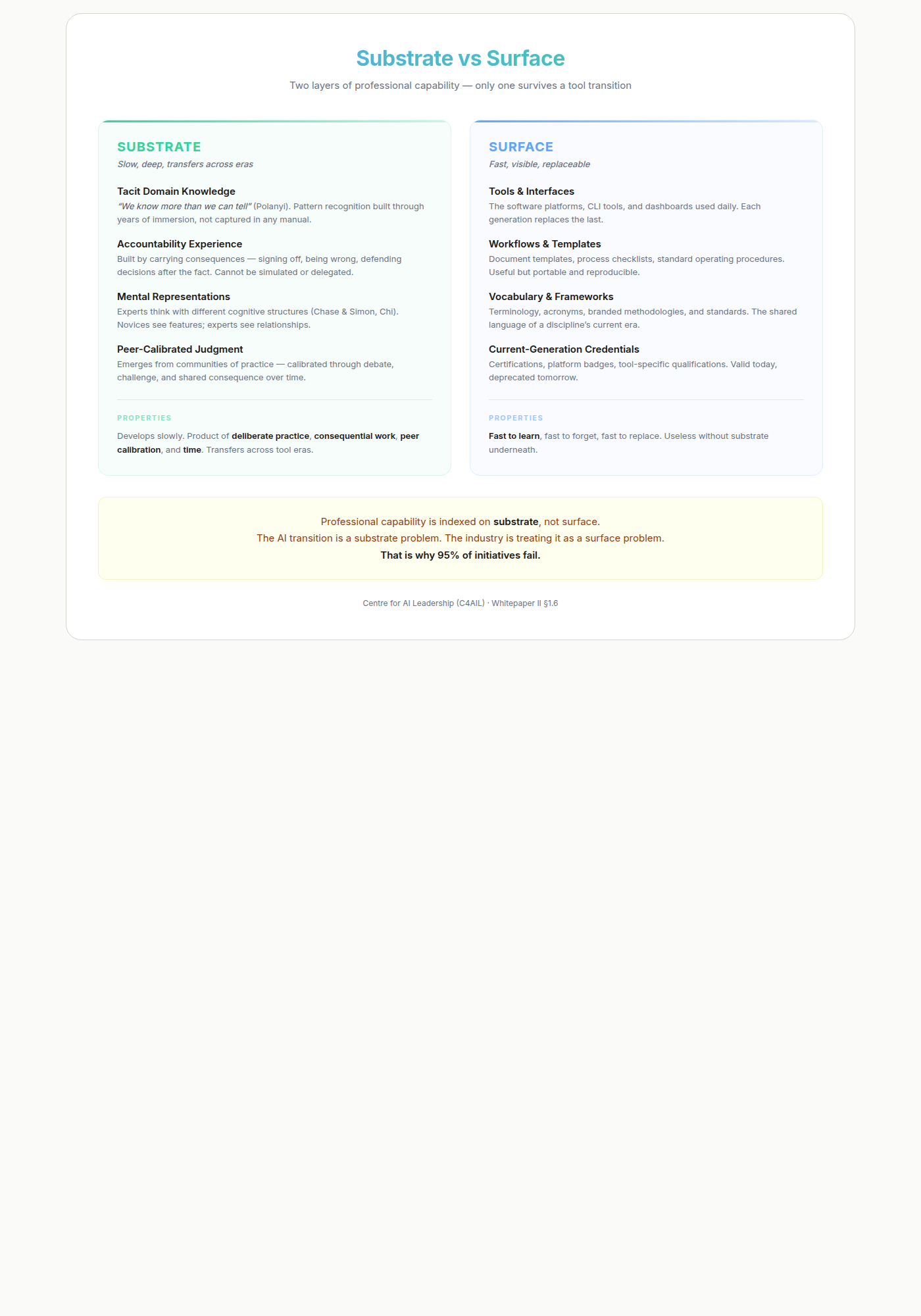

Whitepaper II §1.6 names the underlying concept: professional capability has an internal structure of substrate (tacit domain knowledge, accountability experience, mental representations, peer-calibrated judgment) and surface (tools, interfaces, workflows, vocabulary). Substrate is slow to build and transfers across tool eras; surface is fast to learn and useless without substrate. The AI transition is a substrate problem that the industry is treating as a surface problem — which is why 95% of initiatives fail.

The Guildhall, in one line: substrate infrastructure. Everything else (the Studio, the portfolio, the five accountability mechanisms, the cross-organisational community) is the means by which substrate is developed, ported, calibrated, and entrusted.

This splits into two services that share the same infrastructure but operate on very different populations.

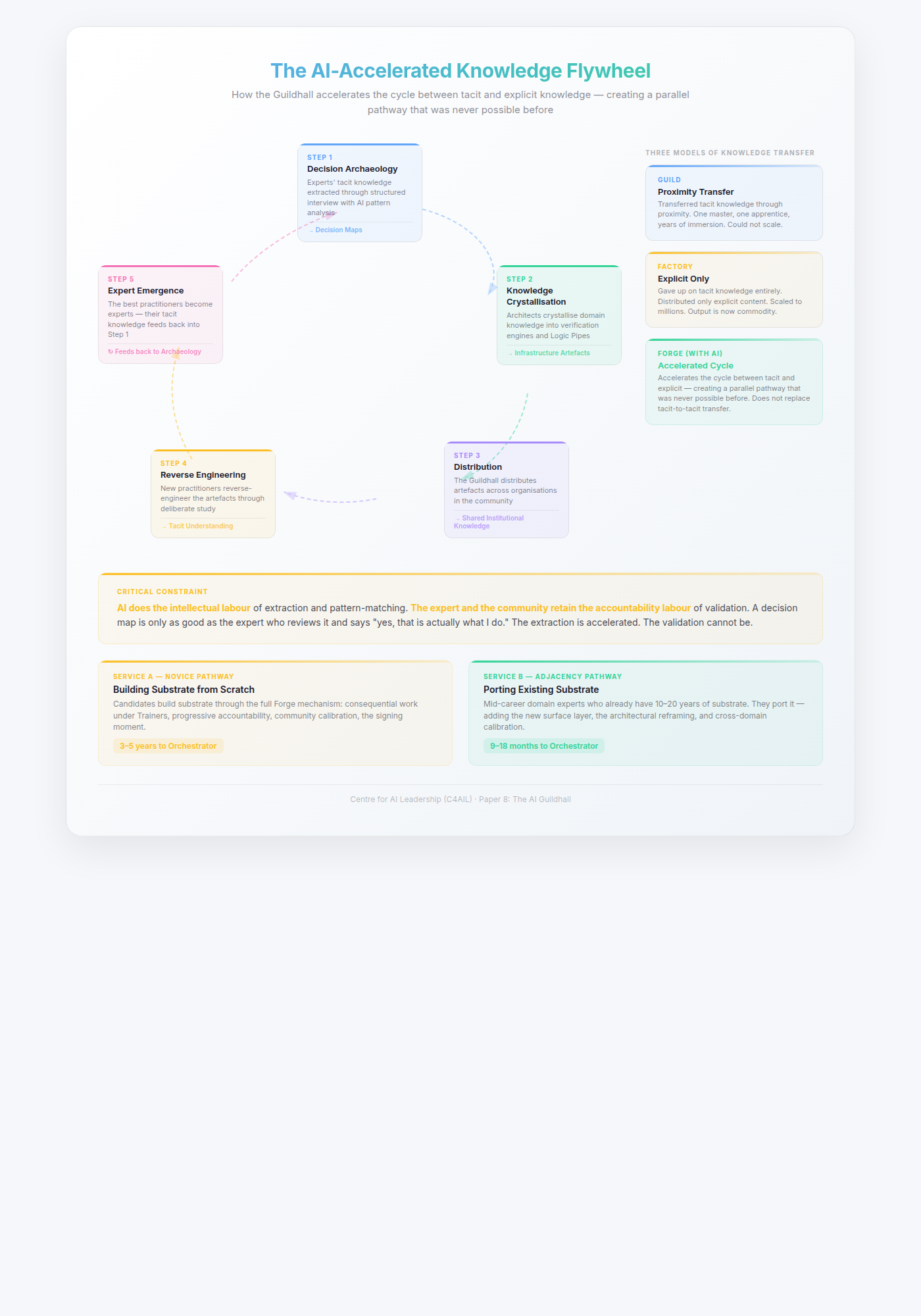

Service A — Substrate development (Novice Pathway). Candidates who do not yet have substrate build it through the full Forge mechanism: consequential work under Trainers, progressive accountability, community calibration, the signing moment. The timeline is 3-5 years to Orchestrator, 5+ years to Trainer. This is the classical capability pipeline.

Service B — Substrate porting (Adjacency Pathway). Mid-career domain experts — senior analysts, experienced lawyers, veteran auditors, practising clinicians, senior engineers — who already have 10-20 years of substrate in their field. They are not building substrate; they are porting it. What they need is the new surface layer, the architectural reframing from execution to design to governance, and cross-domain calibration. The timeline is 3-6 months to Architect and 9-18 months to Orchestrator, because the slow layer (substrate) is already there and only the fast layer (surface) is being added. Whitepaper III §5.2.1 develops this pathway in full.

The practical significance is that Service B — adjacency — is the Guildhall’s largest near-term addressable population and the fastest route to useful capacity. Most organisations are sitting on a stock of mid-career domain experts whose substrate is a strategic asset currently being written off because surface-level AI upskilling courses cannot see it. The Guildhall is designed to see it, port it, and calibrate it against cross-organisational peers in a way no internal programme can. Service A remains essential for the long run — and for new domains where no adjacent expert population exists — but it is a decade-scale investment, not a near-term capacity move.

Everything that follows in this paper — the Forge mechanism, the Progression Model, the Portfolio System, the observation architectures, the AI-accelerated flywheel — is the operational specification for how the Guildhall delivers both services.

The Forge as Pedagogical Engine

The history of professional education has produced two models, and both have failed.

The Guild integrated everything — tacit knowledge, explicit knowledge, professional identity — in a single environment. Master and apprentice, community of practice, consequential work. It produced the full stack. It could not scale. One master, a few apprentices, geographic lock.

The Factory scaled the explicit layer. Bloom’s Taxonomy was the engineering solution for expert scarcity: codify what can be codified, build curricula, standardise assessments, deliver to millions. This was necessary. But the factory produces intellectual labour only — no tacit capability, no professional identity, no accountability. And its primary output — the explicit knowledge transfer that justified its existence — is now commodity. AI makes explicit-to-explicit knowledge transfer essentially free.

The guild cannot scale. The factory’s output is free. The Guildhall operates a third model.

The Forge. Raw material transformed through heat and pressure. Constraint is the mechanism, not the obstacle. High risk, high reward — what comes out is stronger than what went in.

The Forge is not a theory. It is an empirically observed pattern. Mongolian wrestlers who dominated Japanese sumo. Cuban boxers who won 41 Olympic golds under a trade embargo. Japanese distillers whose whisky now outscores Scotch. Korean entertainment executives who studied Motown, systematised what Motown did intuitively, and created K-pop. Nigerian Scrabble players who reframed a vocabulary game as mathematics and beat native English speakers. In every case, the periphery surpassed the centre. In every case, the mechanism was the same — and it maps directly to how the Guildhall develops practitioners.

Seven Steps — and How the Guildhall Operationalises Each

Step 1: Intellectualise. Codify what can be codified. Make the organic reproducible. Without intellectualisation, you get talent but not a pipeline.

In the Guildhall: This is the Floor layer. AI literacy, structured frameworks, the C4AIL maturity model — the explicit foundation on which everything else builds. CompTIA AI Essentials is the waypoint. The Factory got this step right. We keep it.

Step 2: Reverse-engineer expert output. Study what experts do — their actual decisions, architectures, approaches — not pre-digested textbook summaries. The periphery’s distinctive capability: conscious study of what the centre does unconsciously.

In the Guildhall: Architect candidates study real Logic Pipes, real CAGE templates, real verification engines built by L4+ practitioners — not textbook examples. They analyse why this design choice was made, what would break if it were different, what the designer was seeing that the documentation does not capture. The Guildhall distributes anonymised, abstracted artefacts from real organisational deployments across its membership — each one a piece of expert output available for reverse engineering.

Step 3: Convert through deliberate practice. Ericsson’s deliberate practice combined with Chi’s self-explanation effect. The learner must generate explanations, not receive them. Exams test exam-taking. Deliberate practice builds capability.

In the Guildhall: Architect candidates do not study Logic Pipe design in the abstract. They build one for a real organisational problem. Then they explain their design choices to peers and mentors — not to demonstrate knowledge, but because the act of generating the explanation is itself the conversion mechanism. The Studio is the primary practice space.

Step 4: Build community. When no single master is available, the community becomes the developmental environment. Brian Eno called this scenius — communal genius that cannot emerge from individuals working alone.

In the Guildhall: This is the core infrastructure the Guildhall provides. Cross-organisational peer networks, after-action review circles, the community of practice that no single firm can sustain. The Architect at a logistics company sees how the Architect at a bank solved a structurally similar problem. The Orchestrator at a hospital compares governance models with the Orchestrator at a law firm. The cross-pollination produces broader judgment than any single organisation’s internal community could generate.

Step 5: Create consequential stakes. Real outcomes with real consequences — including the reality that luck plays a part. Professional work involves randomness; sanitised assessments remove it.

In the Guildhall: The work is real. Architect candidates build for real stakeholders with real deadlines. The portfolio contains real deployments with real outcomes — including the failures. The signing moment — “I built this, I stand behind it” — creates stakes that no simulated exercise can replicate.

Step 6: Preserve expert access for calibration. Not for teaching — many experts cannot articulate how they got there. For the entrustment question: “would I trust this person?” The expert’s job in the Forge is to say “yes” or “not yet.”

In the Guildhall: L4+ practitioners from different organisations review portfolios, assess readiness, and make entrustment decisions. They are not grading. They are answering the question that only someone who has done the work can answer: would I trust this person with my clients, my systems, my reputation? This is ten Cate’s Entrustable Professional Activities model, transplanted from medical education into the AI capability domain.

Step 7: Separate measurement from development. When the institution that teaches also certifies, the curriculum collapses to what the test measures. Germany’s IHK chambers examine; the Berufsschule teaches. Medical residency separates training from licensing. The Factory merged them, and the tacit dimension was lost.

In the Guildhall: The organisation develops its people. The Guildhall assesses them. The practitioners whose portfolios are reviewed at Level 3-5 are developed by their own organisations and mentors — the Guildhall provides the external assessment infrastructure, the cross-organisational review panels, and the standards. This structural separation is what prevents the Guildhall from becoming another factory.

The full theoretical argument — ten empirical cases across six domains, the community infrastructure prerequisite, the philosophical framework, and the honest constraints — is developed in Whitepaper IV: The Forge — Professional Education When Experts Are Scarce and Content Is Free.

The Progression Model

The Guildhall maps to the C4AIL maturity framework (L0-L6) but translates it into a concrete developmental pathway — what you do, how long it takes, and what signals readiness for the next stage.

Floor Users: Entry (3-6 months)

Practitioners enter the Guildhall for AI literacy and validation skills. They learn to recognise what AI actually does versus what it appears to do — the Eloquence Trap, the Reliability Trap, the Confidence Plateau. They develop the basic discipline of verifying AI output against domain knowledge rather than accepting fluent text at face value.

The waypoint here is CompTIA AI Essentials — an industry-recognised credential that validates foundational AI literacy. It is not the destination. It is proof that you have built the floor.

Translator Development (6-12 months)

Translators are bilingual. They speak the language of their domain — finance, healthcare, law, engineering — and they speak enough AI to commission, interrogate, and challenge technical work. They do not need to build the systems. They need to know when a system is being built badly.

The Guildhall runs bilingual capability workshops: structured exercises where domain experts and AI practitioners work the same problem from different angles and learn to communicate across the gap. The Translator does not become a technologist. They become a leader who cannot be fooled.

Architect Pipeline (12-24 months)

This is where the Guildhall’s infrastructure becomes essential. Architect candidates build real things under mentorship: Logic Pipes that structure AI workflows for specific organisational problems, CAGE templates that constrain AI output within domain-appropriate boundaries, verification engines that check AI work against ground truth.

The work is mentored by L4+ practitioners — Orchestrators and Trainers who review designs, challenge assumptions, and ensure that the Architect is developing judgment, not just technique. The waypoint is CompTIA AI Architect+ — an industry credential that validates the ability to design AI systems, not merely use them.

Assessment is portfolio-based. What we call Level 3 Submissions: real work, annotated with reflection, reviewed by practitioners from outside the candidate’s own organisation. No exam can test whether someone can build a system that works under pressure. A portfolio can.

Orchestrator Development (2-3 years)

Orchestrators govern. They do not just build AI systems; they build the organisational structures — governance frameworks, quality assurance processes, human oversight mechanisms — that make AI systems trustworthy at scale. This is system-level thinking, and it takes years of progressive accountability to develop.

The Guildhall provides this through what amounts to a structured residency: Architect-level practitioners take on progressively larger scopes of responsibility, moving from single-workflow governance to department-level and eventually organisation-level AI oversight. They fail. They reflect. They try again with better judgment.

Assessment at this level is what we call Level 4-5 Reviews: portfolio-based, peer-reviewed by other Orchestrators, and evaluated not just on technical correctness but on governance quality — did this person build something that an organisation can trust?

Trainer Pipeline (18-30 months adjacency / 5+ years novice)

The scarcest resource. Trainers are Orchestrators who have demonstrated the additional capability of developing others. Not teaching — developing. The distinction is critical. Teaching transfers knowledge. Development builds judgment, and judgment cannot be transferred through instruction alone.

Trainers in the Guildhall carry dual reporting: operational accountability for the AI systems they govern, and developmental accountability for the practitioners they are growing. This dual burden is what makes the Trainer role so demanding and so scarce.

The timeline is honest, and it splits along the substrate question. Floor capability in 3-6 months for everyone. Above the Floor, the pathway depends on whether the candidate is building substrate or porting it (see “What the Guildhall Is Actually Building” above and Whitepaper III §5.2.1).

Novice Pathway (substrate development): Translators in 6-12 months. First Architects in 12-24 months. First Orchestrators in 2-3 years. A functioning Trainer pipeline in 5+ years. This is the classical developmental timeline and it cannot be compressed — it is constrained by the rate at which substrate forms under consequential stakes.

Adjacency Pathway (substrate porting): Mid-career domain experts with 10-20 years of existing substrate reach Architect in 3-6 months, Orchestrator in 9-18 months, and Trainer readiness in 18-30 months. This is not a shortcut; it reflects the fact that the slow layer is already built and only the fast layer is being added.

Systemic change — cultural shift, functioning cross-organisational supply of Trainers, the Guildhall operating at steady state — takes a decade regardless, because it is constrained by the rate at which first-generation Trainers can be found and the number of mentees each can hold. Anyone promising faster across the board is selling you content consumption, not capability development. Anyone denying the adjacency track exists is wasting the strategic asset the organisation already has.

The Portfolio System

Medieval guilds required a masterpiece — a work of sufficient quality that the guild’s masters would accept the apprentice as a peer. Not a test of what you know. A demonstration of what you can make, judged by people whose own reputation depends on maintaining the standard.

The AI Guildhall’s portfolio system is this idea, modernised.

Exams test reception — can you recognise the right answer when you see it? Portfolios test creation — can you produce work that meets the standard when no one gives you the answer to recognise? The distinction maps to an ancient taxonomy: episteme is knowledge (do you know?), techne is skill (can you do?), but phronesis is practical wisdom (would we trust what you make?). Exams test episteme. Practical exercises test techne. Only portfolios test phronesis — and phronesis is what the AI economy actually needs.

A portfolio in the Guildhall contains real work with real consequences. Not case studies. Not simulations. Actual Logic Pipes built for actual organisations, CAGE templates deployed in actual workflows, governance frameworks implemented in actual teams. Each piece is annotated with structured reflection: what the practitioner intended, what happened, what went wrong, and what they would do differently. The reflection is not optional decoration. It is the primary evidence of judgment.
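The structure of a portfolio entry described above can be sketched as a small data model. This is a minimal illustration, not the Guildhall's actual schema: the class names, field names, artefact labels, and the `reviewable` helper are all hypothetical, chosen only to mirror the four reflection prompts in the text.

```python
from dataclasses import dataclass


@dataclass
class Reflection:
    """The four reflection prompts named in the text (field names are assumptions)."""
    intended: str
    happened: str
    went_wrong: str
    would_do_differently: str

    def is_complete(self) -> bool:
        # Reflection is primary evidence, so an empty answer counts as missing.
        return all(
            answer.strip()
            for answer in (
                self.intended,
                self.happened,
                self.went_wrong,
                self.would_do_differently,
            )
        )


@dataclass
class PortfolioEntry:
    title: str
    artefact_type: str  # hypothetical labels, e.g. "logic_pipe" or "cage_template"
    reflection: Reflection


def reviewable(entries: list[PortfolioEntry]) -> list[PortfolioEntry]:
    """Keep only entries whose reflection answers all four prompts."""
    return [entry for entry in entries if entry.reflection.is_complete()]


# Illustrative entries; the second has an unfinished reflection.
entries = [
    PortfolioEntry(
        "Claims triage pipe",
        "logic_pipe",
        Reflection("Cut triage time", "Time fell, error rate rose",
                   "No check on edge cases", "Add a verification step first"),
    ),
    PortfolioEntry(
        "Contract summary template",
        "cage_template",
        Reflection("Constrain summaries", "Deployed to one team", "", ""),
    ),
]
print([entry.title for entry in reviewable(entries)])
```

A completeness check like this can only gate submission. Whether the reflection shows judgment remains a question for the human review panel.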

Review is conducted by L4+ practitioners from different organisations — people who have no institutional loyalty to the candidate, no incentive to be generous, and whose own credibility is staked on maintaining the standard. They are not grading. They are answering a single question: would we trust this person to do unsupervised work at this level?

This is the signing moment. The point where the community judges whether a practitioner has earned the right to practise without a safety net. It is uncomfortable by design. It is what makes the credential mean something.

Observation Architectures: Measuring What Cannot Be Tested

The standard objection arrives here: how do you know it works? How do you measure tacit capability — the judgment, the taste, the “would I trust this person” — without collapsing it into the explicit proxies that destroyed every previous measurement system?

This is the right question. It is also a question that has been answered, repeatedly, across multiple fields — in ways that most education systems have never examined.

What the Evidence Shows

The Guildhall’s assessment design draws on structures that have been measuring tacit capability for decades, sometimes centuries:

The Meisterstück (German master craftsman examination). An aspiring master produces a masterpiece — an original work judged by elder guild members from the Handwerkskammer. The master assessors do not check against a rubric. They see whether the candidate understands material behaviour, can solve unexpected problems during creation, and produces work that shows feel for the craft. The integrated demonstration reveals what no checklist captures. The Meisterstück has resisted Goodhart corruption for centuries because you cannot fake a masterpiece in front of masters with decades of experience.

The War Office Selection Boards (UK, 1942). When Britain’s officer selection system was producing 40%+ failure rates at training units, Wilfred Bion and Eric Trist from the Tavistock Clinic group redesigned it. Their innovation: leaderless group tasks observed by trained psychologists. No designated leader. The observers watched how candidates balanced individual performance with group support — a tacit capability invisible on paper but observable in structured group situations. Failure rates dropped to 8%. This became the ancestor of every modern assessment centre worldwide.

Entrustable Professional Activities (medical education). Olle ten Cate’s framework asks supervising physicians a single question: “Would I trust this trainee to perform this activity unsupervised?” The question accesses the expert’s own tacit knowledge as the measurement instrument. The supervisor cannot always articulate why they would or would not trust someone — but the judgment itself shows high reliability across studies. The expert is the sensor. The structure makes the reading systematic.

Morbidity and Mortality conferences (medicine). Real cases, peer judgment, no formal assessment target. Charles Bosk’s research found that M&M primarily surfaces normative errors — failures of character and diligence — not technical ones. It is the most powerful tacit assessment mechanism in medicine, precisely because it was never designed as assessment. There is no target to teach to.

The Formality-Sensitivity Paradox

The cross-field evidence reveals a pattern that governs the Guildhall’s design: the mechanisms that most reliably access tacit capability are the least formal. The most formal mechanisms are the most susceptible to Goodhart corruption.

OSCEs, medicine’s standardised clinical exams, do not measure tacit capability. Brian Hodges demonstrated in 1999 that experienced physicians score lower than students on OSCE checklists, because the checklist penalises the selective efficiency that defines expertise. The UK’s NVQ system was criticised for a parallel failure: portfolio evidence gathering became an end in itself, with candidates and assessors focused on documentation rather than capability.

The Guildhall navigates this paradox through five design principles:

- No fixed rubric. Portfolio review questions are open-ended: “Would you trust this person?” “What are you seeing that concerns you?” “What would make you confident?” The contexts change. The scenarios are ambiguous by design. There is no answer key to teach to.

- Expert judgment, not algorithmic scoring. The measurement instrument is a human expert making a trust decision, which itself relies on tacit knowledge. You cannot game a sensor you cannot model.

- Trajectories, not thresholds. The portfolio is not pass/fail at a single point. It is directional: a body of work over time. The practitioner whose failures become more sophisticated, who self-corrects before external feedback, who starts anticipating consequences two steps ahead: these trajectories are visible without requiring the practitioner to articulate their development. You cannot cram for a trajectory. You cannot fake a growth curve that spans years of real decisions.

- Structural separation. The organisation develops. The Guildhall assesses. The practitioner cannot optimise for the assessment because it is not a test; it is a body of work produced in one context (the workplace) evaluated by people with no stake in the training (the Guildhall panel).

- The assessment adapts. Because the Guildhall sees hundreds of portfolios across organisations, patterns emerge. If practitioners start gaming one type of demonstration, the pattern shows in the data. Review panels evolve. The assessment is a living system, not a fixed instrument.

This is measurement in the scientific sense — observation under controlled conditions — not measurement in the factory sense — scoring against a predetermined rubric. The difference is everything.

The Knowledge Acceleration Engine

The Forge says the Factory’s explicit output is now free. But there is a second move: using AI to accelerate the conversion of tacit knowledge into distributable form — so the Guildhall does not just develop practitioners one at a time, but builds institutional knowledge that compounds across the entire community.

Decision Archaeology

Experts cannot tell you their decision framework. They can respond to specific cases. The pattern: present the expert with real decisions — past cases, live scenarios, edge cases — and use AI to record, transcribe, and analyse patterns across hundreds of responses.

AI identifies what the expert notices first, what they dismiss without comment (the negative space that reveals unconscious elimination), and where their reasoning diverges from textbook logic. The output is a decision map — not the expert’s post-hoc rationalisation, but the actual pattern extracted from observed decisions.
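The tallying step behind a decision map can be sketched in a few lines. This is an illustration under stated assumptions, not the Guildhall's method: it assumes the recording, transcription, and coding of each expert response has already happened upstream, and the record shape (`noticed_first`, `dismissed`) and the function name `build_decision_map` are hypothetical.

```python
from collections import Counter


def build_decision_map(responses: list[dict]) -> dict:
    """Tally what the expert noticed first and what they dismissed."""
    noticed_first = Counter(r["noticed_first"] for r in responses)
    dismissed = Counter(option for r in responses for option in r["dismissed"])
    return {
        "cases": len(responses),
        "noticed_first": noticed_first.most_common(),
        "dismissed_without_comment": dismissed.most_common(),
    }


# Hypothetical coded responses from one expert across three cases.
responses = [
    {"noticed_first": "counterparty", "dismissed": ["price", "timing"]},
    {"noticed_first": "counterparty", "dismissed": ["price"]},
    {"noticed_first": "jurisdiction", "dismissed": ["price"]},
]
decision_map = build_decision_map(responses)
print(decision_map["noticed_first"][0])
print(decision_map["dismissed_without_comment"][0])
```

Frequency counts are the crudest possible pattern. They give the expert something concrete to confirm or reject, which is the step that cannot be automated.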

These decision maps become Guildhall artefacts. Shared across organisations, reviewed by other experts (“Is this actually what I do?”), refined over time. Each one is a piece of tacit knowledge that now exists outside the expert’s head — available for reverse engineering (Step 2 of the Forge) by the next generation of practitioners.

Verification Engine as Knowledge Crystallisation

When an Architect builds a verification engine — the deterministic check that validates AI output against domain rules — they are necessarily intellectualising tacit knowledge. The compliance expert who “just knows” when a regulatory interpretation is dangerous — to build the engine, someone must ask: what specifically triggers that alarm? What are the boundary conditions? What is the rule behind the intuition?
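A deterministic check of this kind can be sketched as a list of named rules, each pairing a testable condition with the intuition it makes explicit. This is a minimal sketch, not a C4AIL specification: the `Rule` class, the `verify` function, the two example rules, and the shape of the AI output (a dict with `text` and `cited_clauses`) are all assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    name: str
    rationale: str  # the expert intuition the rule makes explicit
    passes: Callable[[dict], bool]


def verify(output: dict, rules: list[Rule]) -> list[str]:
    """Return the names of failed rules; an empty list means the output passed."""
    return [rule.name for rule in rules if not rule.passes(output)]


# Hypothetical rules; real ones would come from interviewing the domain expert.
RULES = [
    Rule(
        "cites_a_clause",
        "An interpretation with no cited clause cannot be audited.",
        lambda out: len(out.get("cited_clauses", [])) > 0,
    ),
    Rule(
        "no_absolute_guarantee",
        "Absolute language in a regulatory reading is an alarm trigger.",
        lambda out: "guaranteed" not in out.get("text", "").lower(),
    ),
]

draft = {"text": "This structure is guaranteed compliant.", "cited_clauses": []}
print(verify(draft, RULES))
```

Keeping the rationale next to each check is the point of the design: the rule records not only what is tested but which piece of expert judgment it stands in for.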

Every Logic Pipe built is institutional knowledge crystallised. Every CAGE template is expert judgment made legible. Every verification rule is tacit knowledge that now exists outside the expert’s head. The act of building Forge infrastructure produces intellectualised knowledge as a byproduct.

The Guildhall distributes these artefacts across its membership — anonymised, abstracted, available as reference patterns. The verification engine built by a compliance Architect at one bank becomes a starting point for the compliance Architect at an insurance company. Not copied (context matters), but studied, reverse-engineered, adapted.

Community Calibration Loops

AI can partially scale the scarce expert calibration function:

- Divergence detection. A junior’s decision can be compared against extracted expert decision maps, not for correctness (AI cannot judge that) but for divergence. “Your approach diverges from the expert pattern in these three ways” gives the junior something to explain and the Trainer something to probe.

- Cohort analysis. The Guildhall sees hundreds of portfolios. AI identifies common failure patterns, common growth trajectories, and common sticking points: meta-knowledge about how practitioners develop that no individual Trainer has visibility into.

- Cross-domain pattern transfer. The judgment pattern that makes a good regulatory interpreter may share structural similarities with what makes a good clinical decision-maker. AI surfaces these analogies. Humans validate whether they hold.
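The divergence-detection idea above can be sketched in a few lines. This assumes, purely for illustration, that both the expert decision map and the junior's decision are reduced to a mapping from decision point to chosen option. The function name `find_divergences` and the sample data are hypothetical.

```python
def find_divergences(expert_map: dict[str, str], junior: dict[str, str]) -> list[str]:
    """List decision points where the junior's choice differs from the expert pattern.

    This reports divergence only; it makes no claim about which choice is correct.
    """
    notes = []
    for point, expert_choice in expert_map.items():
        junior_choice = junior.get(point, "<not addressed>")
        if junior_choice != expert_choice:
            notes.append(
                f"{point}: expert pattern '{expert_choice}', junior '{junior_choice}'"
            )
    return notes


# Invented example data.
expert_map = {"first_check": "jurisdiction", "escalate": "yes", "tone": "formal"}
junior = {"first_check": "counterparty", "escalate": "yes"}
report = find_divergences(expert_map, junior)
```

The report gives the junior something to explain and the Trainer something to probe. Judging whether a divergence is a mistake or an improvement stays with the humans.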

The Flywheel

1. Experts’ tacit knowledge is extracted through decision archaeology → becomes decision maps

2. Architects crystallise domain knowledge into verification engines and Logic Pipes → becomes infrastructure artefacts

3. The Guildhall distributes these artefacts across organisations → becomes shared institutional knowledge

4. New practitioners reverse-engineer the artefacts → develop their own tacit understanding

5. The best of them become experts → their tacit knowledge feeds back into Step 1

The Guild transferred tacit knowledge through proximity — one master, one apprentice, years. The Factory gave up on tacit knowledge entirely and distributed only explicit. The Forge, with AI, accelerates the cycle between tacit and explicit — not replacing the tacit-to-tacit transfer, but creating a parallel explicit pathway that was never possible before.

The critical constraint: AI does the intellectual labour of extraction and pattern-matching. The expert and the community retain the accountability labour of validation. A decision map is only as good as the expert who reviews it and says “yes, that is actually what I do” or “no, you have captured the surface but missed the thing that matters.” The extraction is accelerated. The validation cannot be.

What the Guildhall Cannot Solve

This section exists because the Forge philosophy demands it. The Factory claims to solve the problem. The Forge names what it cannot solve.

The Guildhall is a workaround, not a cure. Germany’s guild system works because chamber membership is mandatory (no free riders), the Wanderjahr tradition provides mobility, 620,000+ voluntary associations provide the social substrate, and cultural respect for master-level practitioners is embedded across generations. These are civilisational assets built over centuries. No single institution can replicate them.

Community infrastructure cannot be built on dead ground. The Guildhall provides cross-organisational community — but it depends on practitioners who have enough unstructured time to participate, enough psychological safety to be vulnerable, and enough intrinsic motivation to develop. In societies that have consumed all available time with economic competition, graded community participation, commercialised third spaces, and regulated organic association, the soil for community is thin. The Guildhall can plant seeds. It cannot manufacture soil.

Socialisation remains scarce. The AI-accelerated flywheel supercharges three of the four modes in Nonaka’s knowledge spiral: Externalisation (tacit→explicit, via decision archaeology), Combination (explicit→explicit, now free via AI), and Internalisation (explicit→tacit, via the Forge’s deliberate practice). But Socialisation — the direct tacit-to-tacit transfer that happens through proximity, through doing, through the body knowing before the mind articulates — still requires masters, still requires time, still cannot be fully replaced. The Guildhall reduces the dependency on Socialisation. It does not eliminate it.

The timeline is real — and it is two timelines, not one. For candidates building substrate from scratch (Novice Pathway): Floor in 3-6 months, Translators in 6-12 months, First Architects in 12-24 months, First Orchestrators in 2-3 years, functioning Trainer pipeline in 5+ years. For mid-career domain experts porting existing substrate (Adjacency Pathway, see Whitepaper III §5.2.1): Architect in 3-6 months, Orchestrator in 9-18 months, Trainer in 18-30 months. Systemic cultural shift in a decade regardless. The novice timeline is a developmental reality constrained by the rate at which substrate forms under consequential stakes; the adjacency timeline reflects that the substrate is already there and only the surface is being added. Neither can be compressed further. Anyone promising faster across the board is selling content consumption, not capability development.

The poaching problem has no organisational solution. Without mandatory chambers, the free-rider problem persists. The Guildhall’s defence is to make the developmental environment itself part of the value proposition — people stay because the community, the peer network, and the progressive accountability exist nowhere else. This works for practitioners who have experienced it. It does not prevent the first defection.

These are not objections to the Guildhall. They are the boundaries of what any single institution can achieve in a world where the civilisational infrastructure that produced capable professionals has been systematically dismantled. Naming them honestly is stronger than pretending otherwise. The Guildhall is the best available response — shared developmental infrastructure for organisations that are individually too small, too exposed to collective action failure, or too late to the game to build it alone. It is not a replacement for the centuries of institutional evolution that produced Germany’s dual system. It is a genuine workaround for societies that destroyed theirs.

The Partnership Model

No single organisation has everything the Guildhall requires. The model depends on a partnership where each contributor brings what the others lack.

C4AIL provides the theoretical framework — the maturity model (L0-L6), the diagnostic instrument (assess.c4ail.org), the pedagogical architecture, and the portfolio assessment methodology. C4AIL’s role is to define what “good” looks like at each stage and to ensure that the developmental pathway is grounded in research rather than marketing.

CompTIA provides workforce methodology, cross-industry reach, and certification waypoints. AI Essentials validates the floor. AI Architect+ validates the Architect transition. SecAI+ validates the security dimension. CompTIA’s infrastructure — exam development, global delivery, employer recognition — gives practitioners credentials that travel across borders and industries.

CirroLytix/Ligot provides services economy expertise and research into AI workforce development in the Philippines and across Southeast Asia. Their work on how AI capability develops in emerging economies — where the guild infrastructure is being built from scratch rather than evolved from existing institutions — informs the Guildhall’s design for contexts beyond the developed world.

ClassDo provides programme development and credentialing innovation. Their expertise in structuring learning pathways and building assessment systems that work across institutional boundaries is essential for a model where practitioners move between organisations while maintaining a coherent developmental trajectory.

Kingston International College provides institutional accreditation and employer networks. The Guildhall needs academic credibility to operate within regulated training frameworks, and it needs employer relationships to ensure that the developmental pathway connects to real career progression.

The Guildhall operates at the intersection of these contributions — shared infrastructure that none of the partners could build alone, producing outcomes that none of them could achieve separately.

The Business Model

The Guildhall’s economics must be sustainable without depending on the goodwill of any single organisation. The model has five revenue streams, designed to align incentives across all participants.

Enterprise subscription. Annual access for a cohort of employees to the full Guildhall infrastructure: the Studio, the portfolio platform, structured mentoring, community of practice. Priced per cohort, not per seat, to encourage organisations to send teams rather than individuals.

SME pooled access. Multiple small firms share Trainer capacity and development infrastructure through a consortium model. A 20-person cybersecurity firm and a 15-person legal tech company cannot each maintain a Studio, but together with three or four other firms they can sustain one collectively. This is the model of Germany’s IHK (the chambers of industry and commerce), applied to AI.

Individual practitioner membership. For professionals investing in their own development — independent consultants, career changers, practitioners whose employers have not yet joined. Access to the community of practice, portfolio review, and the Studio on a scheduled basis.

Certification revenue. C4AIL L3-5 portfolio assessments, conducted by qualified reviewers, producing credentials with genuine market signal. The assessment is rigorous enough that passing means something, which is what makes the credential worth paying for.

Partner programme. Organisations that contribute Trainers — practitioners who spend part of their time mentoring others in the Guildhall — receive priority access to development infrastructure for their own teams. This creates a virtuous cycle: the organisations that invest most in developing the ecosystem get the most back from it.

The economics mirror the German chamber model: the net cost of participation is low because practitioners produce genuine value while developing. Architect candidates build real Logic Pipes for real problems. The Guildhall is not a cost centre that produces graduates — it is a practice environment that produces both capability and output simultaneously.

This paper describes the operational design of the AI Guildhall. For the full theoretical argument, see the C4AIL whitepaper series: “Sovereign Command: AI-Ready Leadership” (Whitepaper I), “The Labour Architecture: Redesigning Work for the AI Age” (Whitepaper II), “The Organisational Response: Job Redesign for the AI Age” (Whitepaper III), and “The Forge: Professional Education When Experts Are Scarce and Content Is Free” (Whitepaper IV). The antifragile institutional design — corruption detection, the challenge protocol, the dissolution clause, and the revenue-rigour decoupling that protects the Guildhall from following the corruption arc of every previous certification body — is detailed in a separate document available on request.

The AI Guildhall is currently in development with founding partners. If your organisation is interested in participating — as an enterprise subscriber, an SME consortium member, or a contributing partner — we would like to hear from you.

Contact: [email protected]